Linear algebra is the branch of mathematics that studies vectors, vector spaces, and linear transformations. It deals with linear equations, linear functions, and their representations through matrices and determinants. It has a wide range of applications in physics and mathematics, and it underpins machine learning and data science. This article explains what linear algebra is, its branches, and its main topics.

Linear Algebra

Let’s learn about linear algebra, including linear functions, its branches, formulas, and examples.

What is Linear Algebra?

Linear algebra is a branch of mathematics that deals with vectors, matrices, and finite- or infinite-dimensional vector spaces. It is the study of vector spaces, linear equations, linear functions, and matrices.

Linear Algebra Equations

The general linear equation is written as u1x1 + u2x2 + … + unxn = v

Where,

- u’s – represents the coefficients

- x’s – represents the unknowns

- v – represents the constant

A collection of such equations is called a system of linear equations. The left-hand side of each equation is a linear function of the unknowns:

(x1, …, xn) → u1x1 + … + unxn

Linear Algebra Topics

Below is the list of important topics in Linear Algebra.

- Matrix inverses and determinants

- Linear transformations

- Singular value decomposition

- Orthogonal matrices

- Mathematical operations with matrices (i.e. addition, multiplication)

- Projections

- Solving systems of equations with matrices

- Eigenvalues and eigenvectors

- Euclidean vector spaces

- Positive-definite matrices

- Linear dependence and independence

The foundational concepts essential for understanding linear algebra, detailed here, include:

- Linear Functions

- Vector spaces

- Matrix

These foundational ideas are interconnected, allowing for the mathematical representation of a system of linear equations. Generally, vectors are objects that can be added together and scaled, and linear functions are the maps between vectors that preserve these two operations.

Branches of Linear Algebra

Linear algebra is divided into the following branches, based on the difficulty level of the topics:

- Elementary Linear Algebra

- Advanced Linear Algebra

- Applied Linear Algebra

Elementary Linear Algebra

Elementary linear algebra covers the basic topics, such as scalars and vectors, matrices, and matrix operations.

Linear Equations

Linear equations form the basis of linear algebra and are equations of the first degree. In two variables, such an equation represents a straight line; in general, it combines constants and variables without exponents or products of variables. Solving a system of linear equations means finding the values of the variables that satisfy all equations simultaneously.

A linear equation is the simplest form of equation in algebra, representing a straight line when plotted on a graph.

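Example: 2x + 3y = 6 is a linear equation. If you have two such equations, like 2x + 3y = 6 and x – y = 1, solving them together gives the point where the two lines intersect, here (9/5, 4/5). A pair such as 2x + 3y = 6 and 4x + 6y = 12 describes the same line twice, so every point on that line is a solution.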
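As a rough illustration of solving two linear equations together, the sketch below applies Cramer's rule to a pair of equations in two unknowns. The function name `solve_2x2` and the sample system 2x + 3y = 6, x – y = 1 are chosen here for illustration only. A zero determinant signals that the two lines are parallel or coincide, as happens for 2x + 3y = 6 and 4x + 6y = 12.

```python
from fractions import Fraction


def solve_2x2(a1, b1, c1, a2, b2, c2):
    """Solve a1*x + b1*y = c1 and a2*x + b2*y = c2 by Cramer's rule.

    Returns (x, y) as exact fractions, or None when the determinant is zero
    (the lines are parallel or coincide, so there is no single intersection).
    """
    det = a1 * b2 - a2 * b1
    if det == 0:
        return None
    x = Fraction(c1 * b2 - c2 * b1, det)
    y = Fraction(a1 * c2 - a2 * c1, det)
    return x, y


# Two lines that cross at exactly one point.
intersecting = solve_2x2(2, 3, 6, 1, -1, 1)   # (Fraction(9, 5), Fraction(4, 5))

# The second equation is twice the first, so the determinant is zero.
coincident = solve_2x2(2, 3, 6, 4, 6, 12)     # None
```

Exact fractions are used instead of floats so that the result can be checked by hand.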

Advanced Linear Algebra

Advanced linear algebra covers more advanced topics, such as linear functions, linear transformations, eigenvalues, and eigenvectors.

Eigenvalues and Eigenvectors

Eigenvalues and eigenvectors are fundamental concepts in linear algebra. They offer deep insights into the properties of linear transformations. An eigenvector of a square matrix is a non-zero vector that, when multiplied by the matrix, results in a scalar multiple of itself. This scalar is known as the eigenvalue associated with the eigenvector. Eigenvalues and eigenvectors are essential in various applications, including stability analysis, quantum mechanics, and the study of dynamical systems.

Consider a transformation that changes the direction or length of vectors, except for some special vectors that only get stretched or shrunk. These special vectors are eigenvectors, and the factor by which they are stretched or shrunk is the eigenvalue.

Example: For the matrix A = [[2, 0], [0, 3]] (written row by row), the vector v = (1, 0) is an eigenvector because Av = (2, 0) = 2v, and 2 is the eigenvalue.
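The eigenvector claim in this example can be checked numerically. The following is a minimal sketch that assumes the flattened entries 2, 0, 0, 3 are read row by row as a 2×2 matrix; the helper `mat_vec` is a name introduced here, not part of any library.

```python
def mat_vec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(a * x for a, x in zip(row, vector)) for row in matrix]


A = [[2, 0],
     [0, 3]]
v = [1, 0]

Av = mat_vec(A, v)                            # [2, 0]
is_eigenvector = Av == [2 * x for x in v]     # Av equals 2v, so the eigenvalue is 2

# The second standard basis vector is also an eigenvector, with eigenvalue 3.
w = [0, 1]
Aw = mat_vec(A, w)                            # [0, 3]
```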

Singular Value Decomposition

Singular Value Decomposition (SVD) is a mathematical technique used in signal processing, statistics, and machine learning. It factors a matrix A into three matrices, A = UΣVᵀ, where Vᵀ represents a first rotation, Σ a scaling along the coordinate axes, and U a final rotation. It is useful for revealing the intrinsic geometric structure of data.

Vector Space in Linear Algebra

A vector space (or linear space) is a collection of vectors, which may be added together and multiplied (“scaled”) by numbers, called scalars. Scalars are often real numbers, but can also be complex numbers. Vector spaces are central to the study of linear algebra and are used in various scientific fields.

Linear Map

A linear map (or linear transformation) is a mapping between two vector spaces that preserves the operations of vector addition and scalar multiplication. The concept is central to linear algebra and has significant implications in geometry and abstract algebra.

A linear map is a way of moving vectors around in a space that keeps the grid lines parallel and evenly spaced.

Example: Scaling objects in a video game world without changing their basic shape is like applying a linear map.

Positive Definite Matrices

A positive definite matrix is a symmetric matrix whose eigenvalues are all positive. These matrices are significant in optimisation problems, because they guarantee that the associated quadratic form has a unique minimum.

Example: The matrix A = [[2, 0], [0, 2]] (written row by row) is positive definite because, for any non-zero vector x = (x1, x2), the quadratic form xᵀAx = 2x1² + 2x2² is positive.
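To make the quadratic form concrete, the sketch below evaluates xᵀAx for a few sample vectors. It also checks a 2×2 symmetric matrix with Sylvester's criterion (top-left entry positive and determinant positive). The function names and sample vectors are illustrative assumptions, and sampling vectors only illustrates the definition; it does not prove positive definiteness.

```python
def quadratic_form(matrix, x):
    """Return x^T A x for a square matrix given as a list of rows."""
    Ax = [sum(a * xi for a, xi in zip(row, x)) for row in matrix]
    return sum(xi * yi for xi, yi in zip(x, Ax))


def is_positive_definite_2x2(matrix):
    """Sylvester's criterion for a symmetric 2x2 matrix."""
    (a, b), (c, d) = matrix
    return b == c and a > 0 and a * d - b * c > 0


A = [[2, 0],
     [0, 2]]

samples = [[1, 0], [0, 1], [1, -1], [-3, 2]]
values = [quadratic_form(A, x) for x in samples]   # [2, 2, 4, 26]
A_is_pd = is_positive_definite_2x2(A)              # True

# A symmetric matrix with a negative eigenvalue fails the test.
B_is_pd = is_positive_definite_2x2([[1, 2], [2, 1]])  # False (determinant is -3)
```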

Matrix Exponential

The matrix exponential is a function on square matrices analogous to the exponential function for real numbers. It is used in solving systems of linear differential equations, among other applications in physics and engineering.

Matrix exponentials stretch or compress spaces in ways that depend smoothly on time, much like how interest grows continuously in a bank account.

Example: For the matrix A = [[0, −1], [1, 0]] (written row by row), the exponential e^(tA) is the rotation matrix [[cos t, −sin t], [sin t, cos t]], so the angle of rotation equals the “time” parameter t.
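One way to see the link between this matrix exponential and rotations is to sum the power series I + M + M²/2! + M³/3! + … numerically. The sketch below is a plain truncated Taylor series, chosen for readability; production libraries use more robust algorithms. The names `mat_mul` and `mat_exp` and the choice of 30 terms are assumptions made for this illustration.

```python
import math


def mat_mul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))]
            for i in range(len(X))]


def mat_exp(M, terms=30):
    """Approximate exp(M) by the first `terms` terms of its Taylor series."""
    n = len(M)
    result = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    term = [row[:] for row in result]          # current term, starts at M^0 / 0!
    for k in range(1, terms):
        term = mat_mul(term, M)                # now M^k / (k-1)!
        term = [[entry / k for entry in row] for row in term]   # M^k / k!
        result = [[result[i][j] + term[i][j] for j in range(n)]
                  for i in range(n)]
    return result


A = [[0.0, -1.0],
     [1.0, 0.0]]
t = math.pi / 2                                # rotate by a quarter turn
tA = [[t * entry for entry in row] for row in A]
R = mat_exp(tA)

# R should be close to [[cos t, -sin t], [sin t, cos t]].
expected = [[math.cos(t), -math.sin(t)],
            [math.sin(t), math.cos(t)]]
```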

Linear Computations

Linear computations involve numerical methods for solving linear algebra problems, including systems of linear equations and eigenvalue and eigenvector calculations. These computations are essential in computer simulations, optimisation, and modelling.

These are techniques for crunching numbers in linear algebra problems, like finding the best-fit line through a set of points or solving systems of equations quickly and accurately.

Linear Independence

A set of vectors is linearly independent if no vector in the set is a linear combination of the others. The concept of linear independence is central to the study of vector spaces, as it helps define bases and dimension.

Vectors are linearly independent if none of them can be made by combining the others. It’s like saying each vector brings something unique to the table that the others don’t.

Example: (1, 0) and (0, 1) are linearly independent in 2D space because you can’t create one of these vectors by scaling the other.
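For two vectors in the plane, linear independence can be tested with a 2×2 determinant: the vectors are independent exactly when the determinant of the matrix with those vectors as columns is non-zero. The helper name `independent_2d` is an assumption made for this small sketch.

```python
def independent_2d(u, v):
    """Return True when the 2D vectors u and v are linearly independent."""
    determinant = u[0] * v[1] - u[1] * v[0]
    return determinant != 0


standard_basis = independent_2d((1, 0), (0, 1))   # True
scaled_copy = independent_2d((1, 2), (2, 4))      # False, since (2, 4) = 2 * (1, 2)
```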

Linear Subspace

A linear subspace (or simply subspace) is a subset of a vector space that is closed under vector addition and scalar multiplication. A subspace is a smaller space that lies within a larger vector space, following the same rules of vector addition and scalar multiplication.

Example: The set of all vectors of the form (a, 0) in 2D space is a subspace, representing all points along the x-axis.

Applied Linear Algebra

In applied linear algebra, the topics covered are generally the practical applications of elementary and advanced linear algebra, such as the complement of a matrix, matrix factorization, and norms of vectors.

Linear Programming

Linear programming is a method to achieve the best outcome in a mathematical model whose requirements are represented by linear relationships. It is widely used in business and economics to maximize profit or minimize cost while considering constraints.

This is a technique for optimizing (maximizing or minimizing) a linear objective function, subject to linear equality and inequality constraints. It’s like planning the best outcome under given restrictions.

Example: Maximizing profit in a business while considering constraints like budget, material costs, and labor.

Linear Equation Systems

Systems of linear equations involve multiple linear equations that share the same set of variables. The solution to these systems is the set of values that satisfy all equations simultaneously, which can be found using various methods, including substitution, elimination, and matrix operations.

Example: Finding the intersection point of two lines represented by two equations.

Gaussian Elimination

Gaussian elimination is a systematic method for solving systems of linear equations. It involves applying a series of operations to transform the system’s matrix into its row echelon form or reduced row echelon form, making it easier to solve for the variables. It is a step-by-step procedure to simplify a system of linear equations into a form that’s easier to solve.

Example: Systematically eliminating variables in a system of equations until each equation has only one variable left to solve for.
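The elimination procedure described above can be sketched as follows: reduce the augmented matrix to row echelon form, then back-substitute. This is a minimal version for square, non-singular systems, using exact fractions. The function name and the sample system x + 2y = 3, 3x + y = 5 are illustrative, and numerical libraries use more careful pivoting strategies for floating-point data.

```python
from fractions import Fraction


def gaussian_elimination(A, b):
    """Solve A x = b for a square, non-singular matrix A (list of rows)."""
    n = len(A)
    # Build the augmented matrix [A | b] with exact rational entries.
    M = [[Fraction(x) for x in row] + [Fraction(rhs)]
         for row, rhs in zip(A, b)]

    for col in range(n):
        # Find a row at or below the diagonal with a non-zero entry in this column.
        pivot = next((r for r in range(col, n) if M[r][col] != 0), None)
        if pivot is None:
            raise ValueError("matrix is singular")
        M[col], M[pivot] = M[pivot], M[col]
        # Subtract multiples of the pivot row to clear the entries below it.
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            M[r] = [a - factor * p for a, p in zip(M[r], M[col])]

    # Back substitution on the row echelon form.
    x = [Fraction(0)] * n
    for i in range(n - 1, -1, -1):
        known = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - known) / M[i][i]
    return x


# x + 2y = 3 and 3x + y = 5
solution = gaussian_elimination([[1, 2], [3, 1]], [3, 5])
# solution == [Fraction(7, 5), Fraction(4, 5)]
```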

Vectors in Linear Algebra

In linear algebra, vectors are fundamental mathematical objects that represent quantities that have both magnitude and direction.

- Vector operations such as addition and scalar multiplication are core concepts in linear algebra. They are used to solve systems of linear equations, represent linear transformations, and perform matrix operations such as multiplication and inversion.

- Vectors are essential for representing the magnitude and direction of many physical quantities.

- In linear algebra, vectors are elements of a vector space that can be scaled and added. Essentially, they are arrows with a length and direction.

Linear Function

A formal definition of a linear function is provided below:

f(ax) = af(x), and f(x + y) = f(x) + f(y)

where a is a scalar, f(x) and f(y) are vectors in the range of f, and x and y are vectors in the domain of f.

A linear function is a function that preserves vector addition and scalar multiplication when mapping between two vector spaces. Specifically, a function T: V → W is considered linear if it satisfies two key properties:

| Property | Description | Equation |
|---|---|---|
| Additive Property | A linear transformation’s ability to preserve vector addition. | T(u + v) = T(u) + T(v) |
| Homogeneous Property | A linear transformation’s ability to preserve scalar multiplication. | T(cu) = cT(u) |

- V and W: Vector spaces

- u and v: Vectors in vector space V

- c: Scalar

- T: Linear transformation from V to W

- The additive property requires that T preserves vector addition: the image of the sum of two vectors equals the sum of their images. The homogeneous property requires that scaling a vector by c scales its image by c.

For example, consider a transformation T that takes a two-dimensional vector (x, y) as input and outputs a new two-dimensional vector (u, v) according to the following rule:

T(x, y) = (2x + y, 3x – 4y)

To verify that T is a linear transformation, we need to show that it satisfies two properties:

- Additivity: T(u + v) = T(u) + T(v)

- Homogeneity: T(cu) = cT(u)

Let’s take two input vectors (x1, y1) and (x2, y2) and compute their images under T:

- T(x1, y1) = (2x1 + y1, 3x1 – 4y1)

- T(x2, y2) = (2x2 + y2, 3x2 – 4y2)

Now let’s compute the image of their sum:

T(x1 + x2, y1 + y2) = (2(x1 + x2) + (y1 + y2), 3(x1 + x2) – 4(y1 + y2)) = (2x1 + y1 + 2x2 + y2, 3x1 + 3x2 – 4y1 – 4y2) = (2x1 + y1, 3x1 – 4y1) + (2x2 + y2, 3x2 – 4y2) = T(x1, y1) + T(x2, y2)

So T satisfies the additivity property.

Now let’s check the homogeneity property. Let c be a scalar and (x, y) be a vector:

T(cx, cy) = (2(cx) + cy, 3(cx) – 4(cy)) = (c(2x) + c(y), c(3x) – c(4y)) = c(2x + y, 3x – 4y) = cT(x, y)

So T also satisfies the homogeneity property. Therefore, T is a linear transformation.
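The two properties verified above can also be spot-checked numerically. The sketch below evaluates both sides of each identity for T(x, y) = (2x + y, 3x – 4y) on sample inputs. The helper names and the sample vectors are chosen here for illustration, and a check on particular numbers complements the algebraic proof rather than replacing it.

```python
def T(x, y):
    """The transformation T(x, y) = (2x + y, 3x - 4y)."""
    return (2 * x + y, 3 * x - 4 * y)


def add(p, q):
    """Component-wise sum of two 2D vectors."""
    return (p[0] + q[0], p[1] + q[1])


def scale(c, p):
    """Scalar multiple of a 2D vector."""
    return (c * p[0], c * p[1])


u, v, c = (1, 2), (3, -5), 7

# Additivity: T(u + v) should equal T(u) + T(v).
additive = T(*add(u, v)) == add(T(*u), T(*v))         # both sides are (5, 24)

# Homogeneity: T(c u) should equal c T(u).
homogeneous = T(*scale(c, u)) == scale(c, T(*u))      # both sides are (28, -35)
```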

Linear Algebra Matrix

- A matrix is a rectangular array of numbers organized in rows and columns. Letters such as a, b, and c are commonly used to represent the entries of a matrix.

- Matrices are often used to represent linear transformations, such as scaling, rotation, and reflection.

- The size of a matrix is determined by its number of rows and columns. For instance, a matrix with three rows and two columns is called a 3×2 matrix, and one with four rows and two columns is a 4×2 matrix.

- The basic matrix operations are addition, subtraction, scalar multiplication, and matrix multiplication.

- When matrices of the same size are added or subtracted, the corresponding entries are simply added or subtracted.

- Scalar multiplication involves multiplying every entry in the matrix by a scalar (a number).

- Matrix multiplication is a more complex operation: each entry of the product is the sum of the products of a row of the first matrix with a column of the second, so the number of columns of the first matrix must equal the number of rows of the second.
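The matrix operations listed above can be sketched in a few lines of plain Python, with a matrix stored as a list of rows. The function names and the sample matrices A and B are illustrative assumptions, not taken from a particular library.

```python
def mat_add(X, Y):
    """Add two matrices of the same size entry by entry."""
    return [[x + y for x, y in zip(row_x, row_y)] for row_x, row_y in zip(X, Y)]


def scalar_mul(c, X):
    """Multiply every entry of X by the scalar c."""
    return [[c * x for x in row] for row in X]


def mat_mul(X, Y):
    """Multiply X (m x n) by Y (n x p): rows of X times columns of Y."""
    if len(X[0]) != len(Y):
        raise ValueError("columns of X must match rows of Y")
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))]
            for i in range(len(X))]


A = [[1, 2],
     [3, 4]]
B = [[0, 1],
     [1, 0]]

total = mat_add(A, B)        # [[1, 3], [4, 4]]
doubled = scalar_mul(2, A)   # [[2, 4], [6, 8]]
AB = mat_mul(A, B)           # [[2, 1], [4, 3]]
BA = mat_mul(B, A)           # [[3, 4], [1, 2]]
```

Here AB and BA differ, which shows that matrix multiplication is not commutative in general.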

For example: Let’s consider a simple example to understand more, suppose we have two vectors, v1, and v2 in a two-dimensional space. We can represent these vectors as a column matrix, such as:

v1 =  , v2 =

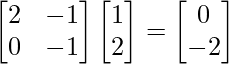

Next, we apply a linear transformation that doubles the value of the first component and subtracts the value of the second component. We can represent this transformation as a 2×2 matrix A

A =

To apply this to vector v1, simply multiply the matrix A with vector v1

Av1 =

The resulting vector, [0,-2] is the transformed version of v1. Similarly, we can apply the same transformation to v2

Av2 =

The resulting vector, [3,-4] is the transformed version of v2.

Numerical Linear Algebra

Numerical linear algebra, also called applied linear algebra, explores how matrix operations can be carried out on computers to solve real-world problems. It focuses on creating efficient algorithms for problems in continuous mathematics, such as least-squares optimization, finding eigenvalues, and solving systems of linear equations. Matrix decompositions such as eigendecomposition, singular value decomposition, and QR factorization are the main tools for these tasks.

Linear Algebra Applications

Linear algebra, with its concepts of vectors, matrices, and linear transformations, is ubiquitous in science and engineering. It provides the tools for modelling natural phenomena, optimising processes, and solving complex calculations in computer science, physics, economics, and beyond. The following are some specific applications of linear algebra in the real world.

1. Computer Graphics and Animation

Linear algebra is indispensable in computer graphics, gaming, and animation. It helps in transforming the shapes of objects and their positions in scenes through rotations, translations, scaling, and more. For instance, when animating a character, linear transformations are used to rotate limbs, scale objects, or shift positions within the virtual world.

2. Machine Learning and Data Science

In machine learning, linear algebra is at the heart of algorithms used for classifying information, making predictions, and understanding the structures within data. It’s crucial for operations in high-dimensional data spaces, optimizing algorithms, and even in the training of neural networks where matrix and tensor operations define the efficiency and effectiveness of learning.

3. Quantum Mechanics

The state of quantum systems is described using vectors in a complex vector space. Linear algebra enables the manipulation and prediction of these states through operations such as unitary transformations (evolution of quantum states) and eigenvalue problems (energy levels of quantum systems).

4. Cryptography

Linear algebraic concepts are used in cryptography for encoding messages and ensuring secure communication. The classical Hill cipher, for example, encrypts blocks of letters by multiplying them by a key matrix modulo 26, and modern lattice-based cryptosystems rest on linear algebra over the integers. RSA, by contrast, relies on modular arithmetic and the difficulty of factoring large numbers rather than on linear algebra.

5. Control Systems

In engineering, linear algebra is used to model and design control systems. The behavior of systems, from simple home heating systems to complex flight control mechanisms, can be modeled using matrices that describe the relationships between inputs, outputs, and the system’s state.

6. Network Analysis

Linear algebra is used to analyze and optimize networks, including internet traffic, social networks, and logistical networks. Google’s PageRank algorithm, which ranks web pages based on their links to and from other sites, is a famous example that uses the eigenvectors of a large matrix representing the web.
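The eigenvector idea behind link-based ranking can be illustrated with power iteration: repeatedly multiplying a rank vector by the link matrix until it stops changing. The sketch below uses a hypothetical three-page web invented for this example, and it omits the damping factor used by the actual PageRank algorithm, so it is a simplified illustration rather than Google's method.

```python
def power_iteration(M, steps=100):
    """Approximate the dominant eigenvector of M, normalised to sum to 1."""
    n = len(M)
    rank = [1.0 / n] * n
    for _ in range(steps):
        rank = [sum(M[i][j] * rank[j] for j in range(n)) for i in range(n)]
        total = sum(rank)
        rank = [r / total for r in rank]
    return rank


# Hypothetical web of three pages. Column j holds the share of page j's
# outgoing links that point to each page:
#   page 0 links to pages 1 and 2, page 1 links to page 2, page 2 links to page 0.
links = [
    [0.0, 0.0, 1.0],
    [0.5, 0.0, 0.0],
    [0.5, 1.0, 0.0],
]

ranks = power_iteration(links)   # approximately [0.4, 0.2, 0.4]
```

The resulting vector satisfies links · ranks = ranks, so it is an eigenvector of the link matrix with eigenvalue 1.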

7. Image and Signal Processing

Techniques from linear algebra are used to compress, enhance, and reconstruct images and signals. Singular value decomposition (SVD), for example, is a method to compress images by identifying and eliminating redundant information, significantly reducing the size of image files without substantially reducing quality.

8. Economics and Finance

Linear algebra models economic phenomena, optimizes financial portfolios, and evaluates risk. Matrices are used to represent and solve systems of linear equations that model supply and demand, investment portfolios, and market equilibrium.

9. Structural Engineering

In structural engineering, linear algebra is used to model structures, analyze their stability, and simulate how forces and loads are distributed throughout a structure. This helps engineers design buildings, bridges, and other structures that can withstand various stresses and strains.

10. Robotics

Robots are designed using linear algebra to control their movements and perform tasks with precision. Kinematics, which involves the movement of parts in space, relies on linear transformations to calculate the positions, rotations, and scaling of robot parts.

Linear Algebra Problems

Here are some solved questions on Linear Algebra to better your understanding of the concept:

Q1: Find the sum of the two vectors a = 2i + 3j + 5k and b = -i + 2j + k

Solution:

a + b = (2 - 1)i + (3 + 2)j + (5 + 1)k = i + 5j + 6k

Q2: Find the dot product of a = -2i + j + 3k and b = i – 2j + k

Solution:

The dot product is the sum of the products of the matching components, so the result is a scalar:

a · b = (-2)(1) + (1)(-2) + (3)(1)

= -2 – 2 + 3

= -1
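The vector sum from Q1 and the dot product from Q2 can be checked with a short sketch. Each vector is written as a list of its i, j, and k components, and the function names are chosen here for illustration. The dot product is a single number: the sum of the products of matching components.

```python
def vec_add(a, b):
    """Add two vectors component by component."""
    return [x + y for x, y in zip(a, b)]


def dot(a, b):
    """Dot product: sum of the products of matching components."""
    return sum(x * y for x, y in zip(a, b))


# Q1: (2i + 3j + 5k) + (-i + 2j + k)
q1 = vec_add([2, 3, 5], [-1, 2, 1])   # [1, 5, 6], i.e. i + 5j + 6k

# Q2: (-2i + j + 3k) . (i - 2j + k)
q2 = dot([-2, 1, 3], [1, -2, 1])      # -1
```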

Q3: Find the solution of x + 2y = 3 and 3x + y = 5

Solution:

From x + 2y = 3 we get x = 3 – 2y

Putting this value of x in the second equation we get

3(3 – 2y) + y = 5

⇒ 9 – 6y + y = 5

⇒ 9 – 5y = 5

⇒ -5y = -4

⇒ y = 4/5

Putting this value of y in the first equation we get

x + 2(4/5) = 3

⇒ x = 3 – 8/5

⇒ x = 7/5

Conclusion of Linear Algebra

Linear algebra is a branch of mathematics that deals with vector spaces and linear mappings between these spaces. Linear algebra serves as a foundational pillar in mathematics with wide-ranging applications across numerous fields. Its concepts, including vectors, matrices, eigenvalues, and eigenvectors, provide powerful tools for solving systems of equations, analyzing geometric transformations, and understanding fundamental properties of linear mappings.

The versatility of linear algebra is evident in its application in diverse areas such as physics, engineering, computer science, economics, and more.

Linear Algebra – FAQs

What is Linear Algebra?

Linear Algebra is a branch of mathematics focusing on the study of vectors, vector spaces, linear mappings, and systems of linear equations. It includes the analysis of lines, planes, and subspaces.

Why is Linear Algebra Important?

Linear Algebra is fundamental in almost all areas of mathematics and is widely applied in physics, engineering, computer science, economics, and more, due to its ability to systematically solve systems of linear equations.

What are Vectors and Vector Spaces?

Vectors are objects that can be added together and multiplied (“scaled”) by numbers, called scalars. Vector spaces are collections of vectors, which can be added together and multiplied by scalars, following certain rules.

What are Eigenvalues and Eigenvectors?

Eigenvalues and eigenvectors are concepts in Linear Algebra where, given a linear transformation represented by a matrix, an eigenvector does not change direction under that transformation, and the eigenvalue represents how the eigenvector was scaled during the transformation.

What is Singular Value Decomposition?

Singular Value Decomposition (SVD) is a method of decomposing a matrix into three simpler matrices, providing insights into the properties of the original matrix, such as its rank, range, and null space.

How Can Linear Algebra Solve Systems of Equations?

Linear Algebra solves systems of linear equations using methods like substitution, elimination, and matrix operations (e.g., inverse matrices), allowing for efficient solutions to complex problems.

What is the Difference Between Linear Dependence and Independence?

Vectors are linearly dependent if one vector can be expressed as a linear combination of the others. If no vector in the set can be written in this way, the vectors are linearly independent.

What is Linear Algebra in Real Life?

Linear algebra plays an important role in determining unknown quantities. It is used in everyday calculations involving speed, distance, or time. It is also used to project a three-dimensional view onto a two-dimensional plane, which is handled by linear maps, and to build ranking algorithms for search engines such as Google.

How is Linear Algebra used in Engineering?

In engineering, linear algebra is used to find unknown values and to define the parameters of functions, for example when modelling control systems or analysing structures.
