Software Testing Techniques

Last Updated: 06 Dec, 2023

Software testing techniques are methods used to design and execute tests to evaluate software applications. The following are common testing techniques:

- Manual testing – Involves manual inspection and testing of the software by a human tester.

- Automated testing – Involves using software tools to automate the testing process.

- Functional testing – Tests the functional requirements of the software to ensure they are met.

- Non-functional testing – Tests non-functional requirements such as performance, security, and usability.

- Unit testing – Tests individual units or components of the software to ensure they are functioning as intended.

- Integration testing – Tests the integration of different components of the software to ensure they work together as a system.

- System testing – Tests the complete software system to ensure it meets the specified requirements.

- Acceptance testing – Tests the software to ensure it meets the customer’s or end-user’s expectations.

- Regression testing – Tests the software after changes or modifications have been made to ensure the changes have not introduced new defects.

- Performance testing – Tests the software to determine its performance characteristics such as speed, scalability, and stability.

- Security testing – Tests the software to identify vulnerabilities and ensure it meets security requirements.

- Exploratory testing – A type of testing where the tester actively explores the software to find defects, without following a specific test plan.

- Boundary value testing – Tests the software at the boundaries of input values to identify any defects.

- Usability testing – Tests the software to evaluate its user-friendliness and ease of use.

- User acceptance testing (UAT) – Tests the software to determine if it meets the end-user’s needs and expectations.

Software testing techniques are the ways in which the application under test is checked against the functional or non-functional requirements gathered from the business. Each testing technique helps to find a specific type of defect. For example, techniques that find structural defects might not find defects in the end-to-end business flow. Hence, multiple testing techniques are applied in a testing project so that it concludes with acceptable quality.

Principles Of Testing

Below are the principles of software testing:

- All the tests should meet the customer’s requirements.

- Testing should be performed by an independent third party.

- Exhaustive testing is not possible, so we need the optimal amount of testing based on a risk assessment of the application.

- All the tests to be conducted should be planned before they are implemented.

- Testing follows the Pareto rule (80/20 rule), which states that 80% of errors come from 20% of program components.

- Start testing with small parts and extend it to large parts.

Types Of Software Testing Techniques

There are two main categories of software testing techniques:

- Static Testing Techniques are testing techniques that are used to find defects in an application under test without executing the code. Static testing is done to catch errors at an early stage of the development cycle, thus reducing the cost of fixing them.

- Dynamic Testing Techniques are testing techniques that are used to test the dynamic behaviour of the application under test, that is, by executing the code base. The main purpose of dynamic testing is to test the application with dynamic inputs, some of which are allowed by the requirements (positive testing) and some of which are not (negative testing).
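The difference between positive and negative testing can be sketched in a few lines. The `parse_age` function below is a hypothetical function under test, and its accepted range of 0 to 130 is an assumption made only for this illustration.

```python
def parse_age(text: str) -> int:
    """Hypothetical function under test: accept ages 0..130 given as digits."""
    if not text.strip().isdigit():
        raise ValueError(f"not a whole number: {text!r}")
    age = int(text)
    if age > 130:
        raise ValueError(f"age out of range: {age}")
    return age


def rejects(text: str) -> bool:
    """Return True if parse_age refuses the input (the negative-test expectation)."""
    try:
        parse_age(text)
    except ValueError:
        return True
    return False


# Positive tests: inputs the requirement allows must be accepted.
assert parse_age("42") == 42
assert parse_age(" 7 ") == 7

# Negative tests: inputs the requirement forbids must be rejected.
assert rejects("-5")
assert rejects("abc")
assert rejects("131")
```

Both kinds of test execute the code, which is what makes them dynamic; a static technique would inspect `parse_age` without calling it.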

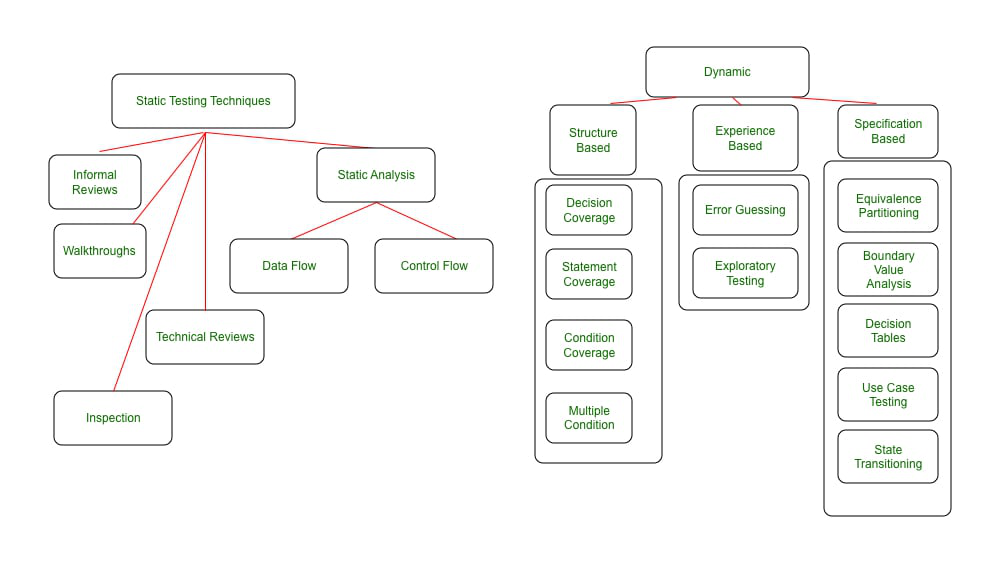

Each category has further types, as shown in the accompanying diagram. Each of them is explained in detail, with examples, in the sections that follow.

Testing Techniques

Static Testing Techniques

As explained earlier, Static Testing techniques are testing techniques that do not require the execution of a code base. Static Testing Techniques are divided into two major categories:

- Reviews: These range from purely informal peer reviews between two developers or testers on artifacts (code, test cases, test data) to formal inspections led by moderators, who can be internal or external to the organization.

  - Peer reviews: These are informal reviews between peers, generally conducted without any formal setup. For example, two developers or testers review each other’s artifacts, such as code or test cases.

  - Walkthroughs: In a walkthrough, the author of the work (code, test case, or document under review) walks the stakeholders through what they have done and the logic behind it, either to reach a common understanding or to gather feedback.

  - Technical review: This is a review meeting that focuses solely on the technical aspects of the document under review in order to reach a consensus. It has little or no focus on identifying defects against reference documentation. Technical experts such as architects or chief designers are required to do the review. It can vary from informal to fully formal.

  - Inspection: Inspection is the most formal category of review. The document under review is thoroughly prepared before the inspection meeting. Defects identified in the meeting are logged in the defect management tool and followed up until closure. Discussion of defects is avoided during the meeting itself and held in a separate discussion phase, which makes inspections a very effective form of review.

- Static Analysis: Static analysis is an examination of requirements, code, or design to identify defects that may or may not cause failures. For example, code can be reviewed for adherence to coding standards; not following a standard is a defect that may or may not cause a failure. Many static analysis tools are available, and they are mainly used by developers before or during component or integration testing. Even a compiler is a static analysis tool, as it points out incorrect syntax without executing the code. Static analysis looks at several aspects of code structure, namely data flow, control flow, and data structure.

  - Data flow: This is how the data trail is followed in a given program, that is, how data gets accessed and modified by the instructions in the program. Data flow analysis can identify defects such as a variable that is defined but never used.

  - Control flow: This is the structure of how program instructions get executed, i.e. conditions, iterations, or loops. Control flow analysis helps to identify defects such as dead code, i.e. code that never gets executed under any condition.

  - Data structure: This refers to the organization of data irrespective of code. The complexity of data structures adds to the complexity of code. Thus, it provides information on how to test the control flow and data flow in a given piece of code.
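As a rough illustration of data flow and control flow analysis, the sketch below uses Python's standard `ast` module to inspect a snippet without running it. The snippet in `SOURCE` and the helper names `unused_names` and `dead_lines` are made up for this example, and the checks are far simpler than what a real static analysis tool performs.

```python
import ast

SOURCE = '''
def total(prices):
    discount = 0.1
    result = sum(prices)
    return result
    print("done")
'''


def unused_names(tree: ast.AST) -> set[str]:
    """Data flow check: names that are assigned but never read."""
    stored, loaded = set(), set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Name):
            if isinstance(node.ctx, ast.Store):
                stored.add(node.id)
            elif isinstance(node.ctx, ast.Load):
                loaded.add(node.id)
    return stored - loaded


def dead_lines(tree: ast.AST) -> list[int]:
    """Control flow check: statements that directly follow a return in the same block."""
    lines = []
    for node in ast.walk(tree):
        body = getattr(node, "body", None)
        if not isinstance(body, list):
            continue
        for stmt, following in zip(body, body[1:]):
            if isinstance(stmt, ast.Return):
                lines.append(following.lineno)
    return lines


tree = ast.parse(SOURCE)   # parses the text; the code in SOURCE is never executed
print(unused_names(tree))  # {'discount'}
print(dead_lines(tree))    # [6]
```

The variable `discount` is defined but never used, and the `print` call on line 6 of the snippet can never be reached, so both defects are reported without the function ever being called.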

Dynamic Testing Techniques

Dynamic techniques are subdivided into three categories:

1. Structure-based Testing:

These are also called white-box techniques. Structure-based testing techniques focus on how the code is structured and design tests accordingly. To understand structure-based techniques, we first need to understand the concept of code coverage.

Code coverage is normally measured in component and integration testing. It establishes how much of the total code written is exercised by structural tests. One drawback of code coverage is that it says nothing about code that has not been written at all (a missed requirement). There are tools on the market that can help measure code coverage.

There are multiple ways to measure code coverage:

1. Statement coverage: Number of statements exercised / Total number of statements. For example, if a code segment has 10 statements and your test exercises only 5 of them, the statement coverage given by the test is 50%.

2. Decision coverage: Number of decision outcomes exercised / Total number of decision outcomes. For example, a code segment with 4 decisions (if conditions) has 8 decision outcomes, a true and a false outcome for each decision. If your tests exercise only 2 of those outcomes, decision coverage is 25%.

3. Condition/Multiple condition coverage: This aims to verify that each outcome of every logical condition in a program has been exercised. Multiple condition coverage goes further and requires every combination of condition outcomes within a decision to be exercised.
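The gap between statement coverage and decision coverage can be seen in a small hand-instrumented sketch. The `shipping_fee` function and its `seen` bookkeeping parameter are hypothetical; in practice a coverage tool records this information automatically instead of requiring changes to the code under test.

```python
def shipping_fee(weight_kg: float, express: bool, seen: set) -> float:
    """Hypothetical function under test with two decisions (four outcomes)."""
    fee = 5.0
    heavy = weight_kg > 10
    seen.add(("heavy", heavy))        # record the outcome of decision 1
    if heavy:
        fee += 4.0
    seen.add(("express", express))    # record the outcome of decision 2
    if express:
        fee *= 2
    return fee


ALL_OUTCOMES = {("heavy", True), ("heavy", False),
                ("express", True), ("express", False)}


def decision_coverage(test_inputs) -> float:
    """Run the tests and return outcomes exercised / total outcomes."""
    seen = set()
    for weight_kg, express in test_inputs:
        shipping_fee(weight_kg, express, seen)
    return len(seen & ALL_OUTCOMES) / len(ALL_OUTCOMES)


print(decision_coverage([(12, True)]))              # 0.5
print(decision_coverage([(12, True), (3, False)]))  # 1.0
```

The single test `(12, True)` executes every statement of `shipping_fee`, so statement coverage is already 100%, yet only the true outcome of each decision is exercised and decision coverage is 50%. A second test that takes both false outcomes brings decision coverage to 100%.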

2. Experience-Based Techniques:

These are techniques for carrying out testing activities with the help of experience gained over the years. Domain skill and background are major contributors to this type of testing. These techniques are used mainly for UAT and business user testing. They work on top of structured techniques such as specification-based and structure-based testing, and they complement them. Here are the types of experience-based techniques:

1. Error guessing: This is used by testers who have very good experience either in testing or with the application under test, and who may therefore know where a system is likely to have a weakness. It is not an effective technique when used stand-alone, but it is helpful when used along with structured techniques.

2. Exploratory testing: This is hands-on testing where the aim is to achieve maximum execution coverage with minimal planning. Test design and execution are carried out in parallel, without documenting the test design steps. The key aspect of this type of testing is the tester’s learning about the strengths and weaknesses of the application under test. Like error guessing, it needs to be used along with other formal techniques to be useful.
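Error guessing is driven by experience rather than by a formula, but the inputs a tester guesses can still be written down and replayed. The sketch below assumes a hypothetical `average` function under test; the list of guesses is only an example of the kind of inputs an experienced tester might try.

```python
def average(values):
    """Hypothetical function under test: mean of a list of numbers."""
    if not values:
        raise ValueError("average of an empty list is undefined")
    return sum(values) / len(values)


# Inputs a tester might guess are likely to break the code.
GUESSES = {
    "empty list": [],
    "single value": [7],
    "all zeros": [0, 0, 0],
    "negative values": [-1, -3],
    "very large values": [10**18, 10**18],
}


def run_guesses():
    """Try each guessed input and report the result or the error raised."""
    report = {}
    for label, values in GUESSES.items():
        try:
            report[label] = average(values)
        except Exception as exc:  # record the failure instead of stopping
            report[label] = f"{type(exc).__name__}: {exc}"
    return report


for label, outcome in run_guesses().items():
    print(f"{label}: {outcome}")
```

Recording the guesses this way lets them be rerun later alongside the tests produced by structured techniques.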

3. Specification-based Techniques:

This includes both functional and non-functional techniques (i.e. quality characteristics). It means creating and executing tests based on functional or non-functional specifications from the business. Its focus is on identifying defects corresponding to given specifications. Here are the types of specification-based techniques:

1. Equivalence partitioning: This is generally used together with boundary value analysis and can be applied at any level of testing. The idea is to partition the input range of data into valid and invalid sections such that all values within one partition are considered “equivalent”. Once the partitions are identified, we only need to test with any one value from a given partition, assuming that all values in the partition will behave the same. For example, if an input field takes values between 1 and 999, then all values between 1 and 999 will yield similar results, and we need not test each value to call the testing complete.

2. Boundary Value Analysis (BVA): This analysis tests the boundaries of the range, both valid and invalid. In the example above, 0, 1, 999, and 1000 are boundaries that can be tested. The reasoning behind this kind of testing is that, more often than not, boundaries are not handled gracefully in the code.
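The 1 to 999 example can be turned into a short sketch that uses both techniques together. The `accepts` validator is a hypothetical stand-in for the input field, and the representative values chosen for each partition are arbitrary.

```python
LOW, HIGH = 1, 999   # valid range from the example above


def accepts(value: int) -> bool:
    """Hypothetical validator for the input field."""
    return LOW <= value <= HIGH


# Equivalence partitioning: one representative value per partition.
PARTITIONS = {
    "below range (invalid)": (-50, False),
    "within range (valid)": (500, True),
    "above range (invalid)": (5000, False),
}

# Boundary value analysis: values on and just outside each edge.
BOUNDARIES = {
    LOW - 1: False,   # 0
    LOW: True,        # 1
    HIGH: True,       # 999
    HIGH + 1: False,  # 1000
}

for name, (value, expected) in PARTITIONS.items():
    assert accepts(value) == expected, name

for value, expected in BOUNDARIES.items():
    assert accepts(value) == expected, value
```

Seven test values cover three partitions and four boundaries, instead of testing every value in and around the range.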

3. Decision Tables: These are a good way to test combinations of inputs. They are also called cause-effect tables. In plain terms, the conditions that apply to the application segment under test are laid out as a table, and the outcome for each combination is identified, which yields an effective set of tests.

- Care should be taken that there are not too many combinations; otherwise the table becomes too big to be effective.

- Take the example of a credit card that is issued only if both the credit score and the salary limit are met. This can be illustrated in the decision table below:

Decision Table
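One way to sketch the credit card example is to write each rule of the decision table as a row and check the outcome for every combination. The function `issue_card` and its parameter names are hypothetical; the only rule taken from the text is that both conditions must be met.

```python
def issue_card(credit_score_ok: bool, salary_ok: bool) -> bool:
    """Hypothetical rule: issue the card only if both conditions are met."""
    return credit_score_ok and salary_ok


# Each row is one rule: (credit score met, salary limit met) -> card issued
DECISION_TABLE = [
    ((True, True), True),
    ((True, False), False),
    ((False, True), False),
    ((False, False), False),
]

for (credit_score_ok, salary_ok), expected in DECISION_TABLE:
    assert issue_card(credit_score_ok, salary_ok) == expected
```

With two conditions there are four rules. Each additional condition doubles the number of rows, which is why large tables quickly become impractical.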

4. Use case-based Testing: This technique helps us to identify test cases that exercise the system as a whole, transaction by transaction, the way an actual user (actor) would. Use cases are sequences of steps that describe the interaction between the actor and the system. They are always defined in the language of the actor, not the system. This testing is most effective in identifying integration defects. A use case also defines any preconditions and postconditions of the process flow. For example, an ATM can be tested with use cases:

Use case-based Testing

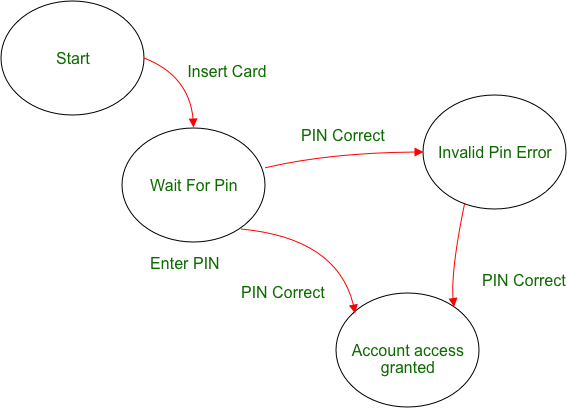

5. State Transition Testing: This is used where the application under test, or a part of it, can be treated as a finite state machine (FSM). Continuing the simplified ATM example above, the ATM flow has a finite number of states and hence can be tested with the state transition technique. There are four basic things to consider:

- States a system can achieve

- Events that cause the change of state

- The transition from one state to another

- Outcomes of change of state

A state-event pair table can be created to derive test conditions, both positive and negative.

State Transition
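A state-event pair table for a simplified ATM might be sketched as a dictionary. The states, events, and transitions below are assumptions made for illustration and do not come from a real ATM specification.

```python
# Hypothetical, simplified ATM: (current state, event) -> next state
TRANSITIONS = {
    ("idle", "insert_card"): "awaiting_pin",
    ("awaiting_pin", "valid_pin"): "menu",
    ("awaiting_pin", "invalid_pin"): "awaiting_pin",
    ("awaiting_pin", "cancel"): "idle",
    ("menu", "withdraw"): "dispensing",
    ("menu", "cancel"): "idle",
    ("dispensing", "cash_taken"): "idle",
}


def next_state(state: str, event: str) -> str:
    """Return the next state, or raise if the event is not allowed in this state."""
    try:
        return TRANSITIONS[(state, event)]
    except KeyError:
        raise ValueError(f"event {event!r} not allowed in state {state!r}") from None


def run(events, state="idle"):
    """Feed a sequence of events to the machine and return the final state."""
    for event in events:
        state = next_state(state, event)
    return state


# Positive test: a valid path through the machine.
assert run(["insert_card", "valid_pin", "withdraw", "cash_taken"]) == "idle"

# Negative test: withdrawing before entering a PIN must be rejected.
try:
    next_state("awaiting_pin", "withdraw")
except ValueError:
    pass
else:
    raise AssertionError("invalid transition was accepted")
```

Every pair listed in the table is a candidate positive test, and every state-event pair missing from it is a candidate negative test.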

Advantages of software testing techniques:

- Improves software quality and reliability – By using different testing techniques, software developers can identify and fix defects early in the development process, reducing the risk of failure or unexpected behaviour in the final product.

- Enhances user experience – Techniques like usability testing can help to identify usability issues and improve the overall user experience.

- Increases confidence – By testing the software, developers and stakeholders can have confidence that the software meets the requirements and works as intended.

- Facilitates maintenance – By identifying and fixing defects early, testing makes it easier to maintain and update the software.

- Reduces costs – Finding and fixing defects early in the development process is less expensive than fixing them later in the life cycle.

Disadvantages of software testing techniques:

- Time-consuming – Testing can take a significant amount of time, particularly if thorough testing is performed.

- Resource-intensive – Testing requires specialized skills and resources, which can be expensive.

- Limited coverage – Testing can only reveal defects in the behaviour that the test cases actually exercise, so other defects can be missed.

- Unpredictable results – The outcome of testing is not always predictable, and defects can be hard to replicate and fix.

- Delivery delays – Testing can delay the delivery of the software if testing takes longer than expected or if significant defects are identified.

- Automated testing limitations – Automated testing tools may have limitations, such as difficulty in testing certain aspects of the software, and may require significant maintenance and updates.
