Word Embedding is an important term in Natural Language Processing and a significant breakthrough in deep learning that solved many problems. In this article, we’ll be looking into what pre-trained word embeddings in NLP are.

Word Embeddings

Word embedding is an approach in Natural Language Processing in which raw text is converted into numerical vectors. Because deep learning models accept only numerical input, this step is essential for processing raw text. Each word is represented by a real-valued vector, typically with tens to hundreds of dimensions, that captures the word's semantic meaning and the contexts in which it appears.

There are several methods for generating word representations, such as BoW (Bag of Words), TF-IDF, GloVe and BERT embeddings. The earlier methods, BoW and TF-IDF, only count words and do not capture semantic relationships or context. More recent ones such as BERT, a pre-trained model that produces contextual embeddings, capture the context of a word as well as its semantic relationships to the other words in the sentence.
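To make the limitation of count-based methods concrete, here is a minimal bag-of-words sketch in plain Python. The two example sentences and the helper name `bag_of_words` are illustrative assumptions, not part of any library.

```python
from collections import Counter


def bag_of_words(sentences):
    """Return (vocabulary, count vectors) for a list of sentences."""
    tokenized = [s.lower().split() for s in sentences]
    # One column per distinct word, in alphabetical order
    vocab = sorted({tok for sent in tokenized for tok in sent})
    vectors = []
    for sent in tokenized:
        counts = Counter(sent)
        vectors.append([counts[word] for word in vocab])
    return vocab, vectors


vocab, vectors = bag_of_words(["the movie was good", "the film was great"])
print(vocab)    # ['film', 'good', 'great', 'movie', 'the', 'was']
print(vectors)  # [[0, 1, 0, 1, 1, 1], [1, 0, 1, 0, 1, 1]]
```

Each word simply gets its own column, so "good" and "great" (or "movie" and "film") are as unrelated as any other pair of words. Nothing in the counts says that they mean similar things.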

Challenges in building word embedding from scratch

Training word embeddings from scratch is possible, but it is challenging because of the large number of trainable parameters and the sparsity of training data. These models need to be trained on large datasets with a rich vocabulary, and the large number of parameters makes training slow. So it is quite hard for an individual to train a good word embedding model.
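A quick back-of-the-envelope calculation shows why the parameter count gets large. The figures below are those of the published Google News Word2Vec vectors (about 3 million words and phrases, 300 dimensions each); the variable names are only illustrative.

```python
vocab_size = 3_000_000   # words and phrases in the Google News vectors
embedding_dim = 300      # dimensions per vector

# One embedding table holds one vector per vocabulary entry.
table_params = vocab_size * embedding_dim
print(f"{table_params:,} parameters")       # 900,000,000 parameters

# Stored as 32-bit floats, each parameter takes 4 bytes.
print(f"{table_params * 4 / 1e9:.1f} GB")   # 3.6 GB
```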

Pre Trained Word Embeddings

There is a solution to the above problem: using pre-trained word embeddings. Pre-trained word embeddings are trained on large datasets and capture both the syntactic and the semantic meaning of words. This is a form of transfer learning, in which you take a model that has been trained on a large dataset and reuse it for your own, similar task.

There are two broad classifications of pre-trained word embeddings: word-level and character-level. We’ll be looking into two types of word-level embeddings, Word2Vec and GloVe, and how they can be used to generate embeddings.

Word2Vec

Word2Vec is one of the most popular pre-trained word embeddings, developed by Google. The published model is trained on the Google News dataset, which is an extensive dataset. As the name suggests, it represents each word as a vector, i.e. a list of real numbers. The vectors are learned such that they reflect the semantic relations between words.

A popular example of how semantic relation is made is the king queen example:

King - Man + Woman ~ Queen
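The arithmetic can be tried out on a handful of hand-made vectors. This is only a toy sketch: the three-dimensional values below are made up for illustration (real Word2Vec vectors are learned and have hundreds of dimensions), and the input words are excluded from the candidates, as gensim's `most_similar` also does.

```python
import numpy as np

# Hypothetical toy vectors; axes are roughly [royalty, male, female]
vectors = {
    "king":  np.array([0.9, 0.8, 0.1]),
    "queen": np.array([0.9, 0.1, 0.8]),
    "man":   np.array([0.1, 0.9, 0.1]),
    "woman": np.array([0.1, 0.1, 0.9]),
    "apple": np.array([0.0, 0.2, 0.2]),
}


def cosine(a, b):
    """Cosine similarity between two vectors."""
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))


# King - Man + Woman
query = vectors["king"] - vectors["man"] + vectors["woman"]

# Rank the remaining words by similarity to the query vector
candidates = {w: v for w, v in vectors.items()
              if w not in {"king", "man", "woman"}}
best = max(candidates, key=lambda w: cosine(query, candidates[w]))
print(best)  # queen
```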

Word2Vec uses a shallow feed-forward neural network and comes in two architectures: Continuous Bag-of-Words (CBOW) and Skip-gram. The CBOW model learns to predict the target word from its adjacent words, whereas the Skip-gram model learns to predict the adjacent words from the target word. The two are mirror images of each other.

Firstly, the size of the context window is defined. The context window is a sliding window that runs through the whole text one word at a time. Its size refers to the number of words on each side of the focus word. E.g., if the size of the context window is set to 2, it includes 2 words to the right and 2 words to the left of the focus word.

The focus word is the target word for which we want to create the embedding / vector representation. It sits in the middle of the context window. The neighbouring words are the words that appear in the context window around it. These words help in capturing the context in which the focus word is used. Let’s understand this with the help of an example.

Suppose we have a sentence – “He poured himself a cup of coffee”. The target word here is “himself” and the context window size is 2.

Continuous Bag-Of-Words –

input = [“He”, “poured”, “a”, “cup”]

output = [“himself”]

Skip-gram model –

input = [“himself”]

output = [“He”, “poured”, “a”, “cup”]
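The example above can be reproduced with a few lines of Python. This is a minimal sketch of how a sliding context window yields training examples; the function name `training_examples` is made up for illustration, and real implementations add steps such as subsampling and negative sampling that are left out here.

```python
def training_examples(tokens, window=2):
    """Yield (context_words, target_word) for every position in tokens."""
    for i, target in enumerate(tokens):
        left = tokens[max(0, i - window):i]        # up to `window` words before
        right = tokens[i + 1:i + 1 + window]       # up to `window` words after
        yield left + right, target


tokens = "He poured himself a cup of coffee".split()
examples = list(training_examples(tokens, window=2))

context, target = examples[2]   # index 2 is "himself"
print("CBOW input:", context, "-> output:", target)
print("Skip-gram pairs:", [(target, c) for c in context])
```

CBOW uses the whole context to predict the target in one step, while Skip-gram is usually trained on the individual (target, context word) pairs printed in the last line.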

This can be used to generate high-quality word embeddings. You can learn more about these word representations from [https://arxiv.org/pdf/1301.3781.pdf]

Code

To generate word embeddings using the pre-trained Word2Vec embeddings, first download the model bin file from here. Then import the necessary libraries, such as gensim, which will be used for initialising the pre-trained model from the bin file.

Python

from gensim.models import KeyedVectors

# Path to the downloaded Google News vectors
pretrained_model_path = 'GoogleNews-vectors-negative300.bin.gz'

# binary=True because the file is stored in the word2vec binary format
word_vectors = KeyedVectors.load_word2vec_format(pretrained_model_path, binary=True)

# Cosine similarity between two unrelated words
word1 = "early"
word2 = "seats"
similarity1 = word_vectors.similarity(word1, word2)
print(similarity1)

# Cosine similarity between two related words
word3 = "king"
word4 = "man"
similarity2 = word_vectors.similarity(word3, word4)
print(similarity2)

Output:

0.035838068

0.2294267

The above code initialises the Word2Vec model using the gensim library and calculates the cosine similarity between pairs of words. Cosine similarity ranges from -1 to 1. As you can see, the second value is noticeably larger than the first, which means that “king” and “man” are more similar to each other than “early” and “seats” are.
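For reference, the cosine similarity between two word vectors is given by the standard formula below. The symbols u, v and n (the vector dimension, 300 for this model) are notation introduced here for illustration.

```latex
\[
\operatorname{sim}(u, v) = \cos\theta
  = \frac{u \cdot v}{\lVert u \rVert \, \lVert v \rVert}
  = \frac{\sum_{i=1}^{n} u_i v_i}
         {\sqrt{\sum_{i=1}^{n} u_i^{2}} \, \sqrt{\sum_{i=1}^{n} v_i^{2}}}
\]
```

A value of 1 means the two vectors point in the same direction, 0 means they are orthogonal, and -1 means they point in opposite directions.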

We can also find the words that are most similar to a given word:

Python3

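# most_similar returns the 10 nearest neighbours by default (topn=10).
# Lookups are case-sensitive: 'King' and 'king' are separate entries.
king = word_vectors.most_similar('King')
print(f'Top 10 most similar words to "King" are : {king}')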

Output:

Top 10 most similar words to "King" are : [('Jackson', 0.5326348543167114),

('Prince', 0.5306329727172852),

('Tupou_V.', 0.5292826294898987),

('KIng', 0.5227501392364502),

('e_mail_robert.king_@', 0.5173623561859131),

('king', 0.5158917903900146),

('Queen', 0.5157250165939331),

('Geoffrey_Rush_Exit', 0.49920955300331116),

('prosecutor_Dan_Satterberg', 0.49850785732269287),

('NECN_Alison', 0.49128594994544983)]

GloVe

Developed at Stanford, GloVe stands for Global Vectors for Word Representation. It is a popular word embedding model based on the idea of deriving relationships between words from corpus statistics. It is a count-based model that employs a co-occurrence matrix. A co-occurrence matrix records how often pairs of words occur together across the whole corpus: each entry is the count of how many times one word appears in the context of another.
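A co-occurrence matrix can be sketched in a few lines of plain Python. The snippet below is an illustrative assumption rather than GloVe's actual implementation: it uses a hypothetical one-sentence corpus, a symmetric window of 2, and counts every neighbour equally.

```python
from collections import defaultdict


def cooccurrence_counts(sentences, window=2):
    """Count how often each word appears within `window` tokens of another."""
    counts = defaultdict(lambda: defaultdict(int))
    for sentence in sentences:
        tokens = sentence.lower().split()
        for i, word in enumerate(tokens):
            lo = max(0, i - window)
            hi = min(len(tokens), i + window + 1)
            for j in range(lo, hi):
                if j != i:                       # skip the word itself
                    counts[word][tokens[j]] += 1
    return counts


counts = cooccurrence_counts(["cat chases mouse"], window=2)
print(dict(counts["cat"]))   # {'chases': 1, 'mouse': 1}
```

Each row of the matrix (here `counts["cat"]`) lists the neighbours of one word together with how often they were seen.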

GloVe learns a vector space in which the distance between words is linked to their semantic similarity. It combines properties of global matrix factorisation and local context window methods. The model is trained on global word-word co-occurrence data from a corpus, and the resulting representations exhibit linear substructures in the vector space.

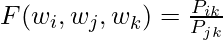

GloVe calculates the co-occurrence probabilities for each word pair. It divides the co-occurrence counts by the total number of co-occurrences for each word:

For example, the co-occurrence probability of “cat” and “mouse” is calculated as: Co-occurrence Probability(“cat”, “mouse”) = Count(“cat” and “mouse”) / Total Co-occurrences(“cat”)

In this case:

Count("cat" and "mouse") = 1

Total Co-occurrences("cat") = 2 (with "chases" and "mouse")

So, Co-occurrence Probability("cat", "mouse") = 1 / 2 = 0.5

GloVe Model Building

Firstly, download the GloVe 6B embeddings from this site. Then unzip the archive and place the file in the same folder as your code. There are several variants of the 6B model, but we’ll be using glove.6B.50d.

Python3

from tensorflow.keras.preprocessing.text import Tokenizer
import numpy as np

# Small vocabulary to look up. This is a set, so the order in which the
# tokenizer sees the words (and hence their indices) is not fixed.
x = {'processing', 'the', 'world', 'prime',
     'natural', 'language'}

# Build the word -> integer index mapping (indices start at 1; 0 is reserved)
tokenizer = Tokenizer()
tokenizer.fit_on_texts(x)

print("Dictionary is = ", tokenizer.word_index)


def embedding_vocab(filepath, word_index, embedding_dim):
    # +1 because index 0 is reserved and never assigned to a word
    vocab_size = len(word_index) + 1
    embedding_matrix_vocab = np.zeros((vocab_size, embedding_dim))

    # Each line of the GloVe file is: word value_1 value_2 ... value_d
    with open(filepath, encoding="utf8") as f:
        for line in f:
            word, *vector = line.split()
            if word in word_index:
                idx = word_index[word]
                embedding_matrix_vocab[idx] = np.array(
                    vector, dtype=np.float32)[:embedding_dim]

    return embedding_matrix_vocab


embedding_dim = 50
embedding_matrix_vocab = embedding_vocab(
    'glove.6B.50d.txt', tokenizer.word_index, embedding_dim)

print("Dense vector for first word is => ", embedding_matrix_vocab[1])

Output:

Dictionary is = {'the': 1, 'world': 2, 'processing': 3, 'prime': 4, 'language': 5, 'natural': 6}

Dense vector for first word is => [ 4.18000013e-01 2.49679998e-01 -4.12420005e-01 1.21699996e-01
3.45270008e-01 -4.44569997e-02 -4.96879995e-01 -1.78619996e-01
-6.60229998e-04 -6.56599998e-01 2.78430015e-01 -1.47670001e-01
-5.56770027e-01 1.46579996e-01 -9.50950012e-03 1.16579998e-02
1.02040000e-01 -1.27920002e-01 -8.44299972e-01 -1.21809997e-01
-1.68009996e-02 -3.32789987e-01 -1.55200005e-01 -2.31309995e-01
-1.91809997e-01 -1.88230002e+00 -7.67459989e-01 9.90509987e-02
-4.21249986e-01 -1.95260003e-01 4.00710011e+00 1.85939997e-01
-5.22870004e-01 -3.16810012e-01 5.92130003e-04 7.44489999e-03
1.77780002e-01 -1.58969998e-01 1.20409997e-02 -5.42230010e-02
-2.98709989e-01 -1.57490000e-01 -3.47579986e-01 -4.56370004e-02
-4.42510009e-01 1.87849998e-01 2.78489990e-03 -1.84110001e-01
-1.15139998e-01 -7.85809994e-01]
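Once the matrix is built, turning a sentence into vectors is a plain row lookup. The sketch below assumes a random stand-in for the matrix (so that it runs without the GloVe file) and a hypothetical padded sequence that uses the word indices from the dictionary printed above.

```python
import numpy as np

# Stand-in for embedding_matrix_vocab: 7 rows (index 0 is reserved for padding)
# and 50 columns. Random values are used only so the sketch is self-contained.
rng = np.random.default_rng(0)
embedding_matrix = rng.normal(size=(7, 50)).astype(np.float32)
embedding_matrix[0] = 0.0   # the padding row stays all zeros

# "natural language processing" as indices (6, 5, 3), padded with 0 to length 5
sequence = np.array([6, 5, 3, 0, 0])

# Indexing the matrix with the sequence fetches one row per token
sentence_vectors = embedding_matrix[sequence]
print(sentence_vectors.shape)   # (5, 50)
```

The same lookup is what an embedding layer performs internally when it is initialised with a pre-trained matrix.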

BERT Embeddings

Another important pre-trained, transformer-based model is BERT (Bidirectional Encoder Representations from Transformers), developed by Google. It can be used to extract high-quality language features from raw text, or it can be fine-tuned on your own data to perform specific tasks.

BERT’s architecture consists only of encoders. Its input is a sequence of tokens, and each token is represented as the sum of three embeddings: token embeddings, segment embeddings and positional embeddings. The main idea behind its pre-training is to mask a few words in a sentence and task the model with predicting the masked words.
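The masking idea can be illustrated without any model. The following is a simplified sketch, not BERT's exact procedure: BERT selects about 15% of the tokens and replaces most, but not all, of them with [MASK], whereas this sketch always substitutes [MASK]. The function name `mask_tokens` and its parameters are made up for illustration.

```python
import random


def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Randomly replace tokens with [MASK]; return the masked tokens and labels."""
    rng = random.Random(seed)
    masked, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            masked.append("[MASK]")
            labels.append(tok)     # the model has to recover this token
        else:
            masked.append(tok)
            labels.append(None)    # position is not scored
    return masked, labels


tokens = "he poured himself a cup of coffee".split()
# A higher rate than 15% is used here so that a short sentence is likely to get a mask
masked, labels = mask_tokens(tokens, mask_prob=0.3)
print(masked)
print(labels)
```

During pre-training, the model sees `masked` as input and is penalised only at the positions where `labels` is not `None`.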

BERT

Firstly, install the transformers library, as we’ll be using PyTorch and transformers for the implementation.

!pip install transformers

Python

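import torch
from transformers import BertTokenizer

# Load the WordPiece tokenizer that matches the pre-trained model
tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')

text = "This blog post explains pre trained word embeddings"

# BERT expects a [CLS] token at the start and a [SEP] token at the end
marked_text = "[CLS] " + text + " [SEP]"

# Split into word pieces, then map each piece to its vocabulary id
tokenized_text = tokenizer.tokenize(marked_text)
indexed_tokens = tokenizer.convert_tokens_to_ids(tokenized_text)

print(tokenized_text)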

Output:

['[CLS]', 'this', 'blog', 'post', 'explains', 'pre', 'trained', 'word', 'em', '##bed', '##ding', '##s', '[SEP]']

Python

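from transformers import BertModel

# Segment ids say which sentence a token belongs to; a single sentence uses 0 throughout
segments_ids = [0] * len(tokenized_text)

# Convert the inputs to PyTorch tensors with a batch dimension of 1
tokens_tensor = torch.tensor([indexed_tokens])
segments_tensors = torch.tensor([segments_ids])

# output_hidden_states=True makes the model return the output of every layer
model = BertModel.from_pretrained('bert-base-uncased',
                                  output_hidden_states=True)

# Evaluation mode switches off dropout
model.eval()

with torch.no_grad():
    # Pass segment ids by keyword: the second positional argument is attention_mask
    outputs = model(tokens_tensor, token_type_ids=segments_tensors)
    # Third element of the output holds the hidden states of all layers
    hidden_states = outputs[2]

# Concatenate the last four layers along the feature dimension
word_embedding = torch.cat([hidden_states[i] for i in [-1,-2,-3,-4]], dim=-1)
print(word_embedding)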

Output:

tensor([[[ 0.1508, -0.0126, -0.0503, ..., 0.0346, 0.4191, 0.2692],

[-0.2833, -0.4473, -0.1290, ..., 0.1606, 0.5159, 0.2478],

[ 0.6687, -0.4654, 0.3076, ..., 0.2321, -0.0784, -0.7501],

...,

[-0.1049, 0.6510, -0.3414, ..., 0.0136, 0.3559, 0.0941],

[-0.2663, -0.0465, -0.2842, ..., -0.4947, 0.0606, 0.1420],

[ 0.8174, 0.2086, -0.4486, ..., -0.0698, -0.0547, -0.0229]]])
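To see what shape the concatenation produces, here is a small shape-only sketch. It uses NumPy with random stand-in values instead of the real model, so it runs without downloading BERT. The sizes are those of bert-base (hidden size 768; embedding output plus 12 encoder layers). Averaging the word pieces of "embeddings" is one common pooling choice, shown here as an assumption rather than the only option.

```python
import numpy as np

num_tokens, hidden_size = 13, 768   # 13 word pieces in the example sentence
rng = np.random.default_rng(0)

# Stand-in for `hidden_states`: 13 arrays of shape (batch, tokens, hidden)
hidden_states = [rng.normal(size=(1, num_tokens, hidden_size)) for _ in range(13)]

# Same operation as torch.cat(..., dim=-1) in the code above
word_embedding = np.concatenate(
    [hidden_states[i] for i in (-1, -2, -3, -4)], axis=-1)
print(word_embedding.shape)         # (1, 13, 3072)

# "embeddings" was split into 'em', '##bed', '##ding', '##s' (positions 8 to 11);
# averaging those rows gives a single vector for the whole word.
embeddings_vector = word_embedding[0, 8:12].mean(axis=0)
print(embeddings_vector.shape)      # (3072,)
```

Each token therefore ends up with a 4 x 768 = 3072-dimensional vector.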

Conclusion

Generating word embeddings is a crucial technique for solving natural language problems, and pre-trained embeddings offer a powerful solution to the complexity of generating word embeddings from scratch.
