Advantages and Disadvantages of Logistic Regression

Last Updated: 10 Jan, 2023

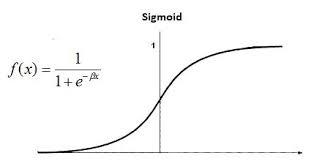

Logistic regression is a classification algorithm that estimates the probability that an event succeeds or fails. It is used when the dependent variable is binary (0/1, True/False, Yes/No). It assigns data to discrete classes by learning the relationship from a labelled dataset: it first fits a linear combination of the input features and then applies a non-linearity, the sigmoid function, to that linear score.
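The two steps described above (a linear score followed by the sigmoid) can be sketched in a few lines of plain Python. This is only an illustration: the weights, bias, and function names below are hypothetical values chosen for the example, not the output of any fitted model.

```python
import math


def sigmoid(z: float) -> float:
    """Map any real-valued score to a probability in (0, 1)."""
    # Branch on the sign of z so math.exp never receives a large positive argument.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def predict_proba(features, weights, bias):
    """Linear step (bias + w . x) followed by the sigmoid."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)


def predict_label(features, weights, bias, threshold=0.5):
    """Turn the probability into a binary class using a cutoff."""
    return 1 if predict_proba(features, weights, bias) >= threshold else 0


# Hypothetical coefficients, picked only for illustration.
weights = [0.8, -1.2]
bias = 0.1

sample = [2.0, 0.5]
probability = predict_proba(sample, weights, bias)
label = predict_label(sample, weights, bias)
print(f"P(y=1) = {probability:.3f}, predicted class = {label}")
```

The 0.5 threshold is a common default rather than a requirement; a different cutoff can be used when false positives and false negatives have different costs.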

Logistic regression is also known as binomial logistic regression. It is based on the sigmoid function, whose input can be any real number from -infinity to +infinity and whose output is a probability between 0 and 1. Let’s discuss some advantages and disadvantages of logistic regression.

| Advantages | Disadvantages |
| --- | --- |
| Logistic regression is easy to implement and interpret, and it is efficient to train. | If the number of observations is smaller than the number of features, logistic regression should not be used, because it is likely to overfit. |
| It makes no assumptions about the distributions of classes in feature space. | It constructs linear decision boundaries. |
| It extends easily to multiple classes (multinomial regression) and gives a natural probabilistic view of class predictions. | Its major limitation is the assumption of a linear relationship between the independent variables and the log odds of the dependent variable. |
| It indicates not only how strongly a predictor is associated with the outcome (coefficient size) but also the direction of that association (positive or negative). | It can only predict discrete outcomes, so the dependent variable is restricted to a discrete set of values. |
| It is very fast at classifying unknown records. | Non-linear problems can’t be solved with logistic regression because it has a linear decision surface. Linearly separable data is rarely found in real-world scenarios. |
| It gives good accuracy on many simple datasets and performs well when the dataset is linearly separable. | It requires little or no multicollinearity between the independent variables. |
| Its model coefficients can be interpreted as indicators of feature importance. | It is hard to capture complex relationships with logistic regression. More powerful algorithms such as neural networks can often outperform it on such problems. |
| It is less prone to overfitting, but it can overfit on high-dimensional datasets. Regularization (L1 and L2) can be used to reduce overfitting in these scenarios. | In linear regression, the independent and dependent variables are related linearly. Logistic regression instead requires the independent variables to be linearly related to the log odds, log(p/(1-p)). |
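
Several points from the table (the sign of a coefficient, L2 regularization, and linearity in the log odds) can be illustrated with a small sketch in plain Python. It is a minimal example under stated assumptions: the toy dataset is made up, the function names `fit_logistic` and `log_odds` are hypothetical, and plain batch gradient descent stands in for whatever optimizer a real library would use.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def fit_logistic(X, y, l2=0.0, lr=0.1, epochs=2000):
    """Batch gradient descent on the mean log loss plus (l2 / 2) * ||w||^2."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            # Prediction error for one sample: predicted probability minus label.
            err = sigmoid(b + sum(wj * xj for wj, xj in zip(w, xi))) - yi
            for j in range(d):
                grad_w[j] += err * xi[j]
            grad_b += err
        for j in range(d):
            # The L2 penalty adds l2 * w[j] to the gradient; the bias is not penalized.
            w[j] -= lr * (grad_w[j] / n + l2 * w[j])
        b -= lr * grad_b / n
    return w, b


def log_odds(p):
    """log(p / (1 - p)), the quantity that is linear in the features."""
    return math.log(p / (1.0 - p))


# Hypothetical toy data: one centered feature, label 1 when the feature is positive.
X = [[-1.75], [-1.25], [-0.75], [-0.25], [0.25], [0.75], [1.25], [1.75]]
y = [0, 0, 0, 0, 1, 1, 1, 1]

w_plain, b_plain = fit_logistic(X, y, l2=0.0)  # no regularization
w_reg, b_reg = fit_logistic(X, y, l2=0.1)      # L2-regularized

# A positive coefficient means the odds of class 1 rise with the feature;
# exp(w) is the multiplicative change in the odds per unit increase.
odds_ratio = math.exp(w_reg[0])

# The log odds of a predicted probability recover the linear score b + w * x.
x_new = 0.75
p_new = sigmoid(b_reg + w_reg[0] * x_new)

print("unregularized weight:", w_plain[0])
print("L2-regularized weight:", w_reg[0])
print("odds ratio per unit of x:", odds_ratio)
print("log odds at x_new:", log_odds(p_new))
```

On this linearly separable toy data the unregularized weight keeps growing as training continues, while the L2 penalty holds the regularized weight to a smaller value. That shrinkage is the mechanism by which regularization limits overfitting.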
