PLY (Python Lex-Yacc) – An Introduction

Last Updated: 16 Feb, 2022

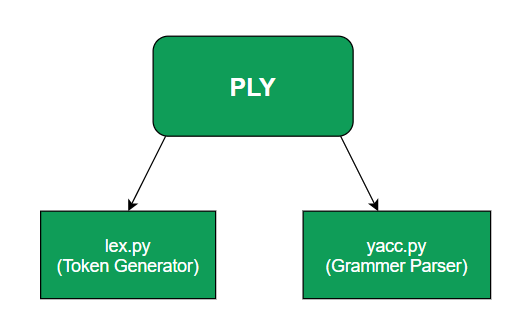

Most of us have heard of lex, a tool that generates lexical analyzers used to tokenize input streams, and yacc, a parser generator. PLY is a Python implementation of these two tools, provided as separate modules in a single package.

These modules are named lex.py and yacc.py, and they work similarly to the original UNIX tools lex and yacc.

PLY differs from its UNIX counterparts in that it doesn’t require a special input file; the lexer and grammar specifications are written directly in a Python program. Both the traditional tools and PLY rely on parsing tables, which are relatively expensive to build, so PLY caches the generated tables, reuses them on later runs, and regenerates them only when needed.
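The cache-and-regenerate idea can be sketched in plain Python. This is only an illustration of the general technique, not PLY's actual code: the helper names (build_tables, load_tables), the pickle format, and the file name are all assumptions made for the example.

```python
import hashlib
import pickle
import tempfile
from pathlib import Path


def build_tables(grammar_text):
    """Hypothetical stand-in for an expensive table-construction step."""
    return {"rules": grammar_text.strip().splitlines()}


def load_tables(grammar_text, cache_path):
    """Reuse cached tables if the grammar is unchanged; otherwise rebuild them."""
    signature = hashlib.sha256(grammar_text.encode("utf-8")).hexdigest()
    if cache_path.exists():
        cached = pickle.loads(cache_path.read_bytes())
        if cached["signature"] == signature:
            return cached["tables"], True   # cache hit
    tables = build_tables(grammar_text)
    cache_path.write_bytes(pickle.dumps({"signature": signature, "tables": tables}))
    return tables, False                    # cache miss: tables were regenerated


# Illustrative cache location (an assumption, not a PLY default).
cache_file = Path(tempfile.mkdtemp()) / "tables.pickle"
grammar = "expression : expression PLUS term"

print(load_tables(grammar, cache_file)[1])                      # first run: built
print(load_tables(grammar, cache_file)[1])                      # unchanged: cached
print(load_tables(grammar + "\nterm : NUMBER", cache_file)[1])  # changed: rebuilt
```

The second element of the returned pair only reports whether the cache was used; a real tool would simply return the tables.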

lex.py

This is one of the key modules in the package because yacc.py depends on it: lex.py breaks the input text into a collection of tokens, each of which is identified using regular expression rules.

To import this module in your Python code, use import ply.lex as lex

Example:

Suppose you wrote a simple expression: y = a + 2 * b

When this is passed through lex.py, the following tokens are generated:

'y','=', 'a', '+', '2', '*', 'b'

Each generated token is associated with a token name (its type). These names are always required and must be declared in a list called tokens.

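#Token names for the above example (each name is listed only once)

tokens = ('ID', 'NUMBER', 'EQUALS', 'PLUS', 'MINUS', 'TIMES', 'DIVIDE')

#Regular expression rules for these tokens

t_ID = r'[a-zA-Z_][a-zA-Z0-9_]*'

t_NUMBER = r'\d+'

t_EQUALS = r'='

t_PLUS = r'\+'

t_MINUS = r'-'

t_TIMES = r'\*'

t_DIVIDE = r'/'

#Characters to skip between tokens (spaces and tabs)

t_ignore = ' \t'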

More specifically, each token can be represented as a tuple of token type and token value:

('ID', 'y'), ('EQUALS', '='), ('ID', 'a'), ('PLUS', '+'),

('NUMBER', '2'), ('TIMES', '*'), ('ID', 'b')

This module also provides an external interface in the form of token(), which returns the next valid token from the input stream.
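To make the tokenization step concrete, here is a minimal sketch that produces the same (type, value) pairs using only Python's re module. It is a stand-in for the idea behind lex.py, not PLY itself; the names TOKEN_RULES, MASTER, and tokenize are made up for this example.

```python
import re

# Token names and patterns, written in the spirit of lex.py rules.
TOKEN_RULES = [
    ("NUMBER", r"\d+"),
    ("ID", r"[A-Za-z_][A-Za-z0-9_]*"),
    ("EQUALS", r"="),
    ("PLUS", r"\+"),
    ("MINUS", r"-"),
    ("TIMES", r"\*"),
    ("DIVIDE", r"/"),
]

# One master pattern with a named group per token type.
MASTER = re.compile("|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_RULES))


def tokenize(text):
    """Yield (token_type, token_value) pairs, skipping spaces and tabs."""
    pos = 0
    while pos < len(text):
        if text[pos] in " \t":
            pos += 1
            continue
        match = MASTER.match(text, pos)
        if match is None:
            raise SyntaxError(f"Illegal character {text[pos]!r} at position {pos}")
        yield match.lastgroup, match.group()
        pos = match.end()


print(list(tokenize("y = a + 2 * b")))
# [('ID', 'y'), ('EQUALS', '='), ('ID', 'a'), ('PLUS', '+'),
#  ('NUMBER', '2'), ('TIMES', '*'), ('ID', 'b')]
```

With PLY installed, the equivalent steps would be to build a lexer with lex.lex(), feed it text with lexer.input(), and call lexer.token() until it returns None.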

yacc.py

Another module of this package is yacc.py, where yacc stands for Yet Another Compiler Compiler. It can be used to implement one-pass compilers. It provides most of the features already available in UNIX yacc, along with some extra features that give yacc.py advantages over traditional yacc.

To import yacc into your Python code, use import ply.yacc as yacc

The features of yacc.py include:

- LALR(1) parsing

- Grammar Validation

- Support for empty productions

- Extensive error checking capability

- Ambiguity Resolution

yacc.py also relies on the token() interface: it calls token() repeatedly, on demand, to retrieve tokens and apply the grammar rules. The output of yacc.py is often an Abstract Syntax Tree (AST), although what the parser actually produces is up to the user.
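The pull-style interaction between parser and lexer, and the shape an AST might take, can be sketched as follows. Note the swap in technique: yacc.py is an LALR(1) table-driven parser, whereas this sketch uses a small hand-written recursive-descent parser purely to show token() being called on demand. All names here (make_token_source, parse, the nested-tuple AST layout) are assumptions for illustration.

```python
import re

TOKEN_RE = re.compile(r"\s*(?:(?P<NUMBER>\d+)|(?P<ID>[A-Za-z_]\w*)|(?P<OP>[=+*]))")


def make_token_source(text):
    """Return a token() callable: one (type, value) pair per call, then None."""
    pos = 0

    def token():
        nonlocal pos
        match = TOKEN_RE.match(text, pos)
        if match is None:          # end of input (or an unrecognised character)
            return None
        pos = match.end()
        return match.lastgroup, match.group(match.lastgroup)

    return token


def parse(token):
    """Parse 'ID = expression' and return a nested-tuple AST."""
    current = token()              # single token of lookahead

    def advance():
        nonlocal current
        previous, current = current, token()
        return previous

    def factor():
        tok = advance()
        if tok is None or tok[0] not in ("NUMBER", "ID"):
            raise SyntaxError(f"expected a number or name, got {tok!r}")
        return ("num", int(tok[1])) if tok[0] == "NUMBER" else ("id", tok[1])

    def term():                    # '*' binds tighter than '+'
        node = factor()
        while current == ("OP", "*"):
            advance()
            node = ("*", node, factor())
        return node

    def expression():
        node = term()
        while current == ("OP", "+"):
            advance()
            node = ("+", node, term())
        return node

    target = advance()
    if target is None or target[0] != "ID" or advance() != ("OP", "="):
        raise SyntaxError("expected 'ID = expression'")
    return ("=", ("id", target[1]), expression())


ast = parse(make_token_source("y = a + 2 * b"))
print(ast)
# ('=', ('id', 'y'), ('+', ('id', 'a'), ('*', ('num', 2), ('id', 'b'))))
```

The parser never sees the input text; it only asks for the next token when it needs one, which is the same division of labour that lex.py and yacc.py use.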

Advantage over UNIX yacc:

The Python implementation yacc.py doesn’t involve a separate code-generation process. Instead, it uses reflection to build its lexers and parsers, which saves space because no extra compiler-construction step or generated code file is required.

To import the tokens from your lex file, use from lex_file_name_here import tokens, where tokens is the list of token names specified in the lex file.
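As a rough illustration of what "using reflection" means here, the sketch below inspects a namespace at runtime and collects every string attribute whose name starts with t_. This mirrors the naming convention of lex.py rules, but the collect_rules helper and the Rules class are hypothetical and far simpler than what PLY does internally.

```python
class Rules:
    """Rules written in the lex.py naming style: attributes starting with t_."""
    t_PLUS = r'\+'
    t_TIMES = r'\*'
    t_EQUALS = r'='
    helper = "not a rule"   # ignored: the name does not start with t_


def collect_rules(namespace):
    """Return {token_name: pattern} for every string-valued name starting with t_."""
    return {
        name[2:]: value
        for name, value in namespace.items()
        if name.startswith("t_") and isinstance(value, str)
    }


rules = collect_rules(vars(Rules))
print(sorted(rules))
# ['EQUALS', 'PLUS', 'TIMES']
```

Because the rules are discovered from ordinary Python objects at runtime, there is no need to generate and compile a separate source file.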

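To specify the grammar rules, we define functions in our yacc file. Each function name starts with p_, the docstring holds the grammar rule, and the body holds the action for that rule. The syntax is as follows:

def p_function_name_here(p):

    'expression : expression token_name term'

    #action code: p[1], p[2], p[3] hold the values of the right-hand-side symbols; assign the result to p[0]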
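The following sketch shows how such a function carries both pieces of information, using addition as a concrete case. It runs without PLY: the docstring is read back with ordinary Python, and a plain list stands in for the object that yacc.py would pass as p (an assumption made so the example is self-contained).

```python
def p_expression_plus(p):
    'expression : expression PLUS term'
    p[0] = p[1] + p[3]


# The grammar rule is ordinary data that a tool can read at runtime.
rule = p_expression_plus.__doc__
head, body = [part.strip() for part in rule.split(":", 1)]
symbols = body.split()
print(head, symbols)
# expression ['expression', 'PLUS', 'term']

# Stand-in for the production object: index 0 is the result slot,
# indexes 1..3 hold the values of 'expression', 'PLUS', and 'term'.
p = [None, 2, "+", 3]
p_expression_plus(p)
print(p[0])
# 5
```

In a real yacc file, the parser would be built with yacc.yacc() and the function would be invoked automatically whenever the rule is reduced.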

References:

https://www.dabeaz.com/ply/ply.html
