Mutual Exclusion in Synchronization

Last Updated: 21 Sep, 2023

During concurrent execution, processes sometimes need to enter a critical section: a section of the program that accesses resources shared across processes. If several processes execute it at once, the values of the shared data can become inconsistent. In other words, the final values depend on the order in which the processes' instructions happen to execute, a situation known as a race condition. The primary task of process synchronization is to eliminate race conditions while the critical section executes.

This is primarily achieved through mutual exclusion.
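The race condition described above can be reproduced without real threads. The small Python sketch below models a single increment as three separate steps (read the shared value, add one privately, write it back) and replays two hypothetical schedules for processes P1 and P2. The `run` helper and the step names are illustrative only.

```python
def run(schedule):
    """Replay a list of (process, step) pairs against a fresh shared counter."""
    shared = 0
    regs = {}  # each process's private "register"
    for pid, step in schedule:
        if step == "read":
            regs[pid] = shared      # load the shared value
        elif step == "add":
            regs[pid] += 1          # increment the private copy
        elif step == "write":
            shared = regs[pid]      # store the private copy back
    return shared

# P1 finishes completely before P2 starts.
serial = [("P1", "read"), ("P1", "add"), ("P1", "write"),
          ("P2", "read"), ("P2", "add"), ("P2", "write")]
# Both processes read before either one writes.
interleaved = [("P1", "read"), ("P2", "read"), ("P1", "add"),
               ("P2", "add"), ("P1", "write"), ("P2", "write")]

print(run(serial))       # 2
print(run(interleaved))  # 1: P2's write overwrites P1's update
```

Both schedules execute exactly the same six steps; only their order differs, and so does the result. That order dependence is what makes it a race condition.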

Mutual Exclusion

Mutual exclusion is a property of process synchronization which states that “no two processes can be in their critical sections at the same time”. The problem was first identified and solved by Edsger W. Dijkstra in 1965. Any process synchronization technique must satisfy the property of mutual exclusion; without it, race conditions cannot be eliminated.

The need for mutual exclusion comes with concurrency. There are several kinds of concurrent execution:

- Interrupt handlers

- Interleaved, preemptively scheduled processes/threads

- Multiprocessor clusters, with shared memory

- Distributed systems

In concurrent programming, mutual exclusion methods prevent a common resource, such as a global variable, from being used simultaneously by the pieces of code that access it, called critical sections.

The requirement of mutual exclusion is that when process P1 is accessing a shared resource R1, another process should not be able to access resource R1 until process P1 has finished its operation with resource R1.

Examples of such resources include files, I/O devices such as printers, and shared data structures.
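As a minimal sketch of this requirement, the Python snippet below uses `threading.Lock` from the standard library to guard a shared counter that stands in for resource R1. While one thread holds the lock, every other thread that reaches `with counter_lock:` waits. The names `counter`, `counter_lock`, and `worker` are hypothetical.

```python
import threading

counter = 0                      # shared resource (plays the role of R1)
counter_lock = threading.Lock()  # only one thread may hold it at a time

def worker(iterations):
    global counter
    for _ in range(iterations):
        # The read-modify-write below is the critical section; other
        # threads block here until the current holder releases the lock.
        with counter_lock:
            counter += 1

threads = [threading.Thread(target=worker, args=(10_000,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(counter)  # 40000
```

The `with` statement releases the lock even if the critical section raises an exception, which is why it is usually preferred over calling `acquire()` and `release()` by hand.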

Conditions Required for Mutual Exclusion

A solution to the mutual exclusion problem must satisfy the following four conditions:

- Mutual exclusion must be ensured between processes that use shared resources: no two processes may be executing inside their critical sections at the same time.

- No assumptions may be made about the relative speeds of the processes.

- A process running outside its critical section must not prevent another process from entering its critical section.

- Every process that wants to enter its critical section must be able to do so within a finite amount of time; no process should be kept waiting forever.

Approaches To Implementing Mutual Exclusion

1. Software method: Leave the responsibility to the processes themselves. These methods are usually highly error-prone and carry high overheads.

2. Hardware method: Special-purpose machine instructions, such as test-and-set or compare-and-swap, read and update a memory location in a single atomic step. This method is faster but does not provide a complete solution on its own: hardware solutions cannot guarantee the absence of deadlock and starvation.

3. Programming language method: The operating system or the programming language provides the support, for example through semaphores, mutex locks, or monitors.

Requirements of Mutual Exclusion

- At any time, only one process is allowed to enter its critical section.

- The solution must be implementable purely in software, without relying on special hardware instructions.

- A process remains inside its critical section for a bounded time only.

- No assumption can be made about the relative speeds of asynchronous concurrent processes.

- A process running outside its critical section cannot prevent any other process from entering its critical section.

- A process must not be indefinitely postponed from entering its critical section.
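Peterson's algorithm is a classic software-only solution for two processes that meets these requirements using nothing but shared reads and writes. The Python sketch below is illustrative: the function names and the `total` counter are hypothetical. It assumes the standard CPython interpreter, whose global interpreter lock makes these reads and writes visible in program order. On real multiprocessor hardware with relaxed memory ordering, Peterson's algorithm additionally needs memory barriers.

```python
import threading
import time

# Shared variables of Peterson's algorithm for processes 0 and 1.
flag = [False, False]  # flag[i] is True while process i wants to enter
turn = 0               # which process must wait if both want to enter
total = 0              # shared data, touched only inside the critical section

def enter_critical_section(i):
    global turn
    other = 1 - i
    flag[i] = True     # announce that process i wants to enter
    turn = other       # give priority to the other process
    # Busy-wait only while the other process wants in and has priority.
    while flag[other] and turn == other:
        time.sleep(0)  # yield so the other thread can make progress

def exit_critical_section(i):
    flag[i] = False    # process i is done; the other may now enter

def process(i, iterations):
    global total
    for _ in range(iterations):
        enter_critical_section(i)
        total += 1     # critical section
        exit_critical_section(i)

threads = [threading.Thread(target=process, args=(i, 500)) for i in (0, 1)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(total)  # 1000
```

The algorithm maps onto the list above as follows. Only one process can pass the `while` test at a time. A process outside its critical section has `flag[i] == False`, so it never blocks the other. Because `turn` is handed to the other process on every entry, neither process can be postponed indefinitely.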

In order to understand mutual exclusion, let’s take an example.

Why Is Mutual Exclusion Needed?

An easy way to see why mutual exclusion matters is to imagine a linked list of several items, where the fourth and fifth items need to be removed. A node is deleted by changing its predecessor's next reference to point to its successor, which unlinks the node that lies between the two.

To put it simply, when node i is removed, the next reference of node i - 1 is changed to point to node i + 1. When a shared linked list is used by many threads, two threads can remove two adjacent nodes at the same time. Suppose the first thread removes node i by making node i - 1 point to node i + 1, while the second thread removes node i + 1 by making node i point to node i + 2. Although both removals appear to succeed, the list does not reach the required state: node i + 1 is still in the list, because the next reference of node i - 1 still points to it.
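The interleaving described above can be replayed step by step without real threads. In the Python sketch below, the `Node` class and the helpers `build`, `to_list`, and `node_at` are hypothetical. The two "threads" are simulated by running their steps in the order the paragraph describes: thread 1 removes node 4 (i) and thread 2 removes node 5 (i + 1).

```python
class Node:
    def __init__(self, value, nxt=None):
        self.value = value
        self.next = nxt

def build(values):
    """Build a singly linked list and return its head node."""
    head = None
    for v in reversed(values):
        head = Node(v, head)
    return head

def to_list(head):
    """Collect the values reachable from head."""
    out = []
    while head is not None:
        out.append(head.value)
        head = head.next
    return out

def node_at(head, position):
    """Return the node at a 1-based position."""
    for _ in range(position - 1):
        head = head.next
    return head

head = build([1, 2, 3, 4, 5, 6])

# Both threads locate the predecessor of their target first...
prev1 = node_at(head, 3)      # thread 1: node i - 1 (value 3)
prev2 = node_at(head, 4)      # thread 2: node i (value 4)
# ...and then both unlink, thread 1 before thread 2.
prev1.next = prev1.next.next  # node 3 now points to node 5
prev2.next = prev2.next.next  # node 4, already unlinked, now points to node 6

print(to_list(head))  # [1, 2, 3, 5, 6]: node 5 is still reachable
```

Thread 2's update lands on node 4, which is no longer part of the list, so node 5's removal is lost.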

This situation is called a race condition. Mutual exclusion prevents it by ensuring that no two threads can update the same part of the list at the same time.
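A minimal way to apply mutual exclusion here, assuming one lock for the whole list, is sketched below in Python. The `LockedList` class is hypothetical. Finding the predecessor and relinking happen inside the same critical section, so another thread cannot change the list between those two steps.

```python
import threading

class Node:
    def __init__(self, value, nxt=None):
        self.value = value
        self.next = nxt

class LockedList:
    """Singly linked list whose updates are serialized by one lock."""

    def __init__(self, values):
        self.head = None
        for v in reversed(values):
            self.head = Node(v, self.head)
        self.lock = threading.Lock()

    def remove(self, value):
        with self.lock:                  # critical section starts here
            prev, cur = None, self.head
            while cur is not None and cur.value != value:
                prev, cur = cur, cur.next
            if cur is None:
                return False             # value not present
            if prev is None:
                self.head = cur.next     # removing the head node
            else:
                prev.next = cur.next     # unlink cur
            return True

    def to_list(self):
        with self.lock:
            out, cur = [], self.head
            while cur is not None:
                out.append(cur.value)
                cur = cur.next
            return out

lst = LockedList([1, 2, 3, 4, 5, 6])
threads = [threading.Thread(target=lst.remove, args=(v,)) for v in (4, 5)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(lst.to_list())  # [1, 2, 3, 6]
```

A single lock is the simplest choice. Finer-grained schemes such as hand-over-hand locking let threads work on different parts of the list concurrently, but the code becomes harder to get right.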

Example:

In the clothes section of a supermarket, two people are shopping for clothes.

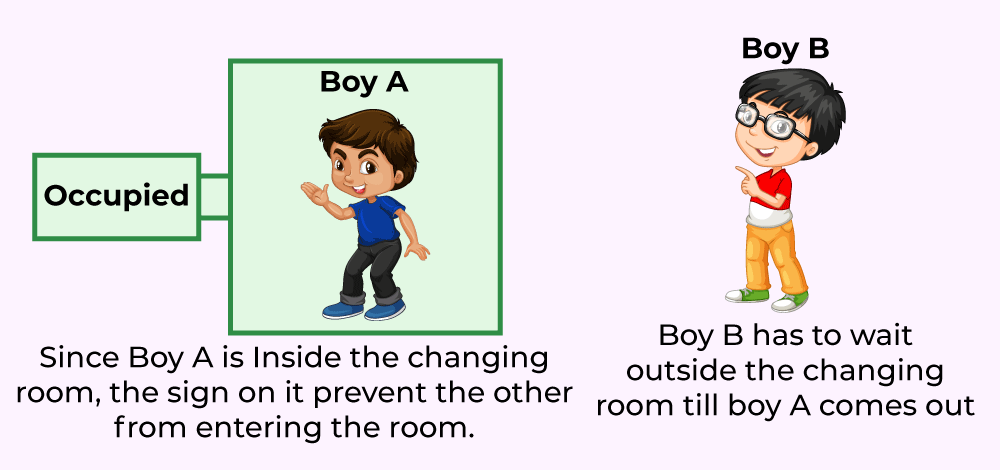

Boy A decides on some clothes to buy and heads to the changing room to try them on. While boy A is inside, the changing room shows an ‘occupied’ sign, indicating that no one else can come in. Girl B also needs to use the changing room, so she has to wait until boy A is done.

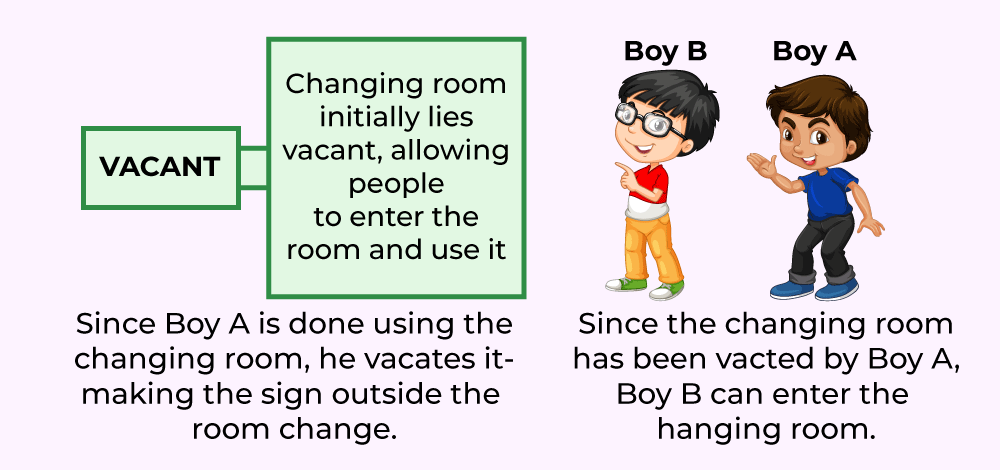

Once boy A comes out of the changing room, the sign changes from ‘occupied’ to ‘vacant’, indicating that another person can use it. Girl B then uses the changing room, and the sign displays ‘occupied’ again.

The changing room is the critical section, boy A and girl B are two different processes, and the sign outside the changing room represents the process synchronization mechanism being used.

Frequently Asked Questions

Q.1: What is a race condition?

Answer:

A race condition occurs when multiple processes or threads access shared data concurrently, and the final outcome of the program depends on the relative timing of their execution. This can lead to unexpected and undesired results, such as data corruption, incorrect calculations, or program crashes.

Q.2: How can race conditions be avoided?

Answer:

Race conditions can be avoided by implementing mutual exclusion techniques, such as locks, semaphores, or monitors. These techniques ensure that only one process or thread can access a shared resource at a time, preventing simultaneous conflicting accesses and maintaining data integrity.
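To illustrate the semaphore option, the Python sketch below uses `threading.Semaphore(1)`, a binary semaphore, so that at most one hypothetical print job uses a shared printer at a time. The bookkeeping variables `active` and `max_active` are illustrative. They have their own lock so that the measurement stays valid even if the semaphore count were raised.

```python
import threading
import time

printer = threading.Semaphore(1)  # binary semaphore: one printer available
stats_lock = threading.Lock()     # protects the counters used for checking
active = 0                        # jobs currently using the printer
max_active = 0                    # highest value `active` ever reached

def print_job():
    global active, max_active
    with printer:                 # acquire() on entry, release() on exit
        with stats_lock:
            active += 1
            max_active = max(max_active, active)
        time.sleep(0.01)          # simulate time spent printing
        with stats_lock:
            active -= 1

jobs = [threading.Thread(target=print_job) for _ in range(5)]
for job in jobs:
    job.start()
for job in jobs:
    job.join()

print(max_active)  # 1
```

Initializing the semaphore with a larger count would let that many jobs run at once. That is useful for a pool of identical resources, but it no longer gives mutual exclusion.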

Q.3: What is a deadlock?

Answer:

A deadlock is a situation in concurrent programming where two or more processes or threads are unable to proceed because each is waiting for the other to release a resource. As a result, the processes or threads are stuck in a state of waiting indefinitely, leading to a system freeze or unresponsiveness.
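The circular wait in this definition can be reproduced with two locks acquired in opposite orders. In the Python sketch below (all names are illustrative), each thread holds one lock and then asks for the other. A `timeout` on `Lock.acquire` is used only so that the demonstration terminates instead of hanging forever. The two barriers make the outcome deterministic: neither thread releases its first lock until both have tried for their second.

```python
import threading

lock_a = threading.Lock()
lock_b = threading.Lock()
both_hold_one = threading.Barrier(2)  # each thread now holds its first lock
both_tried = threading.Barrier(2)     # both have attempted their second lock
results = {}

def worker(name, first, second):
    with first:
        both_hold_one.wait()
        # Blocking here without a timeout would deadlock forever.
        got_second = second.acquire(timeout=0.2)
        if got_second:
            second.release()
        results[name] = got_second
        both_tried.wait()

t1 = threading.Thread(target=worker, args=("T1", lock_a, lock_b))
t2 = threading.Thread(target=worker, args=("T2", lock_b, lock_a))
for t in (t1, t2):
    t.start()
for t in (t1, t2):
    t.join()

print(results["T1"], results["T2"])  # False False
```

Each thread waited for a lock that the other was holding, which is exactly the situation described above. Acquiring locks in one fixed global order is a common way to rule out this particular deadlock.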
