Image GPT

Last Updated: 26 Nov, 2020

Image GPT (iGPT) was proposed by researchers at OpenAI in 2020. The paper experiments with applying a GPT-like transformer directly to sequences of pixels and evaluates the learned representations on image classification tasks. However, the authors faced some challenges, such as the cost of processing large images.

Architecture:

The architecture of Image GPT (iGPT) is similar to GPT-2, i.e. it is built from transformer decoder blocks. The transformer decoder takes an input sequence x1, …, xn of discrete tokens and outputs a d-dimensional embedding for each position. The decoder can be viewed as a stack of L blocks, the l-th of which produces intermediate embeddings h_1^l, …, h_n^l. Each block updates its input tensor h^l as follows:

- n^l = layer_norm(h^l)

- a^l = h^l + multihead_attention(n^l)

- h^(l+1) = a^l + mlp(layer_norm(a^l))

Here, layer_norm is layer normalization and mlp is a multi-layer perceptron (a small feed-forward neural network). The table below lists the model variants:

| Model Name/Variant | Input Resolution | Params (M) | Features |
|---|---|---|---|
| iGPT-Large (L) | 32*32*3 | 1362 | 1536 |
| iGPT-Large (L) | 48*48*3 | 1362 | 1536 |
| iGPT-XL | 64*64*3 | 6801 | 3072 |
| iGPT-XL | 64*64*3 | 6801 | 15360 |
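The per-block update from the architecture description (layer norm, attention with a residual connection, then an MLP with a residual connection) can be sketched in plain Python. This is a minimal illustration under simplifying assumptions, not the authors' implementation: it uses a single attention head, omits the learned scale and bias of layer normalization and the attention output projection, and swaps in ReLU for GELU. The names `decoder_block` and `random_params` are hypothetical.

```python
import math
import random


def layer_norm(v, eps=1e-5):
    """Normalize one embedding vector to zero mean and unit variance (no learned scale/bias)."""
    mean = sum(v) / len(v)
    var = sum((a - mean) ** 2 for a in v) / len(v)
    return [(a - mean) / math.sqrt(var + eps) for a in v]


def matvec(W, v):
    """Multiply matrix W (a list of rows) by vector v."""
    return [sum(w * a for w, a in zip(row, v)) for row in W]


def add(u, v):
    """Element-wise sum, used for the residual connections."""
    return [a + b for a, b in zip(u, v)]


def causal_attention(n, p):
    """Single-head dense attention; position i attends only to positions j <= i."""
    q = [matvec(p["Wq"], v) for v in n]
    k = [matvec(p["Wk"], v) for v in n]
    val = [matvec(p["Wv"], v) for v in n]
    scale = math.sqrt(len(q[0]))
    out = []
    for i in range(len(n)):
        scores = [sum(a * b for a, b in zip(q[i], k[j])) / scale for j in range(i + 1)]
        top = max(scores)
        weights = [math.exp(s - top) for s in scores]  # numerically stable softmax
        total = sum(weights)
        out.append([
            sum(weights[j] / total * val[j][c] for j in range(i + 1))
            for c in range(len(val[0]))
        ])
    return out


def mlp(v, p):
    """Two-layer perceptron; ReLU is used here for brevity (GPT-2 uses GELU)."""
    hidden = [max(0.0, a) for a in matvec(p["W1"], v)]
    return matvec(p["W2"], hidden)


def decoder_block(h, p):
    """Map h^l (a list of n embeddings) to h^(l+1)."""
    n = [layer_norm(v) for v in h]                                   # n^l
    a = [add(hv, av) for hv, av in zip(h, causal_attention(n, p))]   # a^l
    return [add(av, mlp(layer_norm(av), p)) for av in a]             # h^(l+1)


def random_params(d, rng):
    """Hypothetical helper: small random weights for a d-dimensional block."""
    def mat(rows, cols):
        return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]
    return {"Wq": mat(d, d), "Wk": mat(d, d), "Wv": mat(d, d),
            "W1": mat(4 * d, d), "W2": mat(d, 4 * d)}


rng = random.Random(0)
params = random_params(4, rng)
h = [[rng.uniform(-1, 1) for _ in range(4)] for _ in range(3)]  # 3 positions, d = 4
h_next = decoder_block(h, params)
print(len(h_next), len(h_next[0]))
```

Because attention at position i only looks at positions up to i, changing a later token leaves the outputs at earlier positions untouched, which is what makes the stack usable for auto-regressive modeling.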

Context Reduction:

The memory requirements of the transformer decoder scale quadratically with context length when using dense attention, so it takes a lot of computation to train even a single-layer transformer on full-size images. To deal with this, the authors resize the image to lower resolutions called Input Resolutions (IRs). The iGPT model uses IRs of 32*32*3, 48*48*3, and 64*64*3.
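To make the quadratic scaling concrete, the following sketch computes the context length and the number of dense attention scores for each input resolution. It assumes, for illustration, that every value in the height*width*3 array becomes one sequence element; the function names are hypothetical.

```python
def context_length(height, width, channels=3):
    """Number of tokens when every color value of every pixel is one sequence element."""
    return height * width * channels


def dense_attention_entries(n):
    """Dense self-attention scores every position against every other: n * n entries."""
    return n * n


for side in (32, 48, 64):
    n = context_length(side, side)
    entries = dense_attention_entries(n)
    print(f"{side}*{side}*3 -> context length {n}, attention entries {entries}")
```

Doubling the side length from 32 to 64 multiplies the context length by 4 and the attention matrix by 16, which is why the resolution has to stay small.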

Training Methodology:

The model training of Image GPT consists of two steps:

Pre-training:

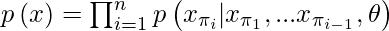

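- Given an unlabeled dataset X consisting of high dimensional data x = (x1, …, xn), we can pick a permutation π of the set [1, n] and model the density p(x) auto-regressively as a product of conditionals: p(x) = ∏_{i=1}^{n} p(x_{π_i} | x_{π_1}, …, x_{π_{i−1}}, θ), where θ denotes the model parameters.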

- For images, we pick the identity permutation πi = i for 1 ≤ i ≤ n, also known as raster order. The model is trained to minimize the negative log-likelihood:

$$L_{AR} = \mathbb{E}_{x \sim X} \left[ -\log p(x) \right]$$

- The authors also used a loss function similar to masked language modeling in BERT, which samples a sub-sequence M ⊂ [1, n] such that each index i independently has probability 0.15 of appearing in M. The model is then trained to predict the masked elements from the unmasked ones:

$$L_{BERT} = \mathbb{E}_{x \sim X} \, \mathbb{E}_{M} \sum_{i \in M} \left[ -\log p\left( x_i \mid x_{[1,n] \backslash M} \right) \right]$$

- During pre-training, the authors pick one of L_AR or L_BERT and minimize that loss over the pre-training dataset.
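The two pre-training objectives can be illustrated for a single example with a few lines of Python. This is a sketch under stated assumptions: the per-position probabilities are made-up numbers standing in for model outputs, and the function names are hypothetical rather than taken from the paper.

```python
import math
import random


def raster_order(image):
    """Flatten a 2-D grid row by row, i.e. apply the identity permutation."""
    return [value for row in image for value in row]


def autoregressive_nll(cond_probs):
    """-log p(x), where p(x) is the product of p(x_i | x_1, ..., x_{i-1}) over all positions."""
    return -sum(math.log(p) for p in cond_probs)


def sample_bert_mask(n, mask_prob=0.15, rng=random):
    """Each index joins the mask M independently with probability mask_prob."""
    return [i for i in range(n) if rng.random() < mask_prob]


def bert_nll(probs_given_unmasked, mask):
    """Sum of -log p(x_i | unmasked elements) over the masked positions only."""
    return -sum(math.log(probs_given_unmasked[i]) for i in mask)


pixels = raster_order([[0, 1], [2, 3]])          # [0, 1, 2, 3]
ar_probs = [0.5, 0.25, 0.5, 0.25]                # hypothetical conditionals, one per position
print(autoregressive_nll(ar_probs))

mask = sample_bert_mask(len(pixels), rng=random.Random(0))
masked_probs = [0.5, 0.25, 0.5, 0.25]            # hypothetical p(x_i | unmasked elements)
print(mask, bert_nll(masked_probs, mask))
```

In training, either quantity would be averaged over the dataset (and, for the BERT objective, over sampled masks) to estimate the expectations in the loss definitions.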

Fine-tuning:

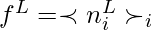

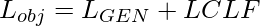

- For fine-tuning, the authors average pool n^L (the layer-normalized output of the final block) across the sequence dimension to extract a d-dimensional vector of features per example, and learn a projection from this pooled vector to class logits. The projection is used to minimize the cross-entropy loss L_CLF. That makes the total objective function L_GEN + L_CLF, where L_GEN is either L_AR or L_BERT.
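The fine-tuning head described above can be sketched as follows: average pooling over the sequence, a linear projection to class logits, a cross-entropy loss, and the sum with the generative loss. This is a minimal illustration with toy numbers; the function names and the example weights are assumptions, not the authors' code.

```python
import math


def average_pool(n_L):
    """Mean over the sequence dimension: n vectors of size d -> one vector of size d."""
    length = len(n_L)
    return [sum(column) / length for column in zip(*n_L)]


def project(W, b, f):
    """Learned linear projection from pooled features to class logits."""
    return [sum(w * x for w, x in zip(row, f)) + bias for row, bias in zip(W, b)]


def cross_entropy(logits, label):
    """L_CLF for one example: -log softmax(logits)[label], computed stably."""
    top = max(logits)
    log_z = top + math.log(sum(math.exp(z - top) for z in logits))
    return log_z - logits[label]


def fine_tuning_loss(l_gen, l_clf):
    """Total objective: generative loss (L_AR or L_BERT) plus classification loss."""
    return l_gen + l_clf


n_L = [[1.0, 2.0], [3.0, 4.0]]           # toy final-layer features: 2 positions, d = 2
features = average_pool(n_L)             # [2.0, 3.0]
W = [[1.0, 0.0], [0.0, 1.0]]             # toy projection weights for 2 classes
logits = project(W, [0.0, 0.0], features)
l_clf = cross_entropy(logits, label=1)
print(features, logits, fine_tuning_loss(l_gen=1.5, l_clf=l_clf))
```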

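The authors also experimented with linear probing, which follows a similar procedure to fine-tuning except that the pre-trained weights stay frozen and only a linear classifier is trained on the extracted features. For linear probing, the average-pooled features are not always taken from the final layer and can come from an intermediate layer instead.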

Results:

- On CIFAR-10, iGPT-L achieves 99.0% accuracy, and on CIFAR-100 it achieves 88.5% accuracy after fine-tuning. iGPT-L outperforms AutoAugment, the best supervised model on these datasets.

- On ImageNet, iGPT achieves 66.3% accuracy after fine-tuning at MR (model resolution) 32*32, an improvement of 6% over linear probing. When fine-tuning at MR 48*48, the model achieves 72.6% accuracy, with a similar 7% improvement over linear probing.
