Vectorization Of Gradient Descent

Last Updated :

24 Oct, 2020

In Machine Learning, Regression problems can be solved in the following ways:

1. Using Optimization Algorithms – Gradient Descent

- Batch Gradient Descent.

- Stochastic Gradient Descent.

- Mini-Batch Gradient Descent

- Other Advanced Optimization Algorithms like ( Conjugate Descent … )

2. Using the Normal Equation :

- Using the concept of Linear Algebra.

Let’s consider the case for Batch Gradient Descent for Univariate Linear Regression Problem.

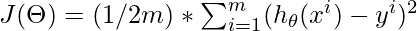

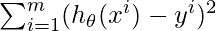

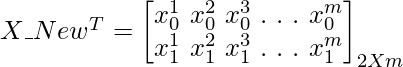

The cost function for this Regression Problem is :

Goal:

In order to solve this problem, we can either go for a Vectorized approach ( Using the concept of Linear Algebra ) or unvectorized approach (Using for-loop).

1. Unvectorized Approach:

Here in order to solve the below mentioned mathematical expressions, We use for loop.

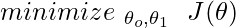

The above mathematical expression is a part of Cost Function.

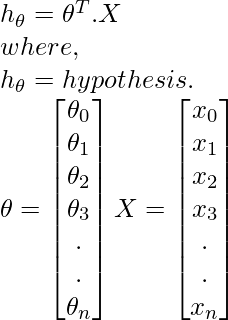

The above Mathematical Expression is the hypothesis.

Code: Python Implementation of Unvectorzed Grad

Code: Python Implementation of Unvectorzed Grad

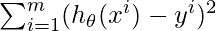

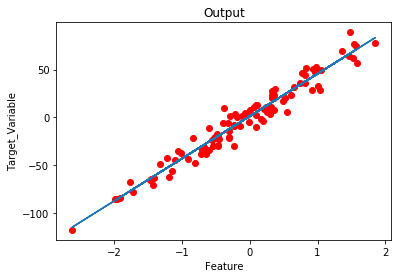

from sklearn.datasets import make_regression

import matplotlib.pyplot as plt

import numpy as np

import time

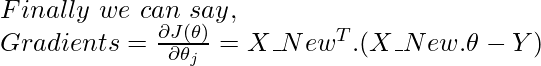

x, y = make_regression(n_samples = 100, n_features = 1,

n_informative = 1, noise = 10, random_state = 42)

plt.scatter(x, y, c = 'red')

plt.xlabel('Feature')

plt.ylabel('Target_Variable')

plt.title('Training Data')

plt.show()

y = y.reshape(100, 1)

num_iter = 1000

alpha = 0.01

m = len(x)

theta = np.zeros((2, 1),dtype = float)

t0 = t1 = 0

Grad0 = Grad1 = 0

start_time = time.time()

for i in range(num_iter):

for j in range(m):

Grad0 = Grad0 + (theta[0] + theta[1] * x[j]) - (y[j])

for k in range(m):

Grad1 = Grad1 + ((theta[0] + theta[1] * x[k]) - (y[k])) * x[k]

t0 = theta[0] - (alpha * (1/m) * Grad0)

t1 = theta[1] - (alpha * (1/m) * Grad1)

theta[0] = t0

theta[1] = t1

Grad0 = Grad1 = 0

print('model parameters:',theta,sep = '\n')

print('Time Taken For Gradient Descent in Sec:',time.time()- start_time)

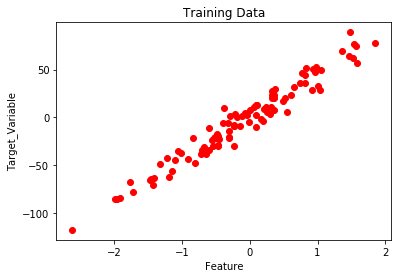

h = []

for i in range(m):

h.append(theta[0] + theta[1] * x[i])

plt.plot(x,h)

plt.scatter(x,y,c = 'red')

plt.xlabel('Feature')

plt.ylabel('Target_Variable')

plt.title('Output')

|

Output:

model parameters:

[[ 1.15857049]

[44.42210912]]

Time Taken For Gradient Descent in Sec: 2.482538938522339

2. Vectorized Approach:

Here in order to solve the below mentioned mathematical expressions, We use Matrix and Vectors (Linear Algebra).

The above mathematical expression is a part of Cost Function.

The above Mathematical Expression is the hypothesis.

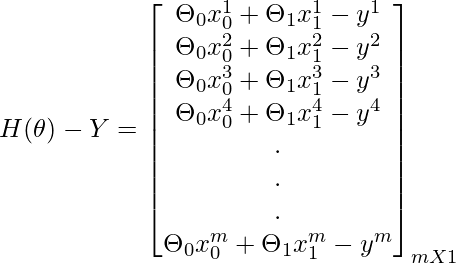

Batch Gradient Descent :

Concept To Find Gradients Using Matrix Operations:

Code: Python implementation of vectorized Gradient Descent approach

Code: Python implementation of vectorized Gradient Descent approach

from sklearn.datasets import make_regression

import matplotlib.pyplot as plt

import numpy as np

import time

x, y = make_regression(n_samples = 100, n_features = 1,

n_informative = 1, noise = 10, random_state = 42)

plt.scatter(x, y, c = 'red')

plt.xlabel('Feature')

plt.ylabel('Target_Variable')

plt.title('Training Data')

plt.show()

X_New = np.array([np.ones(len(x)), x.flatten()]).T

y = y.reshape(100, 1)

num_iter = 1000

alpha = 0.01

m = len(x)

theta = np.zeros((2, 1),dtype = float)

start_time = time.time()

for i in range(num_iter):

gradients = X_New.T.dot(X_New.dot(theta)- y)

theta = theta - (1/m) * alpha * gradients

print('model parameters:',theta,sep = '\n')

print('Time Taken For Gradient Descent in Sec:',time.time() - start_time)

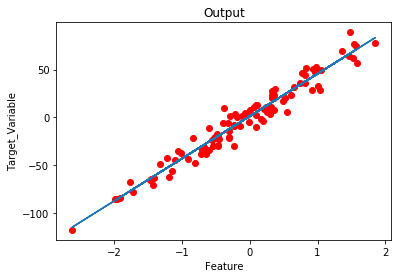

h = X_New.dot(theta)

plt.scatter(x, y, c = 'red')

plt.plot(x ,h)

plt.xlabel('Feature')

plt.ylabel('Target_Variable')

plt.title('Output')

|

Output:

model parameters:

[[ 1.15857049]

[44.42210912]]

Time Taken For Gradient Descent in Sec: 0.019551515579223633

Observations:

- Implementing a vectorized approach decreases the time taken for execution of Gradient Descent( Efficient Code ).

- Easy to debug.

Share your thoughts in the comments

Please Login to comment...