Sorting larger file with smaller RAM

Last Updated: 16 Oct, 2019

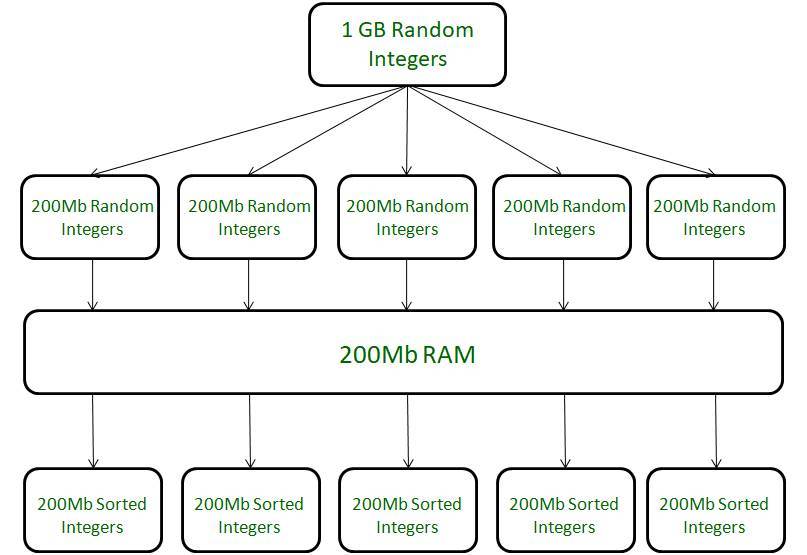

Suppose we have to sort a 1GB file of random integers and the available RAM is only 200MB. How can it be done?

The easiest way to do this is to use external sorting.

We divide the source file into temporary files, each no larger than the available RAM, and first sort each of these files in memory.

Assume 1GB = 1000MB for simplicity (with 1GB = 1024MB there would be a sixth temporary file holding the remaining 24MB), so we follow these steps.

- Divide the source file into 5 small temporary files, each of size 200MB (i.e., equal to the size of the RAM).

- Sort these temporary files one by one, loading each into RAM in turn (any in-memory sorting algorithm works: quick sort, merge sort).

Now we have small sorted temporary files as shown in the image below.

Figure – Dividing source file in smaller sorted temp files
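The splitting phase described above can be sketched in Python. This is a minimal illustration, not a tuned implementation: it assumes the source is a text file with one integer per line, and the function name `split_into_sorted_runs` and the parameter `max_items` (how many integers are held in memory at once) are hypothetical names chosen for this example. In practice `max_items` has to be chosen so that the in-memory list stays well below the available RAM.

```python
import os
import tempfile
from itertools import islice


def split_into_sorted_runs(source_path, max_items, tmp_dir=None):
    """Split the source file into sorted temporary files ("runs").

    Assumes one integer per line. At most max_items lines are read
    into memory at a time.
    """
    run_paths = []
    with open(source_path) as src:
        while True:
            # Read the next chunk: at most max_items lines, skipping blanks.
            chunk = [int(line) for line in islice(src, max_items) if line.strip()]
            if not chunk:
                break
            chunk.sort()  # in-memory sort of this chunk only
            # Write the sorted chunk to its own temporary file.
            fd, path = tempfile.mkstemp(suffix=".run", dir=tmp_dir, text=True)
            with os.fdopen(fd, "w") as out:
                out.writelines(f"{n}\n" for n in chunk)
            run_paths.append(path)
    return run_paths
```

Calling `split_into_sorted_runs("input.txt", 1_000_000)` would return the list of temporary file paths, each holding one sorted chunk; the file name and chunk size here are placeholders.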

Next, the sorted temporary files are merged into a single sorted file:

- A pointer is initialized at the first element of each temporary file.

- A new output file is created; it will grow to 1GB (the size of the source file).

- The elements currently under the pointers, one from each file, are compared.

- The smallest of these elements is copied into the output file, and the pointer in the file it came from is advanced by one element.

- The same process is repeated until all pointers have reached the end of their respective files.

- When all the pointers have traversed their files, the output file contains the 1GB of integers in sorted order.
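The merge steps above can be sketched as follows. This is an illustrative sketch under stated assumptions: every temporary file is already sorted and holds one integer per line, each open file's read position plays the role of the pointer, and the name `merge_sorted_runs` is hypothetical. Instead of comparing all current elements linearly on every step, the sketch keeps them in a min-heap (Python's `heapq`), which is a swapped-in optimization that yields the same result.

```python
import heapq


def merge_sorted_runs(run_paths, output_path):
    """Merge already-sorted files (one integer per line) into output_path."""
    files = [open(p) for p in run_paths]
    try:
        # Heap entries are (current value, index of the file it came from).
        heap = []
        for idx, f in enumerate(files):
            line = f.readline()
            if line:
                heap.append((int(line), idx))
        heapq.heapify(heap)

        with open(output_path, "w") as out:
            while heap:
                # The smallest element among all current pointers.
                value, idx = heapq.heappop(heap)
                out.write(f"{value}\n")
                # Advance the pointer in the file that supplied it.
                line = files[idx].readline()
                if line:
                    heapq.heappush(heap, (int(line), idx))
    finally:
        for f in files:
            f.close()
```

Only one element per temporary file is held in memory at any time, so memory use depends on the number of files rather than on their size. The standard library's `heapq.merge` implements the same idea for arbitrary sorted iterables.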

This is how any larger file can be sorted when there is a limitation on the size of primary memory (RAM).

The basic idea is to divide the larger file into smaller temporary files, sort the temporary files, and then create a new file by merging them. This question was asked in an Infosys interview for the Power Programmer profile.
