Project Idea | Motion detection using Background Subtraction Techniques

Last Updated: 25 Nov, 2022

Foreground detection based on video streams is the first step in computer vision applications, including real-time tracking and event analysis. Many researchers in the field of image and video semantics analysis pay attention to intelligent video surveillance in residential areas, junctions, shopping malls, subways, and airports, which is closely associated with foreground detection.

Background modeling is an efficient way to obtain foreground objects. Though background modeling methods for foreground detection have been studied for several decades, each method has its own strengths and weaknesses in detecting objects of interest from video streams.

Some challenges are very significant for background subtraction (BS) and are unusual in other benchmarks. This project compares three background subtraction (BGS) methods, namely Adaptive BG Learning, ZivkovicGMM, and Fuzzy Integral, using various methodologies.

Features:

- Can eliminate noise in the sequence of frames effectively using suitable BGS methods.

- Can efficiently detect the foreground, provided alpha and the threshold are fixed.

- Motion can be detected under different challenges by suppressing issues such as a dynamic background.

BG Modeling Steps:

- Background initialization: The first step in building a background model is to fix the number of frames used. The model can be designed in various ways (Gaussian, fuzzy, etc.).

- Foreground detection: In subsequent frames, the current frame is compared with the background model. This subtraction yields the foreground of the scene.

- Background maintenance: During detection, images are also analyzed in order to update the background model learned at the initialization step, with respect to a learning rate. For example, an object that does not move for a long time should be integrated into the background.

BG Subtraction Methods step by step

- Adaptive BG Learning: In its simplest form, this can be done by manually setting a static image that represents the background and contains no moving objects.

- For each video frame, compute the absolute difference between the current frame and the static image.

- If the absolute difference exceeds the threshold, the pixel is regarded as foreground; otherwise, it is regarded as background.

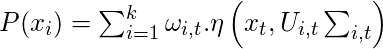
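The thresholding rule for the static background image can be sketched as below. This is only an illustration under stated assumptions: frames are 8-bit grayscale buffers in plain `std::vector` containers rather than OpenCV matrices, and the function name `foregroundMask` and the 255/0 mask convention are chosen here for the example.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstdlib>
#include <vector>

// Compares each pixel of the current frame with the static background image.
// Returns a mask with 255 for foreground pixels and 0 for background pixels.
// Assumption: both buffers hold the same number of 8-bit grayscale pixels.
std::vector<std::uint8_t> foregroundMask(const std::vector<std::uint8_t>& frame,
                                         const std::vector<std::uint8_t>& background,
                                         int threshold) {
    std::vector<std::uint8_t> mask(frame.size(), 0);
    for (std::size_t i = 0; i < frame.size(); ++i) {
        // Widen to int first so the subtraction cannot wrap around.
        const int difference =
            std::abs(static_cast<int>(frame[i]) - static_cast<int>(background[i]));
        mask[i] = difference > threshold ? 255 : 0;
    }
    return mask;
}
```

The threshold trades off sensitivity against noise: a low value marks small illumination changes as foreground, while a high value can miss objects whose intensity is close to the background.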

- Gaussian mixture model (GMM): In order to model a background with dynamic texture (such as waves on the water or trees shaken by the wind), each pixel is modeled as a mixture of K Gaussian distributions.

- For each video frame, the probability that the input pixel value x from the current frame at time t is a background pixel is represented by a mixture of K Gaussians.

- A new pixel is checked against the existing K Gaussian distributions until a match is found.

- If none of the K distributions matches the current pixel value, the least probable distribution is replaced with a distribution that has the current value as its mean.

- If a pixel value cannot be matched to the background model distributions, it is labeled "in motion"; otherwise, it is labeled as a background pixel.
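The matching and replacement steps above can be sketched for a single grayscale pixel as follows. In the commonly used formulation (stated here as an assumption, since the mixture equation is not shown in this article), the probability of observing x at time t is the weighted sum of the K Gaussian densities. The sketch simplifies further: a match means lying within 2.5 standard deviations of a mean, the "least probable" distribution is taken to be the one with the lowest weight, and every matched pixel is treated as background. All names and constants are hypothetical.

```cpp
#include <algorithm>
#include <array>
#include <cmath>
#include <cstddef>

constexpr std::size_t kModes = 3;  // K Gaussians per pixel (assumed value)

struct Gaussian {
    double weight;
    double mean;
    double variance;
};

using PixelModel = std::array<Gaussian, kModes>;

// Checks x against the pixel's K distributions and updates the model.
// Returns true when no distribution matches, i.e. the pixel is "in motion".
bool classifyAndUpdate(PixelModel& model, double x, double alpha = 0.05) {
    const double matchSigmas = 2.5;        // assumed match tolerance
    const double initialVariance = 225.0;  // assumed spread of a new mode
    const double initialWeight = 0.05;     // assumed weight of a new mode
    const double minVariance = 4.0;        // floor so a mode never collapses

    std::size_t matched = kModes;  // kModes means "no match found"
    for (std::size_t i = 0; i < kModes; ++i) {
        if (std::abs(x - model[i].mean) <= matchSigmas * std::sqrt(model[i].variance)) {
            matched = i;
            break;
        }
    }

    if (matched == kModes) {
        // Replace the lowest-weight mode with one centered on the new value.
        auto weakest = std::min_element(
            model.begin(), model.end(),
            [](const Gaussian& a, const Gaussian& b) { return a.weight < b.weight; });
        *weakest = Gaussian{initialWeight, x, initialVariance};
    } else {
        // Reinforce the matched mode and decay the others.
        for (std::size_t i = 0; i < kModes; ++i) {
            model[i].weight = (1.0 - alpha) * model[i].weight + (i == matched ? alpha : 0.0);
        }
        Gaussian& g = model[matched];
        const double d = x - g.mean;
        g.mean += alpha * d;
        g.variance = std::max(minVariance, (1.0 - alpha) * g.variance + alpha * d * d);
    }

    // Keep the weights summing to one.
    double total = 0.0;
    for (const Gaussian& g : model) total += g.weight;
    for (Gaussian& g : model) g.weight /= total;

    return matched == kModes;
}
```

A full implementation would also rank the distributions and treat only the most persistent ones as background, so a newly created mode is not accepted as background immediately.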

- Fuzzy Integral: The background is initialized using the average of the first N video frames where objects are present. An update rule for the background image is necessary to adapt the system to environmental changes over time. For this, a selective maintenance scheme is adopted.

- The fuzzy integral nonlinearly aggregates the outcomes of all criteria.

- The pixel at position (x, y) is considered foreground if its Choquet integral value is less than a certain constant threshold, which means that the pixels at the same position in the background and the current image are not similar.

- This threshold is a constant value that depends on each video data set.
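The aggregation and thresholding steps can be illustrated with the standard discrete Choquet integral. This sketch assumes three similarity criteria per pixel, each in [0, 1], and a fuzzy measure stored as a table indexed by subset bitmask; the criteria count, the names, and the measure values are assumptions for illustration and are not taken from the project.

```cpp
#include <algorithm>
#include <array>
#include <cstddef>
#include <numeric>

constexpr std::size_t kCriteria = 3;  // number of similarity criteria (assumed)
constexpr std::size_t kSubsets = std::size_t{1} << kCriteria;

// Discrete Choquet integral of the similarity values with respect to a fuzzy
// measure. measure[mask] is the measure of the subset of criteria whose bits
// are set in mask; measure[0] should be 0 and measure[kSubsets - 1] should be 1.
double choquetIntegral(const std::array<double, kCriteria>& similarity,
                       const std::array<double, kSubsets>& measure) {
    // Visit the criteria in ascending order of their similarity value.
    std::array<std::size_t, kCriteria> order;
    std::iota(order.begin(), order.end(), std::size_t{0});
    std::sort(order.begin(), order.end(),
              [&](std::size_t a, std::size_t b) { return similarity[a] < similarity[b]; });

    std::size_t subset = kSubsets - 1;  // start with all criteria included
    double previous = 0.0;
    double result = 0.0;
    for (std::size_t index : order) {
        // Weight each increment by the measure of the criteria not yet visited.
        result += (similarity[index] - previous) * measure[subset];
        previous = similarity[index];
        subset &= ~(std::size_t{1} << index);
    }
    return result;
}

// A pixel is foreground when the aggregated similarity falls below the threshold.
bool isForeground(const std::array<double, kCriteria>& similarity,
                  const std::array<double, kSubsets>& measure,
                  double threshold) {
    return choquetIntegral(similarity, measure) < threshold;
}
```

When the measure is additive, the integral reduces to a weighted average of the criteria; a non-additive measure is what lets the aggregation model interaction between criteria.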

Software and hardware required:

- Library: OpenCV

- Language: C++

- Environment: Visual Studio

- Hardware: 2.67 GHz Core i5, 4 GB RAM

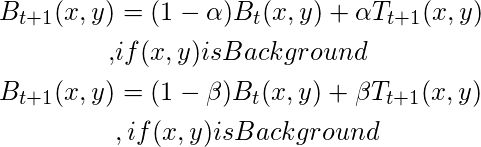

Diagram

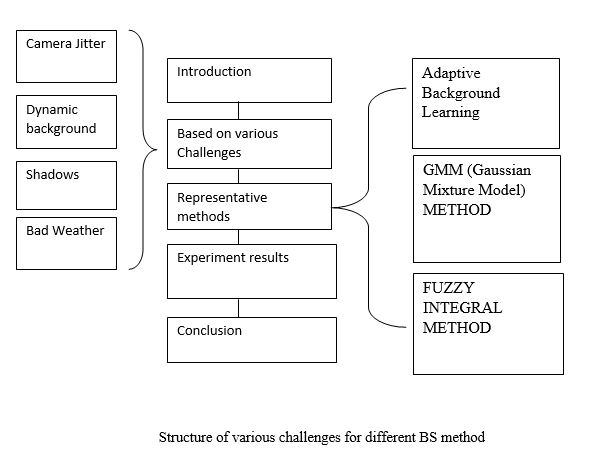

This shows how our foreground detection methods perform under different challenges.

Result

Conclusion

In this work, we have presented several background subtraction methods, which are required for foreground detection. These are necessary for several technologies used in computer vision. Though each tested method has different advantages and disadvantages, we found that AGMM and Fuzzy Integral are the most promising methods. These results will be helpful in understanding and developing new foreground detection algorithms that take parameters into account.

Project Link

https://saanjk@bitbucket.org/saanjk/bgs-methods-for-motion-detection.git


Research

Detecting foreground objects in motion is a challenging task owing to their variable appearance. It remains an active research topic, particularly for the surveillance of large crowds in real-time applications. Research areas include image processing, artificial intelligence, and machine learning.

- https://hal.archives-ouvertes.fr/hal-00333086/document

- http://ieeexplore.ieee.org/document/7565562/

- http://vc.cs.nthu.edu.tw/home/paper/codfiles/whtung/200603141526/Improved_Adaptive_Gaussian_Mixture_Model_for_Background.PDF

Application:

Video surveillance systems in residential areas, junctions, shopping malls, subways, and airports.

This article is contributed by Afzal Ansari and co-guided by Prof. Subrata Mohanty.
