Google’s Search Autocomplete High-Level Design (HLD)

Last Updated: 15 Apr, 2024

Google Search Autocomplete is a feature that predicts and suggests search queries as users type into the search bar. As users begin typing a query, Google’s autocomplete algorithm generates a dropdown menu with suggested completions based on popular searches, user history, and other relevant factors.

- In this article, we’ll discuss the high-level design of Google’s Search Autocomplete feature. This functionality predicts and suggests search queries as users type, enhancing the search experience.

- We’ll explore the architecture, components, and challenges involved in building a scalable and efficient autocomplete system. Understanding Google’s approach can provide valuable insights for developers and engineers working on similar systems.

Important Topics for Google’s Search Autocomplete High-Level Design

- Instant Match Ideas: As you type, autocomplete should show matching suggestions immediately, so the experience feels smooth and fast.

- Accurate and Fitting: Suggestions should be precise and relevant to what you have typed so far. Ranking algorithms achieve this by predicting the query you are most likely to want.

- Customized Guesses: Autocomplete should use signals such as your location, past searches, and popular topics so that its suggestions fit you specifically.

- Data Handling Made Easy: Google must store a very large number of user searches and suggestions and retrieve them quickly, so it needs efficient ways to store and look up this data.

- Speed Matters: Autocomplete must respond with very low latency. Suggestions should appear as soon as you start typing, even if you are far from Google’s data centers.

- You Can Count On It: Autocomplete needs to be reliable. It should always return accurate suggestions, without interruptions or downtime.

- Many Users, No Problem: Many people use Google at the same time. The system must handle this concurrent load and keep running smoothly during peak times.

- Global Scale: The autocomplete system should return fast, relevant suggestions worldwide. It should work well for people in different regions and languages while behaving consistently and staying accurate everywhere.

- Security and Privacy: The system must protect user data and privacy. It should handle search queries and suggestions securely and comply with applicable regulations and privacy policies.

- Adaptability and Evolution: The system should evolve as user habits, search trends, and technology change. Regular updates and improvements keep it useful for users and help it stay competitive in the search engine market.

Capacity Estimation for Google’s Search Autocomplete

Traffic Estimations for Google’s Search Autocomplete

- User Traffic (UT): This is the total number of searches Google receives per day globally. Let’s assume this to be 3 billion searches per day.

- Queries per User (QPU): This represents the average number of searches performed by a user in a single session. Let’s assume a user performs 3 searches in one session.

- Average Session Duration (ASD): This is the average time a user spends in a single search session. Let’s assume this to be 5 minutes.

- Queries per Second (QPS): This is the average number of queries the system receives per second. With the assumptions above, it is calculated by multiplying the user traffic by the queries per user and dividing by the number of seconds in a day: QPS=(User Traffic×Queries per User)/Seconds in a Day

Let’s calculate QPS using the provided assumptions:

UT=3×10^9 searches/day

QPU=3 searches/session

ASD=5 minutes=5/60 hours

Seconds in a Day=24×60×60=86,400 seconds

Plugging in these values:

QPS=(3×10^9×3)/86,400

QPS≈104,167 queries/second
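The arithmetic above can be checked with a few lines of Python. This is only a sketch that plugs the article's assumed values into the stated formula; the function and variable names are illustrative.

```python
# Seconds in a Day = 24 x 60 x 60
SECONDS_PER_DAY = 24 * 60 * 60


def queries_per_second(user_traffic: float, queries_per_user: float) -> float:
    """QPS = (User Traffic x Queries per User) / Seconds in a Day."""
    return user_traffic * queries_per_user / SECONDS_PER_DAY


# Assumed inputs from the estimates above: UT = 3 x 10^9, QPU = 3.
qps = queries_per_second(user_traffic=3e9, queries_per_user=3)
print(f"{qps:,.0f} queries/second")
```

Note that the Average Session Duration (ASD) does not enter this formula; it is listed only as a traffic assumption.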

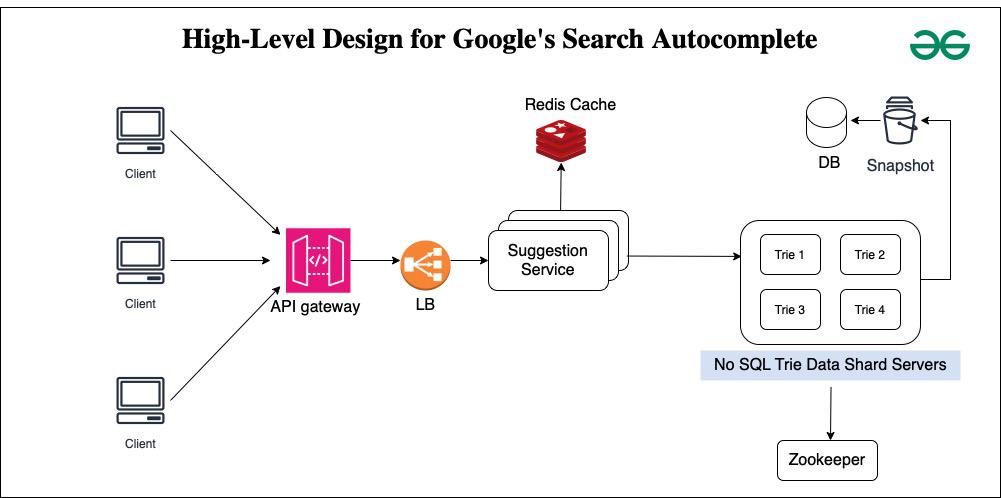

1. Clients:

- These are the end-users or applications that interact with the autocomplete system to get search suggestions.

- Clients send search queries to the API Gateway for processing and receive autocomplete suggestions in response.

2. API Gateway:

- The API Gateway acts as an entry point for clients to access the autocomplete system.

- It receives incoming requests from clients, routes them to the appropriate backend services, and handles authentication, authorization, and rate limiting.

- In this design, the API Gateway abstracts the internal components and provides a unified interface for clients to interact with the system.
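Rate limiting at the gateway can be implemented in several ways. The sketch below uses a token bucket, which is one common choice and not necessarily what Google uses. The class name, the parameters, and the injectable `clock` argument are assumptions made for illustration.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow up to `capacity` requests in a burst, refilled at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.clock = clock                # injectable so the limiter can be tested
        self.tokens = float(capacity)     # start with a full bucket
        self.last = clock()

    def allow(self) -> bool:
        """Return True if the request may proceed, False if it should be rejected."""
        now = self.clock()
        # Refill in proportion to the time elapsed, capped at the bucket capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical per-client limit: 10 requests/second with bursts of up to 20.
limiter = TokenBucket(rate=10, capacity=20)
print(limiter.allow())
```

In a real gateway, one bucket would typically be kept per client or API key, so that a single heavy client cannot exhaust the capacity shared by others.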

3. Load Balancers:

- Load balancers distribute incoming client requests across multiple instances of the suggestion service to ensure scalability, fault tolerance, and optimal resource utilization.

- They monitor the health of backend servers and route traffic accordingly.

- In the context of Google Search Autocomplete, load balancers ensure that requests are evenly distributed among suggestion service instances to handle varying loads efficiently.
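The two behaviours described above, even distribution and health-aware routing, can be sketched with a round-robin balancer that skips unhealthy backends. Round-robin is an assumed policy chosen for simplicity, and the backend names are hypothetical.

```python
from typing import Iterable, List, Set


class RoundRobinBalancer:
    """Rotate through backends in order, skipping any that are marked unhealthy."""

    def __init__(self, backends: Iterable[str]) -> None:
        self.backends: List[str] = list(backends)
        self.healthy: Set[str] = set(self.backends)
        self._next = 0

    def mark_down(self, backend: str) -> None:
        """Called when a health check fails."""
        self.healthy.discard(backend)

    def mark_up(self, backend: str) -> None:
        """Called when a health check succeeds again."""
        if backend in self.backends:
            self.healthy.add(backend)

    def pick(self) -> str:
        """Return the next healthy backend in rotation."""
        # Try each backend at most once per call.
        for _ in range(len(self.backends)):
            backend = self.backends[self._next % len(self.backends)]
            self._next += 1
            if backend in self.healthy:
                return backend
        raise RuntimeError("no healthy backends available")


# Hypothetical suggestion-service instances.
balancer = RoundRobinBalancer(["suggest-1", "suggest-2", "suggest-3"])
balancer.mark_down("suggest-2")
print([balancer.pick() for _ in range(4)])
```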

4. Suggestion Service:

- The suggestion service is the core component responsible for generating autocomplete suggestions based on incoming search queries.

- It processes queries, retrieves relevant suggestions from the data store (Redis cache or NoSQL Trie data servers), and returns them to the client via the API Gateway.

- This service may incorporate various algorithms and data structures to efficiently retrieve and rank suggestions.

- In this design, the suggestion service is responsible for both fetching suggestions and serving them to clients.
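The request flow described above (check the cache, fall back to the trie data servers, return the result) is often called the cache-aside pattern. The sketch below assumes a plain dictionary in place of Redis and a callable in place of the trie servers; both, along with the sample data, are hypothetical stand-ins.

```python
from typing import Callable, Dict, List


class SuggestionService:
    """Cache-aside lookup: try the cache first, then fall back to the trie store."""

    def __init__(self, cache: Dict[str, List[str]],
                 trie_lookup: Callable[[str], List[str]]) -> None:
        self.cache = cache              # stand-in for the Redis cache
        self.trie_lookup = trie_lookup  # stand-in for a call to the trie data servers

    def suggest(self, prefix: str) -> List[str]:
        key = prefix.strip().lower()    # normalize so "Goo" and "goo " share one entry
        if not key:
            return []
        if key in self.cache:           # cache hit: no backend call needed
            return self.cache[key]
        results = self.trie_lookup(key)  # cache miss: ask the trie store
        self.cache[key] = results        # remember the answer for next time
        return results


# Hypothetical backing data and a lookup function that records its calls.
FAKE_TRIE = {"goo": ["google", "google maps", "good morning"]}
backend_calls: List[str] = []


def fake_trie_lookup(prefix: str) -> List[str]:
    backend_calls.append(prefix)
    return FAKE_TRIE.get(prefix, [])


service = SuggestionService(cache={}, trie_lookup=fake_trie_lookup)
print(service.suggest("Goo"))
print(service.suggest("goo "))   # served from the cache
print(len(backend_calls))        # the backend was contacted once
```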

5. Redis Cache:

- Redis cache is an in-memory data store used to cache frequently accessed search queries and their corresponding autocomplete suggestions.

- It helps reduce latency by storing precomputed results and serving them quickly to clients without hitting the backend data store.

- Redis cache improves performance by caching frequently requested suggestions, reducing the load on the backend suggestion service and improving overall system responsiveness.
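To illustrate the caching behaviour without requiring a running Redis server, the sketch below is a small in-process stand-in that expires entries after a time-to-live and evicts the least recently used entry when full. It is not a Redis client; the class name, the limits, and the `clock` argument are assumptions.

```python
import time
from collections import OrderedDict
from typing import Callable, List, Optional


class TTLCache:
    """In-memory cache with per-entry expiry and least-recently-used eviction."""

    def __init__(self, max_items: int = 1000, ttl_seconds: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_items = max_items
        self.ttl = ttl_seconds
        self.clock = clock
        self._data: "OrderedDict[str, tuple]" = OrderedDict()  # key -> (expires_at, value)

    def get(self, key: str) -> Optional[List[str]]:
        item = self._data.get(key)
        if item is None:
            return None
        expires_at, value = item
        if self.clock() >= expires_at:   # stale entry: drop it and report a miss
            del self._data[key]
            return None
        self._data.move_to_end(key)      # mark as most recently used
        return value

    def set(self, key: str, value: List[str]) -> None:
        self._data[key] = (self.clock() + self.ttl, value)
        self._data.move_to_end(key)
        if len(self._data) > self.max_items:
            self._data.popitem(last=False)  # evict the least recently used entry


cache = TTLCache(max_items=2, ttl_seconds=30)
cache.set("goo", ["google", "google maps"])
print(cache.get("goo"))
print(cache.get("yah"))
```

A short time-to-live keeps popular prefixes fast while letting suggestions refresh as search trends change.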

6. NoSQL Trie Data Servers:

- NoSQL Trie data servers store the trie data structure used for efficient prefix matching and search.

- They maintain a distributed, scalable database of search queries organized in a trie format, allowing for fast lookup of autocomplete suggestions.

- These servers store the raw trie data structure, enabling efficient retrieval of suggestions without needing to compute them on the fly.
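A minimal single-process sketch of such a trie is shown below. To avoid computing suggestions on the fly, each node keeps the top `k` completions beneath it, so a lookup only has to walk the prefix. The class names, the value of `k`, and the sample counts are illustrative assumptions, and a production system would shard this structure across servers.

```python
from typing import Dict, List, Tuple


class TrieNode:
    __slots__ = ("children", "top")

    def __init__(self) -> None:
        self.children: Dict[str, "TrieNode"] = {}
        self.top: List[Tuple[int, str]] = []  # best (count, query) pairs under this node


class Trie:
    """Prefix tree that precomputes the k most popular completions at every node."""

    def __init__(self, k: int = 5) -> None:
        self.root = TrieNode()
        self.k = k

    def insert(self, query: str, count: int) -> None:
        """Add a query with its popularity count (assumes counts are built offline)."""
        node = self.root
        for ch in query:
            node = node.children.setdefault(ch, TrieNode())
            self._update_top(node, query, count)

    def _update_top(self, node: TrieNode, query: str, count: int) -> None:
        entries = [(c, q) for c, q in node.top if q != query]
        entries.append((count, query))
        # Highest count first; ties broken alphabetically for stable output.
        entries.sort(key=lambda e: (-e[0], e[1]))
        node.top = entries[: self.k]

    def suggest(self, prefix: str) -> List[str]:
        """Walk the prefix and return the stored completions, most popular first."""
        node = self.root
        for ch in prefix:
            node = node.children.get(ch)
            if node is None:
                return []
        return [q for _, q in node.top]


trie = Trie(k=2)
for query, count in [("google maps", 50), ("google mail", 80),
                     ("google meet", 30), ("go lang", 90)]:
    trie.insert(query, count)
print(trie.suggest("goo"))
print(trie.suggest("go"))
```

Storing the top completions at each node trades extra memory for lookup time proportional to the prefix length, which suits a read-heavy workload like autocomplete.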

7. Snapshots Database:

- The snapshots database stores periodic snapshots or backups of the system’s data for disaster recovery, backup, and archival purposes.

- It ensures data integrity and provides a fallback mechanism in case of data loss or corruption.

- In this design, the snapshots database ensures data durability and availability, enabling the system to recover from failures and maintain consistent data across different components.
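As a rough illustration of snapshot and recovery, the sketch below writes query counts to a JSON file and reads them back. The file-based storage, the function names, and the data layout are assumptions; a real snapshots database would be a separate durable store. The temporary-file-then-rename step keeps a crash during writing from leaving a half-written snapshot in place.

```python
import json
import os
import tempfile
from typing import Dict


def save_snapshot(counts: Dict[str, int], path: str) -> None:
    """Write the snapshot to a temporary file, then atomically move it into place."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as handle:
            json.dump(counts, handle)
            handle.flush()
            os.fsync(handle.fileno())   # make sure the bytes reach the disk
        os.replace(tmp_path, path)      # atomic rename over any older snapshot
    except BaseException:
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise


def load_snapshot(path: str) -> Dict[str, int]:
    """Restore query counts from a previously saved snapshot."""
    with open(path, encoding="utf-8") as handle:
        return json.load(handle)


# Hypothetical usage with a throwaway directory.
with tempfile.TemporaryDirectory() as workdir:
    snapshot_path = os.path.join(workdir, "trie-snapshot.json")
    save_snapshot({"google maps": 50, "google mail": 80}, snapshot_path)
    restored = load_snapshot(snapshot_path)
    print(restored)
```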

8. Zookeeper:

- Zookeeper is a centralized service for maintaining configuration information, providing distributed synchronization, and facilitating coordination among distributed systems.

- It helps manage distributed resources, elect leaders, and maintain consensus in a distributed environment.

- In this design, Zookeeper coordinates the distributed components, such as load balancers, suggestion services, and data servers, and keeps them consistent so that they work together seamlessly and maintain system integrity.
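Leader election is commonly built on a coordination service by giving each participant an increasing sequence number and treating the lowest live number as the leader. The sketch below simulates that idea entirely in memory. It is not a Zookeeper client and does not use any Zookeeper API; the class, method names, and node names are hypothetical.

```python
from typing import Dict, Optional


class ElectionRegistry:
    """In-memory stand-in for a coordination service used for leader election."""

    def __init__(self) -> None:
        self._sequence = 0
        self._members: Dict[int, str] = {}  # sequence number -> node name

    def join(self, name: str) -> int:
        """Register a node and return its sequence number (its election ticket)."""
        self._sequence += 1
        self._members[self._sequence] = name
        return self._sequence

    def leave(self, ticket: int) -> None:
        """Remove a node, for example when it crashes or its session expires."""
        self._members.pop(ticket, None)

    def leader(self) -> Optional[str]:
        """The live node with the lowest sequence number is the leader."""
        if not self._members:
            return None
        return self._members[min(self._members)]


registry = ElectionRegistry()
ticket_a = registry.join("trie-server-a")
ticket_b = registry.join("trie-server-b")
print(registry.leader())
registry.leave(ticket_a)      # the current leader goes away
print(registry.leader())
```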

Scalability for Google’s Search Autocomplete

More people using the system means more traffic. To handle the extra load, the system can add more servers. These servers help spread out the traffic. Load balancers make sure the traffic is shared evenly across all servers. The system also stores data that people ask for often. Storing this data means the servers don’t have to get it from storage every time. Separate databases and microservices also let the system easily grow as more people use it.

Scalability in Google’s search autocomplete is achieved through:

- Horizontal Scaling: Adding more servers spreads the traffic load across them. Put simply, extra machines are added to cope with a large number of people using the website or app.

- Load Balancers: Load balancers distribute incoming requests evenly so that no single server is overloaded.

- Caching: Frequently used data is stored temporarily in a cache. This reduces the database workload and makes responses faster.

- Distributed Databases and Microservices: Breaking the application into small services that each handle a specific task (microservices) lets each part scale independently. Databases are also distributed across machines for efficient scaling.

- Asynchronous Processing and Message Queues: Time-consuming jobs are pushed to separate queues and processed in the background, so the main system stays responsive instead of hanging or crashing.

- Auto-scaling: Resources such as servers are added or removed automatically based on real-time demand, which optimizes both performance and cost.
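The auto-scaling idea can be made concrete with a simple sizing rule: divide the current load by the load one instance can carry at a target utilization, then clamp the result to configured limits. The per-instance capacity, the utilization target, and the limits below are assumed numbers for illustration, not measured values.

```python
import math


def desired_instances(current_qps: float, qps_per_instance: float,
                      target_utilization: float = 0.7,
                      min_instances: int = 2, max_instances: int = 100) -> int:
    """Return how many instances are needed to serve current_qps with headroom."""
    if qps_per_instance <= 0 or not 0 < target_utilization <= 1:
        raise ValueError("capacity must be positive and utilization in (0, 1]")
    # Each instance is only loaded up to the target utilization to leave headroom.
    needed = math.ceil(current_qps / (qps_per_instance * target_utilization))
    # Never scale below the floor or above the ceiling.
    return max(min_instances, min(max_instances, needed))


# Using the estimated peak of about 104,167 queries/second and a hypothetical
# capacity of 5,000 queries/second per instance.
print(desired_instances(104_167, 5_000))
```

An auto-scaler would evaluate a rule like this periodically and add or remove instances behind the load balancer to match the result.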
