Getting Started with Google Actions

Last Updated: 03 Jan, 2023

Actions on Google is the developer platform for the Google Assistant. By creating actions, brands are able to bring their services to the Google Assistant. Some important terms related to Actions on Google:

Actions

An Action is an interaction that you build for the Google Assistant. To start a conversation, the user needs to invoke your Action through the Assistant. Users say or type a phrase like “Hey Google, talk to Google IO”. This tells the Assistant the name of the Action to talk to. Actions also make your services available through the Google Assistant on Google Home. Actions are similar to skills for Amazon Alexa but differ in a couple of important ways.

The key difference between Actions on Google and Amazon’s Alexa skills is that users do not have to enable an Action before it is usable or even discoverable. Instead, users can access any Action at any time. Enabling a skill is the Alexa equivalent of downloading an app, whereas Actions are always available to everyone with no extra steps.

Dialogflow

Dialogflow is a natural language understanding platform used to design and integrate a conversational user interface into mobile apps, web applications, devices, bots, interactive voice response systems, and so on. Dialogflow translates end-user text or audio during a conversation to structured data that your apps and services can understand.

It gives users new ways to interact with your product by building engaging voice and text-based conversational interfaces, such as voice apps and chatbots, powered by AI. Connect with users on your website, mobile app, the Google Assistant, Amazon Alexa, Facebook Messenger, and other popular platforms and devices. You can use it to build interfaces (such as chatbots and conversational IVR) that enable natural and rich interactions between your users and your business. Dialogflow Enterprise Edition users have access to Google Cloud Support and a service level agreement (SLA) for production deployments.

Intent

Intents are messaging objects that describe how to do something. You can use intents in one of two ways:

- By providing the fulfillment for an intent.

- By requesting the fulfillment of an intent by the Google Assistant.

Intents contain training phrases that a user might say to our Action while interacting with it, so we can have multiple intents depending on the functionality of our Action. Whatever the user says is matched against the intents we created, and if a match is found, the message or action defined for that intent is performed. There are two types of intents:

- Default Intents

- Custom Intents

Whenever you create an agent, two default intents are created automatically, and you can modify them as required.

The default intents are:

- Default Welcome Intent: Contains a default welcome message for users of your Action. You can customize the message and add more utterances as training phrases in the intent.

- Default Fallback Intent: Contains default fallback messages that are shown to users when our Action cannot find a suitable intent matching the user’s query or utterance.

Custom Intents: Intents that we create to match user queries and show results (static messages, or dynamic data from a database or API), performing tasks in the background if required.
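To make the matching idea concrete, here is a minimal sketch in Python. It is not how Dialogflow matches intents internally (that uses machine learning); it only illustrates the flow described above, where an utterance is compared against training phrases and the fallback intent is used when nothing matches well enough. The `Find Person` intent, the training phrases, the `match_intent` helper, and the 0.5 threshold are all assumptions made for illustration.

```python
import re

# Hypothetical intents and training phrases, for illustration only.
INTENTS = {
    "Default Welcome Intent": ["hi", "hello", "hey there"],
    "Find Person": ["find someone to help with my car", "i need a mechanic"],
}
FALLBACK = "Default Fallback Intent"


def tokens(text):
    """Lowercase the text and return its words as a set."""
    return set(re.findall(r"[a-z']+", text.lower()))


def match_intent(utterance, threshold=0.5):
    """Return the intent whose training phrase best overlaps the utterance.

    Uses Jaccard similarity (shared words / all words) as a toy stand-in
    for real natural language understanding.
    """
    said = tokens(utterance)
    best_name, best_score = FALLBACK, 0.0
    for name, phrases in INTENTS.items():
        for phrase in phrases:
            words = tokens(phrase)
            union = said | words
            score = len(said & words) / len(union) if union else 0.0
            if score > best_score:
                best_name, best_score = name, score
    # Anything below the threshold is treated as "no suitable intent found".
    return best_name if best_score >= threshold else FALLBACK
```

With these sample phrases, a greeting such as "Hello" would land on the welcome intent, while an unrelated question would fall through to the fallback intent.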

Entity

Entities are parts of the user’s input that describe useful information that Dialogflow can extract and act on. For example, if the user inputs “I want to find someone to help with my car”, the intent would be to find a person, and the entity for the subject matter would be picked up as “car”. An entity represents a phrase in the text that is a known entity, such as a person, an organization, or a location. The API associates information, such as salience and mentions, with entities.
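The car example can be sketched as a simple lookup. This is only a toy illustration of the idea of an entity with values and synonyms, not Dialogflow's actual extraction logic. The `SUBJECT_ENTITY` table, its synonyms, and the `extract_subject` helper are hypothetical names introduced here.

```python
import re

# Hypothetical entity type: each value maps to the synonyms that trigger it.
SUBJECT_ENTITY = {
    "car": ["car", "vehicle", "automobile"],
    "computer": ["computer", "laptop", "pc"],
}


def extract_subject(utterance):
    """Return the first subject value whose synonym appears in the utterance."""
    words = re.findall(r"[a-z]+", utterance.lower())
    for value, synonyms in SUBJECT_ENTITY.items():
        if any(synonym in words for synonym in synonyms):
            return value
    return None  # No known entity value was mentioned.


subject = extract_subject("I want to find someone to help with my car")
```

Here `subject` holds the canonical value for the subject matter, which fulfillment code could then use to look up a suitable person.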

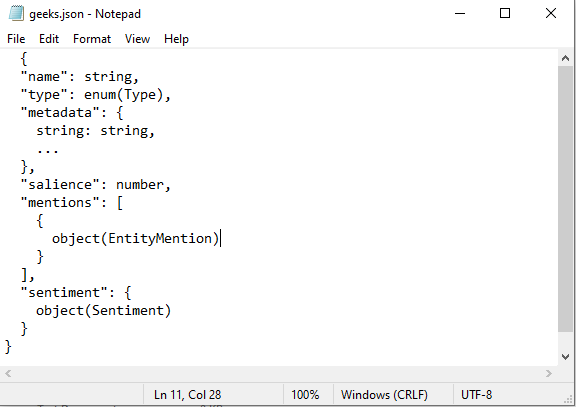

The sample JSON File:

Fields

- name: It is the string representation of the entity name.

- type: It represents the entity type.

- metadata: It is the Metadata associated with the entity.

- salience: The salience score associated with the entity in the [0, 1.0] range.

- mentions[]: The mentions of this entity in the input document. The API currently supports proper noun mentions.

- sentiment: It represents the sentiment object.
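As a rough illustration of how these fields fit together, the sketch below parses a small entity object in Python. The top-level field names come from the list above; every value, and the nested keys inside `mentions` and `sentiment`, are assumptions chosen for illustration rather than real API output. The `summarize_entity` helper is likewise hypothetical.

```python
import json

# Illustrative entity payload; values and nested keys are assumed, not real output.
SAMPLE = """
{
  "name": "Google",
  "type": "ORGANIZATION",
  "metadata": {},
  "salience": 0.42,
  "mentions": [
    {"text": {"content": "Google", "beginOffset": 0}, "type": "PROPER"}
  ],
  "sentiment": {"magnitude": 0.0, "score": 0.0}
}
"""


def summarize_entity(raw):
    """Parse an entity JSON string and return a few of its fields."""
    entity = json.loads(raw)
    salience = entity["salience"]
    # The salience score is documented above as lying in the [0, 1.0] range.
    if not 0.0 <= salience <= 1.0:
        raise ValueError("salience must be in the [0, 1.0] range")
    return {
        "name": entity["name"],
        "type": entity["type"],
        "salience": salience,
        "mention_count": len(entity["mentions"]),
    }


summary = summarize_entity(SAMPLE)
```

A higher salience value indicates that the entity is more central to the text, so a caller might sort entities by this field before acting on them.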

This was a very brief introduction to Actions on Google (AOG). I hope you find it useful. I will be publishing a series of articles on AOG in the coming days.
