Creating Files in HDFS using Python Snakebite

Last Updated: 14 Oct, 2020

Hadoop is a popular big data framework written in Java, but you do not have to use Java to work with it. Other programming languages such as Python and C++ can also be used. C++ code for Hadoop can be written with the Hadoop Pipes API, which uses sockets to communicate with the TaskTracker.

Python can also be used to write code for Hadoop. Snakebite is a popular library for communicating with HDFS. With the Python client library provided by the Snakebite package, we can easily write Python code that works on HDFS. The library uses protobuf messages to communicate directly with the NameNode, so it works with HDFS without making a system call to hdfs dfs.

Prerequisite: The Snakebite library must be installed.

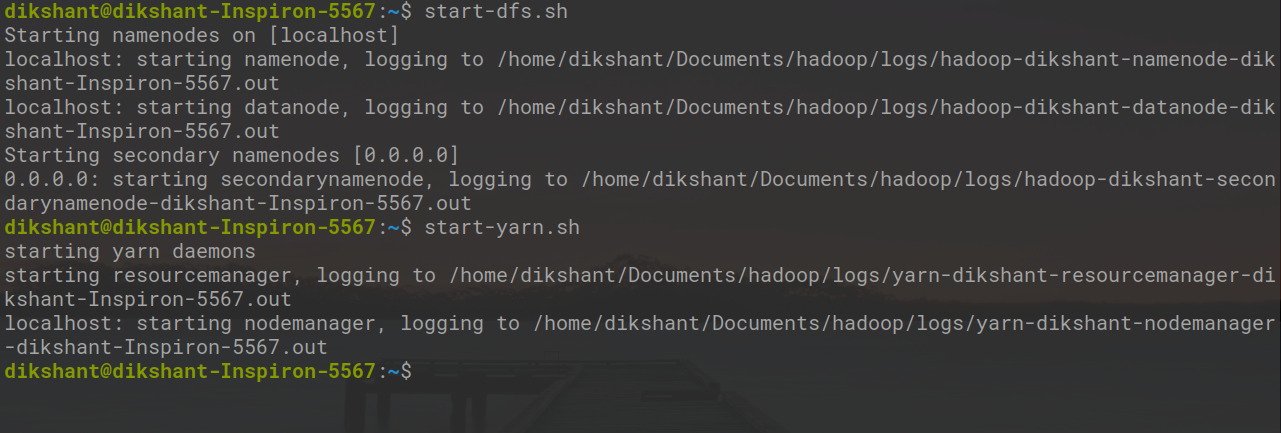

Make sure Hadoop is running. If it is not, start all the daemons with the commands below.

start-dfs.sh # start the NameNode, DataNode, and Secondary NameNode

start-yarn.sh # start the ResourceManager and NodeManager
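Before running any Snakebite code, it can help to confirm that the NameNode is actually accepting connections. The following is a minimal sketch, not part of Snakebite: the helper name namenode_reachable is hypothetical, and the default host and port (localhost, 9000) are assumptions taken from the client code used later in this article. Adjust them to match your own configuration.

```python
import socket


def namenode_reachable(host='localhost', port=9000, timeout=2.0):
    """Return True if a TCP connection to host:port can be opened.

    Hypothetical helper: it only checks that something is listening on
    the port, not that the listener is really an HDFS NameNode.
    """
    try:
        sock = socket.create_connection((host, port), timeout=timeout)
    except (socket.error, socket.timeout):
        # Connection refused, host unreachable, or timed out.
        return False
    sock.close()
    return True
```

If namenode_reachable() returns False, the daemons are probably not running or the NameNode listens on a different port.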

Task: Create directories in HDFS with the mkdir() method of the snakebite package.

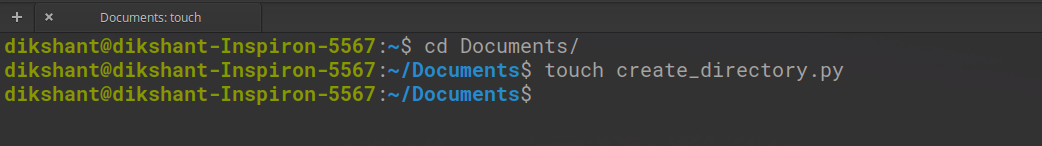

Step 1: Create a file named create_directory.py at the desired location in your local file system.

cd Documents/ # change to the Documents directory (choose any location you like)

touch create_directory.py # touch creates an empty file in a Linux environment

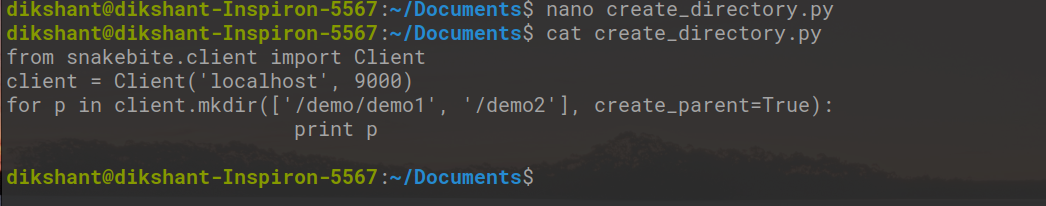

Step 2: Write the code below in the create_directory.py file.

Python

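from snakebite.client import Client

# Connect to the NameNode (host and RPC port of your HDFS setup)
client = Client('localhost', 9000)

# mkdir() returns a generator, so iterate over it to create the directories
for p in client.mkdir(['/demo/demo1', '/demo2'], create_parent=True):
    print(p)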

The mkdir() method takes a list of the directory paths we want to create. create_parent=True ensures that any missing parent directory is created first. In our case, the demo directory is created first, and then demo1 is created inside it.
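Instead of only printing each result, the dictionaries yielded by mkdir() can be sorted into successes and failures. The sketch below assumes each yielded dictionary carries 'path' and 'result' keys, as in the output of this example. The helper name create_directories is hypothetical, and client can be any object whose mkdir() behaves like the Snakebite client's.

```python
def create_directories(client, paths):
    """Call client.mkdir() and split the results into created and failed paths.

    Assumes mkdir() yields one dict per path with 'path' and 'result' keys.
    """
    created, failed = [], []
    # The generator must be consumed for the directories to be created.
    for entry in client.mkdir(paths, create_parent=True):
        if entry.get('result'):
            created.append(entry['path'])
        else:
            failed.append(entry['path'])
    return created, failed
```

With the client from this article, it could be called as created, failed = create_directories(client, ['/demo/demo1', '/demo2']).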

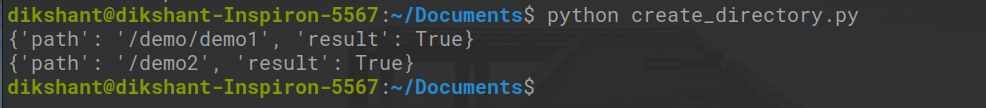

Step 3: Run the create_directory.py file and observe the result.

python create_directory.py # this creates the directories passed to mkdir()

In the above image, 'result': True indicates that the directory was created successfully.

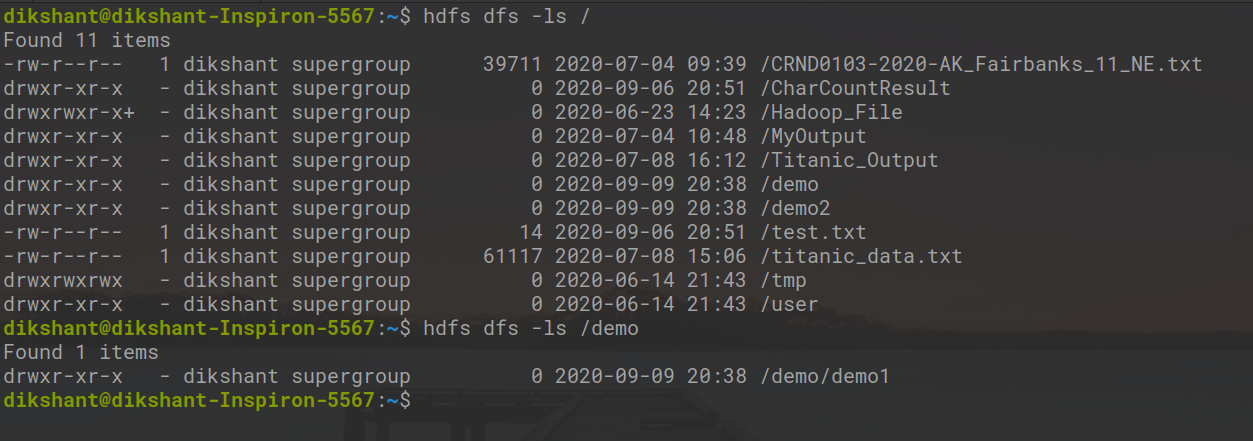

Step 4: We can check whether the directories were created, either by browsing HDFS manually or with the commands below.

hdfs dfs -ls / # list everything in the root folder

hdfs dfs -ls /demo # list everything in the demo folder

In the above image, we can observe that we have successfully created all the directories.
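The same check can be done from Python without leaving the script. This is a minimal sketch that assumes the client's ls() method yields one dictionary per entry, with a 'path' key and a 'file_type' key equal to 'd' for directories. The helper name list_directories is hypothetical.

```python
def list_directories(client, parent='/'):
    """Return the sorted paths of the directories directly under `parent`.

    Assumes client.ls() yields dicts with 'path' and 'file_type' keys,
    where 'file_type' is 'd' for a directory.
    """
    return sorted(
        entry['path']
        for entry in client.ls([parent])
        if entry.get('file_type') == 'd'
    )
```

After running create_directory.py, list_directories(client, '/') would be expected to include /demo and /demo2, and list_directories(client, '/demo') to include /demo/demo1.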
