How Does the volatile Qualifier of C Work in a Computing System

Last Updated: 28 Jul, 2020

Prerequisite: Computing systems, Processing unit

Processing Unit:

- Processing units also have some small memory called registers.

- For the best system performance, the interface between the processor (processing unit) and memory should run at the same speed as the processor.

Memory:

There are two types of memory, SRAM and DRAM. SRAM is fast but costly, and DRAM is cheap but slow. In the early days SRAM was used as main memory. As memory sizes grew, DRAM usage increased, and today main memory is almost always DRAM.

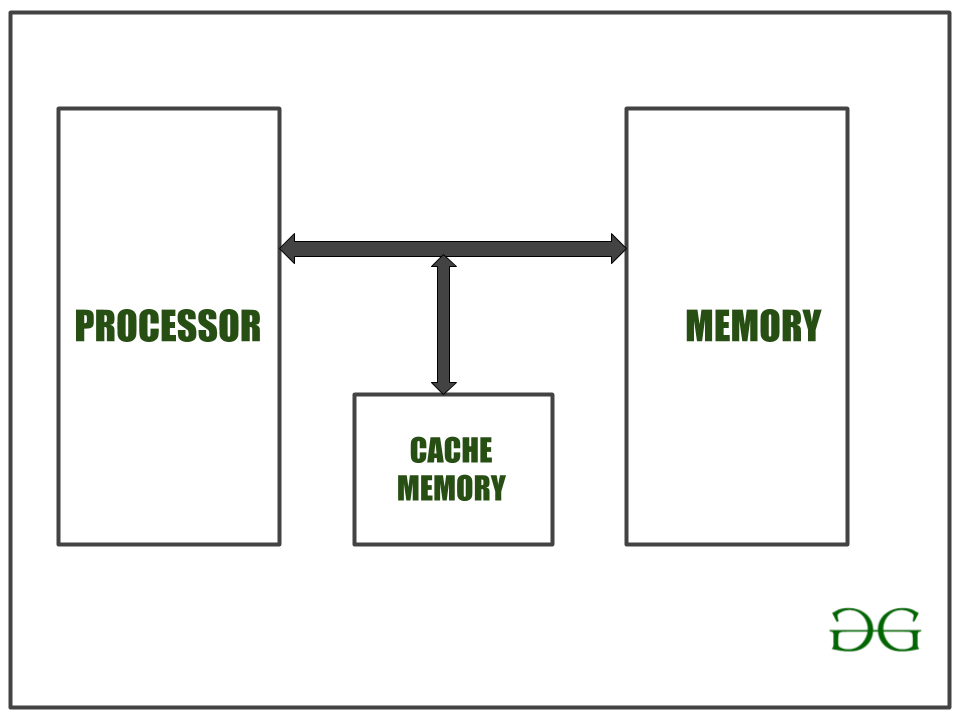

Over time, processor speed increased but memory speed did not keep pace, so overall system performance did not improve even though the processor was faster. To solve this problem, designers introduced a high-speed SRAM between the processor and main memory.

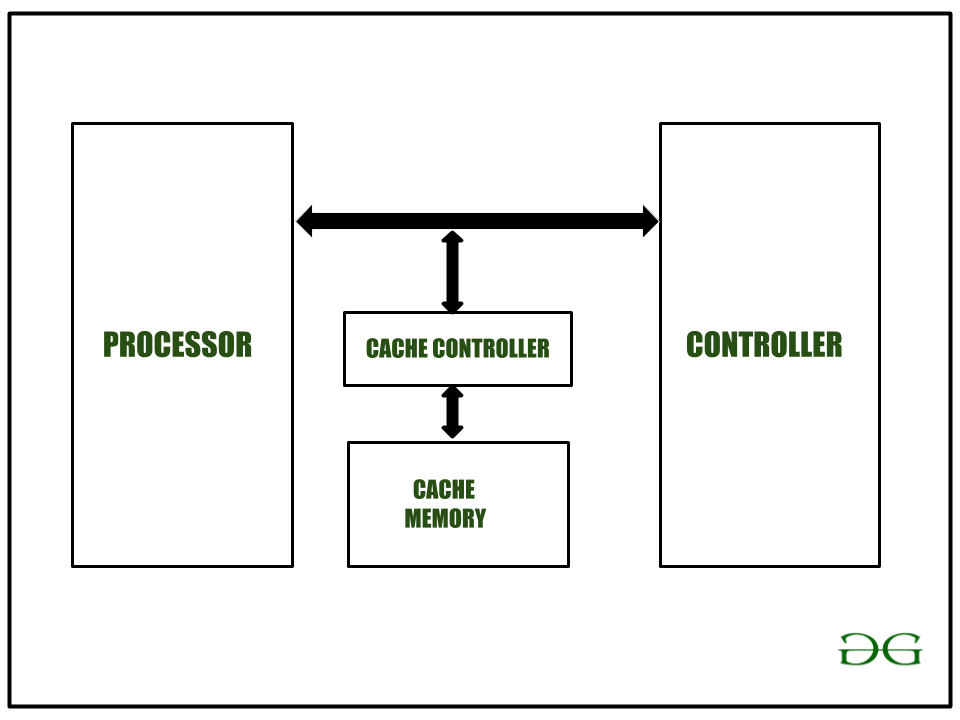

This memory is called the cache. Because SRAM is expensive, the cache is small and holds only the most recently accessed contents of main memory. A cache controller is included in the design to store those recent accesses and to check whether requested content is already available in the cache.

The processor can disable the cache controller as a whole or it can instruct the Cache controller to cache or not to cache any specific block of memory.

Below is an example of a program whose data is stored in memory:

```c
#include <stdio.h>

int main()
{
    int i, j;
    j = 0;
    for (i = 0; i < 100; i++) {
        j = j + i;
    }
    return 0;
}
```

Explanation:

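In the above code, the loop body executes 100 times. Conceptually, the first access fetches the variables from main memory and the remaining 99 accesses are served from cache memory, which improves performance. An access served from the cache is called a cache hit, and an access that has to go to main memory is called a cache miss. In this simplified picture the hit rate is 99% and the miss rate is 1%. (An optimizing compiler would usually keep i and j in registers, so treat this as a model of memory behavior rather than a measurement.) The cache controller is enabled by default to improve performance.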
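The 99% figure can be reproduced with a toy model. The sketch below is not how a real cache controller works; it assumes a hypothetical cache that holds exactly one address, and the names `toy_cache`, `toy_cache_access`, and `toy_hit_rate_percent` are invented for illustration.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical toy model: a cache that can hold exactly one address. */
struct toy_cache {
    bool      valid;    /* false until the first fill */
    uintptr_t address;  /* address currently held in the cache */
    unsigned  hits;     /* accesses served from the cache */
    unsigned  misses;   /* accesses that had to go to main memory */
};

/* Record one access. Returns true on a hit; on a miss, fills the cache. */
bool toy_cache_access(struct toy_cache *c, uintptr_t address)
{
    if (c->valid && c->address == address) {
        c->hits++;
        return true;
    }
    c->valid = true;
    c->address = address;
    c->misses++;
    return false;
}

/* Access the same address `count` times; return the hit rate in percent. */
unsigned toy_hit_rate_percent(uintptr_t address, unsigned count)
{
    struct toy_cache c = { false, 0, 0, 0 };
    unsigned n;

    for (n = 0; n < count; n++) {
        toy_cache_access(&c, address);
    }
    return count ? (c.hits * 100u) / count : 0u;
}
```

Calling `toy_hit_rate_percent(0x1000, 100)` models the loop above: the first access misses and the other 99 hit.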

Let us discuss two cases:

Case 1: The main memory is shared between the Processor and a Controller.

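In this case, if caching is enabled for the block of memory shared by the processor and the controller, updates that the controller writes to that block will not be visible to the processor, because the processor keeps reading the stale copy held in the cache. The shared memory area therefore has to be excluded from caching, and the processor must instruct the cache controller accordingly. In C, variables placed in this area are declared with the volatile qualifier, which tells the compiler to perform every read and write as a real memory access instead of reusing a value it already holds in a register. The qualifier by itself does not configure the hardware cache; it is used together with the non-cached setting for the region.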
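As a rough sketch of Case 1, the code below polls a shared status word through a volatile pointer. The names `shared_status` and `poll_shared_status` are hypothetical, and on a hosted system the variable is ordinary memory standing in for the shared region; marking that region as non-cached is platform-specific and is not shown.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical status word living in memory shared with a controller. */
volatile uint32_t shared_status = 0;

/*
 * Poll the status word up to max_polls times.
 * Returns 1 as soon as a nonzero value is observed, 0 if it never appears.
 * Because the pointee is volatile, the compiler must perform a fresh read
 * on every iteration instead of reusing a value kept in a register.
 */
int poll_shared_status(volatile uint32_t *status, unsigned max_polls)
{
    unsigned n;

    assert(status != NULL);
    for (n = 0; n < max_polls; n++) {
        if (*status != 0u) {
            return 1;
        }
    }
    return 0;
}
```

Without volatile, a compiler would be allowed to read `*status` once and reuse that value for every iteration of the loop.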

Case 2: The processor is reading & writing the controller registers.

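In this case, the controller registers can change because of the controller's own internal operation, and the processor must see the new values whenever it reads them. The memory locations of these controller registers therefore also need to be excluded from caching. Here too the volatile qualifier is used, so that the compiler does not remove or merge the register reads and writes.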
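A minimal sketch of Case 2 follows. The register layout `ctrl_regs`, the `CTRL_STATUS_READY` bit, and the function `ctrl_read_data` are assumptions made for illustration; a real controller's register map comes from its datasheet, and on real hardware the struct pointer would refer to the controller's memory-mapped address.

```c
#include <stdint.h>

/* Hypothetical register block of a controller. */
struct ctrl_regs {
    volatile uint32_t status;  /* changed by the controller on its own */
    volatile uint32_t data;    /* value produced by the controller */
};

#define CTRL_STATUS_READY 0x1u  /* hypothetical "data ready" bit */

/*
 * Read the data register if the controller reports it is ready.
 * Returns 1 and stores the value in *out on success, 0 otherwise.
 * The volatile members force a real read of status and data on each call.
 */
int ctrl_read_data(struct ctrl_regs *regs, uint32_t *out)
{
    if ((regs->status & CTRL_STATUS_READY) == 0u) {
        return 0;
    }
    *out = regs->data;
    return 1;
}
```

For testing on a hosted system, `regs` can point at an ordinary `struct ctrl_regs` variable that plays the role of the hardware.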
