Scrapy – Command Line Tools

Last Updated: 29 Jun, 2021

Prerequisite: Implementing Web Scraping in Python with Scrapy

Scrapy is a Python framework for web scraping, that is, crawling web pages and extracting their content. It uses spiders, which crawl through pages and pull out the content matched by the selectors you specify. This makes it a handy tool for extracting data from a web page with different selectors.

There are two ways to create a spider and make it crawl in Scrapy. We can create a project directory, write the spider code in one of its files and run it with a command, or we can interact with the spider through Scrapy's command-line shell. To work in the shell, we should be familiar with Scrapy's command-line tools.

Scrapy's command-line tool provides commands for many purposes, such as creating projects and spiders, running crawls and inspecting settings. Let's study each command one by one.

Creating a Scrapy Project

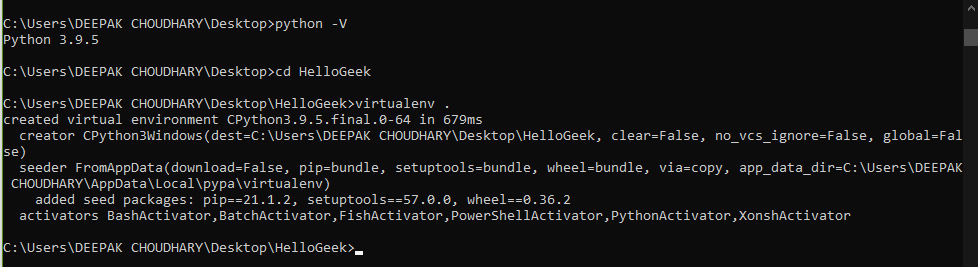

First, check whether Python is installed on your system. Then create a virtual environment.

Example:

Checking Python and Creating Virtualenv for scrapy directory.

We use a virtual environment so that Scrapy and its many dependencies are installed into an isolated folder instead of into the global Python installation. This avoids version conflicts with other projects, and the whole environment can simply be deleted if you stop using Scrapy.

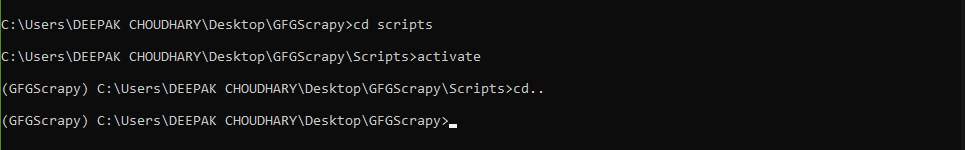

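To activate the virtual environment we just created on Windows, first enter its Scripts folder, run the activate command, and then return to the environment folder:

cd Scripts

activate

cd ..

On Linux or macOS, run source bin/activate from the environment folder instead.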

Example:

Activating the virtual environment

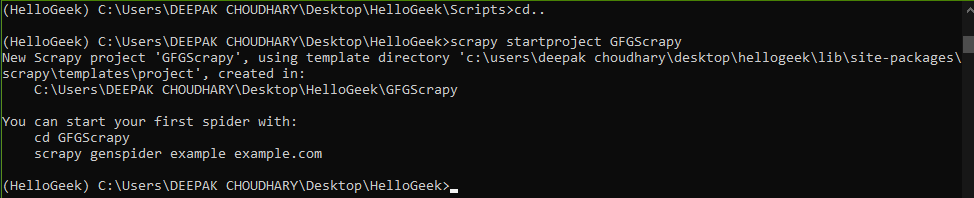
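If you prefer to script this step, Python's standard-library venv module can create the same kind of environment. The sketch below is a minimal example under that assumption (the helper name create_env is hypothetical). It also shows why the activate script lives in Scripts on Windows but in bin on Linux and macOS.

```python
import os
import venv
from pathlib import Path


def create_env(env_dir: str, with_pip: bool = True) -> Path:
    """Create a virtual environment and return the path of its activate script."""
    # Roughly what `python -m venv <env_dir>` does; like the CLI, use symlinks on POSIX
    venv.create(env_dir, with_pip=with_pip, symlinks=(os.name != "nt"))
    # Windows puts the activation scripts in "Scripts"; Linux and macOS use "bin"
    scripts_dir = "Scripts" if os.name == "nt" else "bin"
    return Path(env_dir) / scripts_dir / "activate"
```

For example, create_env("scrapy_env") (a hypothetical folder name) creates the environment with pip installed and returns the path of the script to activate from your shell.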

Next, install Scrapy with pip, then create a Scrapy project named GFGScrapy:

# Install Scrapy inside the virtual environment created above

pip install scrapy

# Start a new Scrapy project named GFGScrapy

scrapy startproject GFGScrapy

Example:

Creating the scrapy project
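To confirm that Scrapy was installed into the virtual environment rather than into the global Python, a quick check like the one below can help. It is a minimal sketch using only the standard library (the function names are hypothetical): for environments created with venv (and recent virtualenv releases), sys.prefix differs from sys.base_prefix, and importlib.metadata reports the installed Scrapy version.

```python
import sys
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def in_virtualenv() -> bool:
    # Inside a venv, sys.prefix points at the environment folder,
    # while sys.base_prefix still points at the base Python installation
    return sys.prefix != sys.base_prefix


def installed_scrapy_version() -> Optional[str]:
    # Read the version from the installed package metadata; None if Scrapy is missing
    try:
        return version("scrapy")
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    print("Inside a virtual environment:", in_virtualenv())
    print("Scrapy version:", installed_scrapy_version() or "not installed")
```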

Now we're going to create a spider. The genspider command takes a name for the spider and the domain of the site we want to scrape.

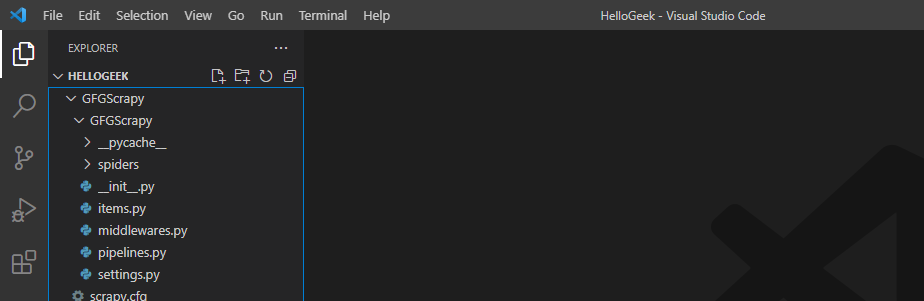

Directory structure

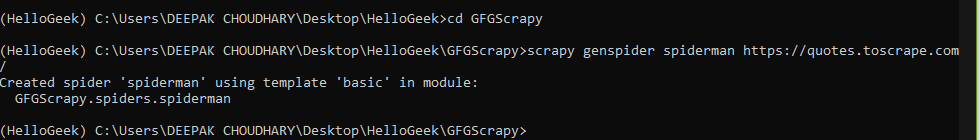
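For reference, the default template used by scrapy startproject GFGScrapy creates a scrapy.cfg file and a GFGScrapy package containing settings.py, items.py, middlewares.py, pipelines.py and an empty spiders package. The sketch below checks a project folder for these files. The helper name missing_project_files is hypothetical, and the file list assumes the default project template.

```python
from pathlib import Path
from typing import List

# Files created by the default `scrapy startproject <name>` template
EXPECTED = [
    "scrapy.cfg",
    "{name}/__init__.py",
    "{name}/items.py",
    "{name}/middlewares.py",
    "{name}/pipelines.py",
    "{name}/settings.py",
    "{name}/spiders/__init__.py",
]


def missing_project_files(root: str, name: str) -> List[str]:
    """Return the expected project files that are not present under root."""
    base = Path(root)
    paths = [rel.format(name=name) for rel in EXPECTED]
    return [p for p in paths if not (base / p).is_file()]


if __name__ == "__main__":
    # Run from the folder where `scrapy startproject GFGScrapy` was executed
    print(missing_project_files("GFGScrapy", "GFGScrapy") or "project layout looks complete")
```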

# Move into the folder created by startproject

cd GFGScrapy

# Create a spider named spiderman for quotes.toscrape.com

scrapy genspider spiderman quotes.toscrape.com

This creates a spider named spiderman that crawls the site above.

Example:

Creating the spiders

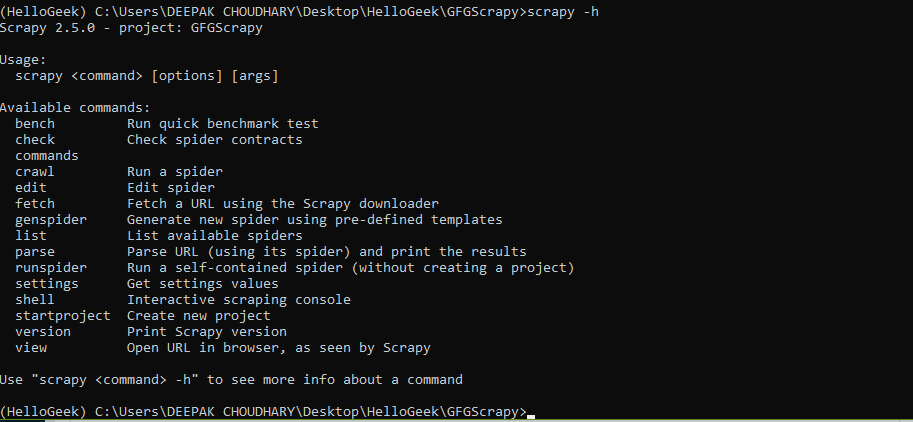

To see the list of available commands, or general help about Scrapy, type the following command.

Syntax:

scrapy -h

For a more detailed description of a particular command, type:

Syntax:

scrapy <command> -h

Example:

These are the command-line tools available in Scrapy

The commands and their uses are discussed below:

- bench: Runs a quick benchmark test. Scrapy starts a local HTTP server and crawls it as fast as possible, which gives a rough idea of how well Scrapy performs on your hardware.

Syntax:

scrapy bench

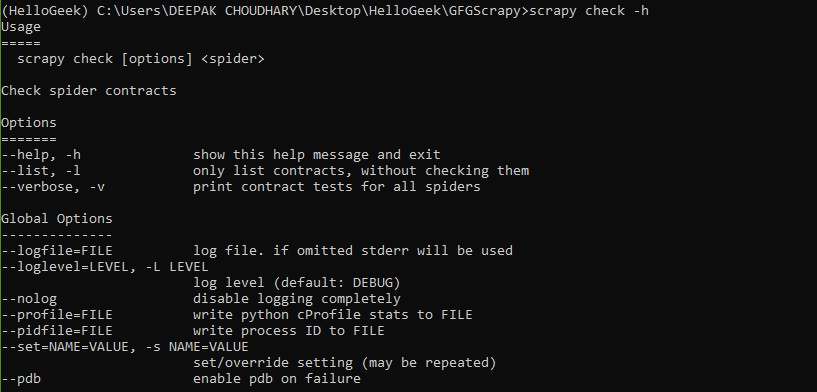

- check: Checks the spider contracts.

Syntax:

scrapy check [options] <spider>

Example:

Scrapy check command

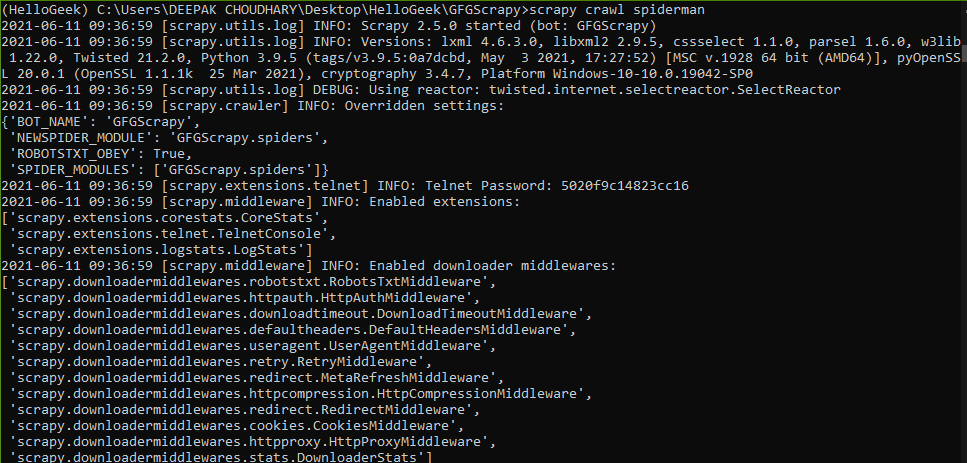

- crawl: Starts a crawl with the named spider, which requests its start URLs and collects the data extracted by its callbacks.

Syntax:

scrapy crawl spiderman

Example:

Spider crawling through the web page

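- edit and genspider: edit opens an existing spider in the editor defined by the EDITOR environment variable (or the EDITOR setting), and genspider creates a new spider from a template.

Syntax:

scrapy edit <spider>

scrapy genspider [options] <name> <domain>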

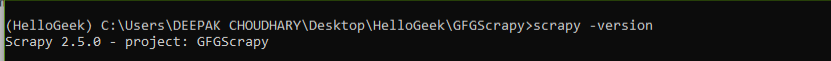

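- version and view: version prints the installed Scrapy version, and view shows a page as Scrapy sees it.

Syntax:

scrapy version

Add -v to also print the versions of Scrapy's main dependencies.

The view command downloads the given URL with Scrapy and opens the downloaded HTML in a new browser tab, so you can check what the spider actually receives (for example, whether content rendered by JavaScript is missing).

Syntax:

scrapy view <url>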

Example:

Version checking

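- list, parse, and settings: list prints the names of all spiders in the project, parse fetches a URL and processes it with a spider callback (parse, unless another one is given with --callback) so you can inspect the items and requests it returns, and settings prints the value of a setting, for example scrapy settings --get BOT_NAME. It reads settings; it does not change settings.py.

Syntax:

scrapy list

scrapy parse <url> [options]

scrapy settings [options]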

Custom commands

Apart from these built-in commands, Scrapy also lets you create your own custom commands, as explained below:

In settings.py, the COMMANDS_MODULE setting tells Scrapy which module to search for custom commands.

Syntax:

COMMANDS_MODULE = 'GFGScrapy.commands'

The format is <project_name>.commands, where commands is the folder (Python package) that holds one .py file per command. Let's create a custom command that runs the project's spider.

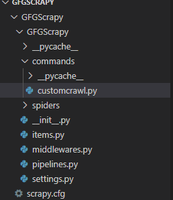

- First, create a commands folder in the same directory as settings.py, and add an empty __init__.py file to it so that Python treats the folder as a package.

Directory structure

- Next, create a file named customcrawl.py inside the commands folder. This file defines what the command does, and its name becomes the command name, so the command is run as scrapy customcrawl. The file contains a class named Command that inherits from ScrapyCommand and implements three methods: syntax(), short_desc() and run().

Program:

Python3

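```python
from scrapy.commands import ScrapyCommand
from scrapy.exceptions import UsageError


class Command(ScrapyCommand):
    # This command only works inside a Scrapy project
    requires_project = True

    def syntax(self):
        # Shown in the usage line of `scrapy customcrawl -h`
        return '[options]'

    def short_desc(self):
        # Shown next to the command name in `scrapy -h`
        return 'Runs the spider using custom command'

    def run(self, args, opts):
        # Names of all spiders in the project's SPIDER_MODULES
        spiders = self.crawler_process.spider_loader.list()
        if not spiders:
            raise UsageError('No spiders found in this project')
        # Crawl with the first spider found (spiderman in this project)
        self.crawler_process.crawl(spiders[0])
        self.crawler_process.start()
```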

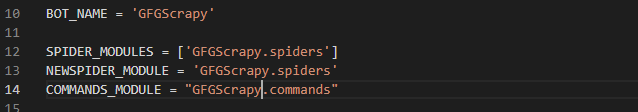

- Now that the commands folder and the customcrawl.py file exist, Scrapy needs to know where to find them. In settings.py, set COMMANDS_MODULE to the commands package, as shown:

settings.py file

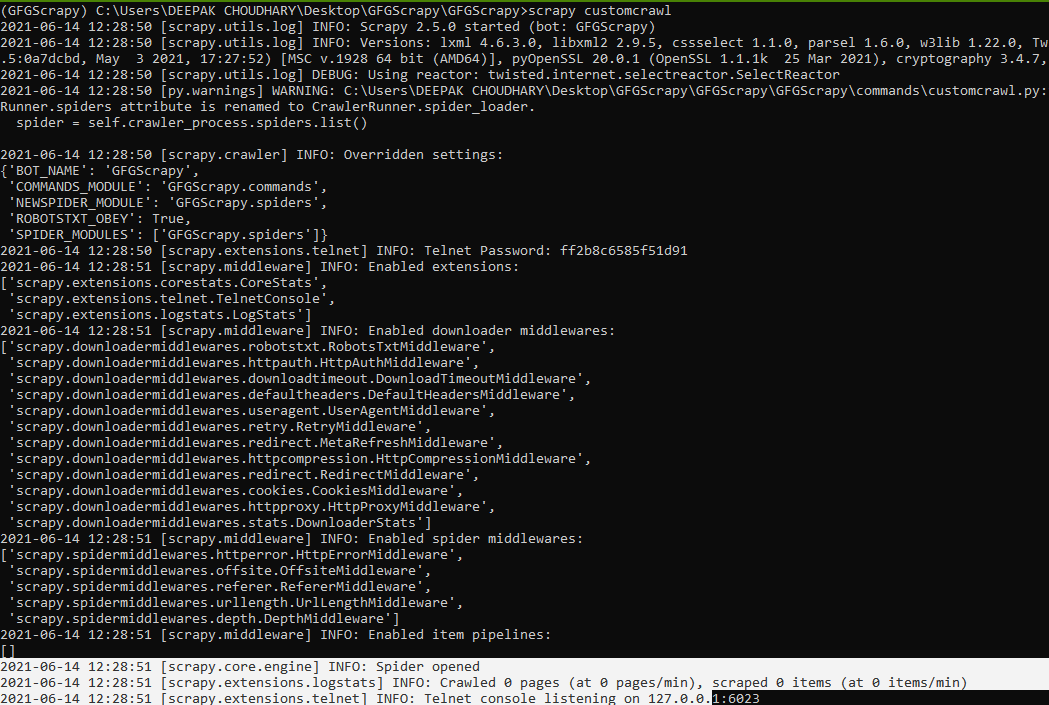

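- Now it's time to see the output. The command name is the name of the file inside the commands folder, without the .py extension.

Syntax:

scrapy <custom_command_file_name>

For this example, run scrapy customcrawl.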

Example:

Our custom command runs successfully

We have seen how to define a custom command and use it alongside the built-in commands. Commands can also live in a separate Python package and be registered through the scrapy.commands entry point in that package's setup.py, which makes them available wherever the package is installed.
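In a separate package, the registration is an entry_points argument passed to setup() in setup.py, for example entry_points={"scrapy.commands": ["customcrawl=gfg_commands.customcrawl:Command"]}, where gfg_commands is a hypothetical package name. Once that package is installed, Scrapy discovers the entry point. The standard-library sketch below (the function name is hypothetical) lists the commands that installed packages have registered under that group, which is a quick way to confirm the registration worked.

```python
from importlib.metadata import entry_points
from typing import Dict


def registered_scrapy_commands() -> Dict[str, str]:
    """Map command name -> "module:Class" for commands registered by installed packages."""
    eps = entry_points()
    if hasattr(eps, "select"):  # Python 3.10+
        group = eps.select(group="scrapy.commands")
    else:  # Python 3.8 and 3.9 return a dict of groups
        group = eps.get("scrapy.commands", [])
    return {ep.name: ep.value for ep in group}


if __name__ == "__main__":
    print(registered_scrapy_commands())
```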
