Convert PySpark dataframe to list of tuples

Last Updated :

18 Jul, 2021

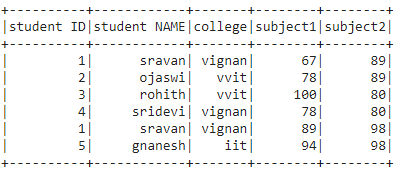

In this article, we are going to convert the Pyspark dataframe into a list of tuples.

The rows in the dataframe are stored in the list separated by a comma operator. So we are going to create a dataframe by using a nested list

Creating Dataframe for demonstration:

Python3

import pyspark

from pyspark.sql import SparkSession

spark = SparkSession.builder.appName('sparkdf').getOrCreate()

data = [["1", "sravan", "vignan", 67, 89],

["2", "ojaswi", "vvit", 78, 89],

["3", "rohith", "vvit", 100, 80],

["4", "sridevi", "vignan", 78, 80],

["1", "sravan", "vignan", 89, 98],

["5", "gnanesh", "iit", 94, 98]]

columns = ['student ID', 'student NAME',

'college', 'subject1', 'subject2']

dataframe = spark.createDataFrame(data, columns)

dataframe.show()

|

Output:

Method 1: Using collect() method

By converting each row into a tuple and by appending the rows to a list, we can get the data in the list of tuple format.

tuple(): It is used to convert data into tuple format

Syntax: tuple(rows)

Example: Converting dataframe into a list of tuples.

Python3

l=[]

for i in dataframe.collect():

l.append(tuple(i))

print(l)

|

Output:

[(‘1’, ‘sravan’, ‘vignan’, 67, 89), (‘2’, ‘ojaswi’, ‘vvit’, 78, 89),

(‘3’, ‘rohith’, ‘vvit’, 100, 80), (‘4’, ‘sridevi’, ‘vignan’, 78, 80),

(‘1’, ‘sravan’, ‘vignan’, 89, 98), (‘5’, ‘gnanesh’, ‘iit’, 94, 98)]

Method 2: Using tuple() with rdd

Convert rdd to a tuple using map() function, we are using map() and tuple() functions to convert from rdd

Syntax: rdd.map(tuple)

Example: Using RDD

Python3

rdd = dataframe.rdd

data = rdd.map(tuple)

data.collect()

|

Output:

[('1', 'sravan', 'vignan', 67, 89),

('2', 'ojaswi', 'vvit', 78, 89),

('3', 'rohith', 'vvit', 100, 80),

('4', 'sridevi', 'vignan', 78, 80),

('1', 'sravan', 'vignan', 89, 98),

('5', 'gnanesh', 'iit', 94, 98)]

Share your thoughts in the comments

Please Login to comment...