Virtually Indexed Physically Tagged (VIPT) Cache

Last Updated: 07 Oct, 2021

Prerequisites:

- Cache Memory

- Memory Access

- Paging

- Translation Lookaside Buffer

Revisiting Cache Access

When a CPU generates a physical address, main memory is accessed only after the cache has been checked. The cache is searched using the tag and index (set) bits of the address, as shown here. A cache whose tag and index bits both come from the physical address is called a Physically Indexed, Physically Tagged (PIPT) cache. On a cache hit, the memory access time is reduced significantly.

Cache Hit

Average Memory Access Time = Hit Time + Miss Rate * Miss Penalty

Here, Hit Time = Cache Hit Time = the time it takes to access a memory location in the cache

Miss Penalty = the time it takes to load a cache line from main memory into the cache

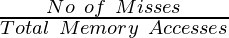

Miss Rate = (number of cache misses) / (total number of memory accesses), measured over a long period of time
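The formula above can be tried out with a small sketch. The function name and the latency figures are hypothetical and chosen only for illustration; they do not come from any particular processor.

```python
def average_memory_access_time(hit_time_ns, miss_rate, miss_penalty_ns):
    """AMAT = hit time + miss rate * miss penalty (times in nanoseconds)."""
    if not 0.0 <= miss_rate <= 1.0:
        raise ValueError("miss_rate must be between 0 and 1")
    return hit_time_ns + miss_rate * miss_penalty_ns


# Assumed figures: 1 ns hit time, 5% miss rate, 100 ns miss penalty.
amat = average_memory_access_time(1.0, 0.05, 100.0)
print(f"AMAT = {amat:.1f} ns")
```

Even with a low miss rate, the miss penalty dominates the result, which is why reducing the hit time and the miss rate both matter.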

In today's systems, the CPU generates a logical address (also called a virtual address) for a process. With a PIPT cache, the logical address must be converted to its corresponding physical address before the cache can be searched for data. This conversion from logical address to physical address includes the following steps:

- Look up the logical address in the Translation Lookaside Buffer (TLB). If it is present in the TLB, get the physical address of the page from the TLB.

- If it is not present, access the page table in physical memory and use it to obtain the physical address.

All of this time adds to the hit time. Hence, hit time = TLB latency + cache latency.
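The two translation steps above can be sketched as follows. This is a simplified model, not how an MMU is built: the TLB and the page table are plain dictionaries, a 4 KB page size is assumed, and the name `translate` is hypothetical.

```python
PAGE_SIZE = 4096  # assumed 4 KB pages


def translate(virtual_addr, tlb, page_table):
    """Return (physical_addr, tlb_hit) for a virtual address.

    tlb and page_table map a virtual page number to a physical frame number.
    """
    vpn, offset = divmod(virtual_addr, PAGE_SIZE)
    tlb_hit = vpn in tlb
    if not tlb_hit:
        # TLB miss: walk the page table, then refill the TLB.
        tlb[vpn] = page_table[vpn]
    return tlb[vpn] * PAGE_SIZE + offset, tlb_hit


page_table = {0x12345: 0x00ABC}
tlb = {}
first = translate(0x12345678, tlb, page_table)   # TLB miss
second = translate(0x12345678, tlb, page_table)  # TLB hit
print(hex(first[0]), first[1], second[1])
```

With a PIPT cache, the cache lookup can start only after this function has returned, which is where the extra TLB latency in the hit time comes from.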

TLB and PIPT Cache: Hit and Miss

Limitations of PIPT Cache

- Translation and cache lookup happen one after the other, so the hit time is high.

- Not ideal for inner-level caches.

- The data cache is accessed very frequently, and a TLB access before every data access slows the system significantly.

Virtually Indexed Virtually Tagged Cache

An immediate solution appears to be a Virtually Indexed, Virtually Tagged (VIVT) cache. A VIVT cache looks up the data directly and fetches it without first translating the address to a physical address.

In such a cache, both the tag and the index are part of the logical/virtual address generated by the CPU. The address generated by the CPU can therefore be used directly to fetch the data, which reduces the hit time significantly.

Only if the data is not present in the cache is the TLB checked; after the address has been converted to a physical address, the data is brought into the VIVT cache. Hence, hit time for VIVT = cache hit time.

VIVT Cache Hit and Miss

Limitations of VIVT Cache:

- The TLB contains important flags such as the dirty bit and the valid/invalid bit, so even with a VIVT cache the TLB still has to be checked.

- Many cache misses on a context switch: since the cache is tied to logical addresses and each process has its own logical address space, two processes can use the same address but refer to different data.

Remember that this is the same reason for having a separate page table for every process. It means that the cache needs to be flushed on every context switch, and every context switch is followed by a burst of cache misses. Both are time-consuming and increase the average memory access time.
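The context-switch problem can be illustrated with a toy model. The class below is a hypothetical, heavily simplified VIVT cache (a dictionary keyed by virtual address, with no sets or tags), and the two "memories" stand in for the address spaces of two processes.

```python
class VIVTCache:
    """Toy cache keyed only by virtual address."""

    def __init__(self):
        self.lines = {}  # virtual address -> cached data

    def read(self, virtual_addr, load_from_memory):
        if virtual_addr not in self.lines:  # miss: fetch from memory
            self.lines[virtual_addr] = load_from_memory(virtual_addr)
        return self.lines[virtual_addr]

    def flush(self):
        self.lines.clear()


# Both processes use virtual address 0x1000 for different data.
memory_a = {0x1000: "data of process A"}
memory_b = {0x1000: "data of process B"}

cache = VIVTCache()
cache.read(0x1000, memory_a.get)          # process A runs
stale = cache.read(0x1000, memory_b.get)  # switch to B without a flush
cache.flush()
fresh = cache.read(0x1000, memory_b.get)  # switch to B after a flush
print(stale, "|", fresh)
```

Without the flush, process B is handed the line that process A cached; with the flush, B gets the right data but pays a miss for it.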

A solution to these problems is the Virtually Indexed, Physically Tagged (VIPT) cache. The next sections of the article cover the VIPT cache, the main challenge in a VIPT cache, and some solutions.

Virtually Indexed Physically Tagged Cache (VIPT)

The VIPT cache takes its index bits from the logical/virtual address and its tag bits from the physical address. The cache is indexed with the virtual address, and the physical tag stored in the selected line is read out. In parallel, the TLB is searched with the virtual address to obtain the physical address. Finally, the tag read from the cache is compared with the tag of the physical address obtained from the TLB. If the two match, it is a cache hit; otherwise, it is a cache miss.

VIPT Cache Hit and Miss

Since the TLB is smaller than the cache, its access time is shorter than the cache's access time, so the TLB lookup is hidden behind the cache lookup. Hence, hit time = cache hit time.
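The lookup can be sketched in a few lines. This is a minimal model under stated assumptions: a 4 KB direct mapped cache with 16-byte blocks and 4 KB pages, a TLB that always hits, and dictionaries in place of hardware arrays. The name `vipt_lookup` is hypothetical, and the two reads that hardware performs in parallel are simply written one after the other.

```python
PAGE_BITS = 12   # 4 KB pages
BLOCK_BITS = 4   # 16-byte blocks
INDEX_BITS = 8   # 256 lines -> 4 KB direct mapped cache


def vipt_lookup(virtual_addr, cache_tags, tlb):
    """Return True on a cache hit.

    cache_tags maps a line index to the physical tag stored in that line.
    tlb maps a virtual page number to a physical frame number.
    """
    index = (virtual_addr >> BLOCK_BITS) & ((1 << INDEX_BITS) - 1)
    stored_tag = cache_tags.get(index)              # cache read (virtual bits)
    physical_tag = tlb[virtual_addr >> PAGE_BITS]   # TLB read (parallel in HW)
    return stored_tag == physical_tag


tlb = {0x12345: 0xABC, 0x00001: 0x111}
cache_tags = {0x67: 0xABC}  # line 0x67 holds a block from frame 0xABC

print(vipt_lookup(0x12345678, cache_tags, tlb))  # same index, matching tag
print(vipt_lookup(0x00001678, cache_tags, tlb))  # same index, different tag
```

Because the index comes only from virtual address bits, the cache read does not have to wait for the TLB; the translation is needed only for the final tag comparison.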

A VIPT cache takes the same time as a VIVT cache on a hit and solves the problems of the VIVT cache:

- Since the TLB is accessed in parallel, its flags can be checked at the same time.

- The VIPT cache uses part of the physical address as the tag, and since every memory access in the system corresponds to a unique physical address, data for multiple processes can coexist in the cache. Hence there is no need to flush the cache on every context switch.

One possible problem is a cache hit combined with a TLB miss, which requires a memory access to the page table in physical memory. This happens very rarely: each TLB entry stores only a few bits (address and flags) yet covers a whole page, whereas each cache entry stores a whole block (several bytes) of memory and covers only that block. Hence, the amount of memory covered by the TLB is usually much larger than the amount of memory held in the cache, and data that is present in the cache almost always has its translation in the TLB.

Advantages of Virtually Indexed Physically Tagged Cache

- Avoids sequential access reducing the hit time

- Useful in data caches where the access to cache is frequent

- Avoids cache misses on context switch

- TLB flags can be accessed in parallel with cache access

Challenge in Virtually Indexed Physically Tagged Cache

Virtual indexing in a VIPT cache causes a challenge. A process can have two virtual addresses mapped to the same physical location. This can be done using mmap in Linux:

mmap(virtual_addr_A, 4096, PROT_READ | PROT_WRITE, MAP_SHARED, file_descriptor, offset);

mmap(virtual_addr_B, 4096, PROT_READ | PROT_WRITE, MAP_SHARED, file_descriptor, offset);

The above two lines map the file referred to by file_descriptor to two different virtual addresses but the same physical address. Since the two virtual addresses are different and the cache is virtually indexed, they may be indexed to different locations in the cache. This leads to two copies of the same data block, and when one of these locations is updated, the copies become inconsistent. This problem is called aliasing. Aliasing can also happen with a block shared by two processes.
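The effect of such a double mapping can be observed from user space. The sketch below uses Python's standard `mmap` module on a temporary file; the function name `write_through_alias` is hypothetical. It only shows that two mappings share the same underlying page, not what any particular cache does with them.

```python
import mmap
import tempfile


def write_through_alias():
    """Map one file page twice, write through one view, read via the other."""
    with tempfile.TemporaryFile() as f:
        f.write(b"\x00" * mmap.PAGESIZE)
        f.flush()
        # Two independent mappings of the same file page (shared, writable).
        view_a = mmap.mmap(f.fileno(), mmap.PAGESIZE, access=mmap.ACCESS_WRITE)
        view_b = mmap.mmap(f.fileno(), mmap.PAGESIZE, access=mmap.ACCESS_WRITE)
        try:
            view_a[0:5] = b"hello"
            return bytes(view_b[0:5])
        finally:
            view_a.close()
            view_b.close()


print(write_through_alias())
```

The write made through the first mapping is visible through the second because both virtual ranges are backed by the same physical page. The hardware and operating system have to preserve exactly this behaviour even when the cache is virtually indexed.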

The following four solutions address this problem:

- The first solution is to invalidate any other copy of the data in the cache when a memory location is updated.

- The second solution is to update every other copy of the data in the cache when a memory location is updated.

Note that the first and second solutions require checking whether any other cache location maps to the same physical memory location. This requires translating the virtual address to a physical address, but VIPT was meant to avoid waiting for translation because it adds to the hit latency.

- The third solution involves reducing the cache size. Remember that during translation of a virtual address to a physical address, the page offset of the virtual address is the same as that of the physical address. This means that two virtual addresses mapped to the same physical address also have the same page offset. To make sure that both virtual addresses map to the same index in the cache, the index field must lie completely within the page offset.
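The condition in the third solution can be checked mechanically. The helpers below are a hypothetical sketch that assumes all sizes are powers of two; the `ways` parameter (associativity) is an addition for illustration and defaults to a direct mapped cache.

```python
def index_bit_range(cache_size, block_size, ways=1):
    """Return (lowest, highest) address bit positions used as the set index."""
    offset_bits = block_size.bit_length() - 1   # log2(block size)
    num_sets = cache_size // (block_size * ways)
    index_bits = num_sets.bit_length() - 1      # log2(number of sets)
    return offset_bits, offset_bits + index_bits - 1


def is_alias_free(cache_size, block_size, page_size, ways=1):
    """True if every index bit lies inside the page offset."""
    page_offset_bits = page_size.bit_length() - 1
    _, highest = index_bit_range(cache_size, block_size, ways)
    return highest < page_offset_bits


KB = 1024
for size in (64 * KB, 4 * KB, 2 * KB):
    print(size // KB, "KB:", index_bit_range(size, 16),
          is_alias_free(size, 16, 4 * KB))
```

Under these assumptions, raising the associativity also shrinks the index field, so a larger set-associative cache can satisfy the same condition.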

Example demonstrating Aliasing:

Consider a system with:

32 bit virtual address,

block size 16 Bytes and page size 4 KB

(i) Direct Mapped Cache: 64 KB

(ii) Direct Mapped Cache: 4 KB

(iii) Direct Mapped Cache: 2 KB

(i) In the 32-bit virtual address, for a 64KB direct mapped cache,

bits 15 to 4 are for index,

bits 11 to 0 are page offset(which means for any two VA pointing to the same PA, 12 LSB bits will be the same).

64 KB Direct mapped cache

Two virtual addresses, 0x0045626E and 0xFF21926E, can be mapped to the same physical address. The first address is indexed to cache line (626)H and the second to cache line (926)H. This shows that aliasing happens with a 64KB direct mapped cache for this system. Also note that the last 12 bits are the same in both addresses: they map to the same physical address, and the last 12 bits form the page offset, which is the same for both virtual addresses.

(ii) In the 32-bit virtual address, for a 4KB direct mapped cache, bits 11 to 4 are for index, bits 11 to 0 are page offset(which means for any two VA pointing to the same PA, 12 LSB bits will be the same).

4 KB Direct mapped cache

Two addresses can be 0x12345678 and 0xFEDCB678. Note that the 12 LSB bits are the same, i.e. (678)H. Since for a 4KB direct mapped cache the index bits lie completely within the page offset, both virtual addresses map to the same cache index, (67)H. Hence, only one copy stays in the cache at a time and no aliasing occurs.

(iii) The following representation of a 2KB direct mapped cache for the system shows no aliasing: bits 10 to 4 form the index and lie entirely within the page offset. Hence, any direct mapped cache of size smaller than or equal to 4KB (the page size) causes no aliasing.

2 KB Direct mapped cache
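The indices in the three cases can be recomputed with a short sketch. The function name `cache_index` is hypothetical; the addresses and sizes are the ones used in the example, with a direct mapped cache and 16-byte blocks.

```python
def cache_index(addr, cache_size, block_size=16):
    """Line index of addr in a direct mapped cache."""
    num_lines = cache_size // block_size
    return (addr // block_size) % num_lines


KB = 1024

# (i) 64 KB cache: the two aliases land on different lines.
print(hex(cache_index(0x0045626E, 64 * KB)), hex(cache_index(0xFF21926E, 64 * KB)))

# (ii) 4 KB and (iii) 2 KB caches: the two aliases land on the same line.
for size in (4 * KB, 2 * KB):
    print(hex(cache_index(0x12345678, size)), hex(cache_index(0xFEDCB678, size)))
```

In cases (ii) and (iii) the index depends only on bits inside the page offset, so any two virtual addresses that share a physical address must produce the same index.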

- The third solution requires a smaller cache, and a smaller cache causes more cache misses. Solutions 1, 2 and 3 are hardware based. The fourth solution, called page coloring (or cache coloring), is a software solution implemented by the operating system that avoids aliasing without limiting the cache size. For the 64KB cache above, 16 colors (64 KB cache size / 4 KB page size) are needed to ensure that no aliasing happens. Further reading: Page Coloring on ARMv6
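The color count can be derived with a small sketch. The helpers below are hypothetical and assume a direct mapped cache unless `ways` is given. A page's color is taken to be the index bits that lie above the page offset, and the operating system is assumed to give all virtual aliases of a physical page the same color.

```python
def num_colors(cache_size, page_size, ways=1):
    """Number of page colors: cache bytes per way divided by the page size."""
    return max(1, cache_size // (ways * page_size))


def page_color(virtual_addr, cache_size, page_size=4096, ways=1):
    """Color of the page containing virtual_addr."""
    return (virtual_addr // page_size) % num_colors(cache_size, page_size, ways)


KB = 1024
print(num_colors(64 * KB, 4 * KB))        # colors for the 64 KB cache
print(page_color(0x0045626E, 64 * KB))    # color of the first alias
print(page_color(0xFF21926E, 64 * KB))    # different color: may alias
print(page_color(0xFF21626E, 64 * KB))    # same color as the first address
```

The two addresses from case (i) have different colors, which is why they index different cache lines. If the second mapping were instead placed at 0xFF21626E (an address chosen here for illustration), both aliases would share a color and therefore a cache index.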
