Software Testing Metrics: Types and Examples

Last Updated: 16 Jan, 2024

Software testing metrics are quantifiable indicators of the progress, quality, productivity, and overall health of the software testing process. Their purpose is to make testing more efficient and effective, and to support better decisions about future testing by providing accurate data about the process. A metric expresses, in numerical terms, the degree to which a system, system component, or process possesses a given attribute. For example, comparing an automobile's actual weekly mileage with the ideal mileage specified by the manufacturer is a metric.

Importance of Metrics in Software Testing:

Test metrics are essential in determining the software’s quality and performance. Developers may use the right software testing metrics to improve their productivity.

- Early Problem Identification: By measuring metrics such as defect density and defect arrival rate, testing teams can spot trends and patterns early in the development process.

- Allocation of Resources: Metrics identify the areas where testing effort is most needed, which helps optimize resource allocation. Concentrating testing resources on these areas improves the overall testing strategy.

- Monitoring Progress: Metrics are useful for monitoring the progress of testing. They show how many test cases have been run, the completion rate, and whether the testing effort is proceeding according to plan.

- Continuous Improvement: Metrics provide feedback on the testing process, which helps to foster a culture of continuous improvement.

Types of Software Testing Metrics:

Software testing metrics are divided into three categories:

- Process Metrics: Process metrics describe the characteristics and execution of a process. They are critical to improving and maintaining the Software Development Life Cycle (SDLC) process.

- Product Metrics: Product metrics describe a product's size, design, performance, quality, and complexity. Developers can use them to improve the quality of the software they build.

- Project Metrics: Project metrics are used to assess a project's overall quality. They are used to estimate a project's resources and deliverables, and to determine costs, productivity, and defects.

It is critical to determine the appropriate testing metrics for the process. A few points to keep in mind:

- Before creating the metrics, carefully select your target audience.

- Define the goal the metrics are meant to serve.

- Prepare the metrics based on the project's specific requirements. Assess the financial gain associated with each metric.

- Match the metrics to the project life cycle phase for the best results.

The major benefit of automated testing is that it allows testers to run more tests in less time while covering a large number of variations that would be impractical to check manually.

Manual Test Metrics: What Are They and How Do They Work?

Manual testing is carried out step by step by quality assurance experts. In automated testing, test automation frameworks, tools, and software are used to execute the tests. Both manual and automated testing have advantages and disadvantages. Manual testing is time-consuming, but it allows testers to handle more complex scenarios. There are two types of manual test metrics:

1. Base Metrics: Base metrics are the raw data that analysts collect while developing and executing test cases. They are passed to test leads and project managers in a project status report, and they serve as the input for calculated metrics. Examples include:

- The total number of test cases

- The total number of test cases completed.

2. Calculated Metrics: Data from base metrics are used to create calculated metrics. The test lead collects this information and transforms it into more useful information for tracking project progress at the module, tester, and other levels. It’s an important aspect of the SDLC since it allows developers to make critical software changes.
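As a minimal sketch of the relationship between the two, the snippet below rolls base counts up into a calculated completion percentage at the module and tester levels. The record layout, the module and tester names, and the `completion_by` helper are assumptions made for illustration; they do not come from any specific tool.

```python
from collections import defaultdict

# Hypothetical base metrics: one record per (module, tester) pair.
# Each record holds raw counts collected during test execution.
base_records = [
    {"module": "login", "tester": "A", "total": 40, "completed": 30},
    {"module": "login", "tester": "B", "total": 20, "completed": 15},
    {"module": "checkout", "tester": "A", "total": 50, "completed": 20},
]


def completion_by(records, key):
    """Roll base counts up by `key` and return completion percentages."""
    totals = defaultdict(int)
    completed = defaultdict(int)
    for record in records:
        totals[record[key]] += record["total"]
        completed[record[key]] += record["completed"]
    # Calculated metric: completed / total, as a percentage.
    return {
        group: round(completed[group] / totals[group] * 100, 2)
        for group in totals
        if totals[group] > 0
    }


by_module = completion_by(base_records, "module")
by_tester = completion_by(base_records, "tester")
```

The same base records feed both views; only the grouping key changes, which is why base metrics are usually stored at the finest level that is practical to collect.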

Other Important Metrics:

The following are some of the other important software metrics:

- Defect metrics: Defect metrics help engineers understand the many aspects of software quality, such as functionality, performance, installation stability, usability, compatibility, and so on.

- Schedule Adherence: Schedule Adherence’s major purpose is to determine the time difference between a schedule’s expected and actual execution times.

- Defect Severity: The severity of the problem allows the developer to see how the defect will affect the software’s quality.

- Test case efficiency: Test case efficiency is a measure of how effective test cases are at detecting problems.

- Defect finding rate: It tracks the pattern of defects found over a period of time.

- Defect Fixing Time: The amount of time it takes to remedy a problem is known as defect fixing time.

- Test Coverage: It specifies the number of test cases assigned to the program. This metric helps confirm that testing is thorough. It also aids in verifying code flow and testing functionality.

- Defect cause: It is used to identify the root cause of a defect.
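Two of the metrics above, defect fixing time and defect finding rate, can be derived from little more than a pair of dates per defect. The following is a minimal sketch under that assumption; the defect log and its dates are invented for illustration, and grouping by ISO week is just one possible choice of time bucket.

```python
from collections import Counter
from datetime import date

# Hypothetical defect log: (date reported, date fixed or None if still open).
defects = [
    (date(2024, 1, 2), date(2024, 1, 4)),
    (date(2024, 1, 3), date(2024, 1, 10)),
    (date(2024, 1, 9), None),
    (date(2024, 1, 11), date(2024, 1, 12)),
]

# Defect fixing time: days between report and fix, for fixed defects only.
fix_days = [(fixed - reported).days for reported, fixed in defects if fixed]
average_fix_days = sum(fix_days) / len(fix_days) if fix_days else None

# Defect finding rate: number of defects reported per ISO week number.
found_per_week = Counter(reported.isocalendar()[1] for reported, _ in defects)
```

Open defects are excluded from the fixing-time average here; a team could instead count them up to the current date, which would give a more pessimistic figure.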

Test Metrics Life Cycle:

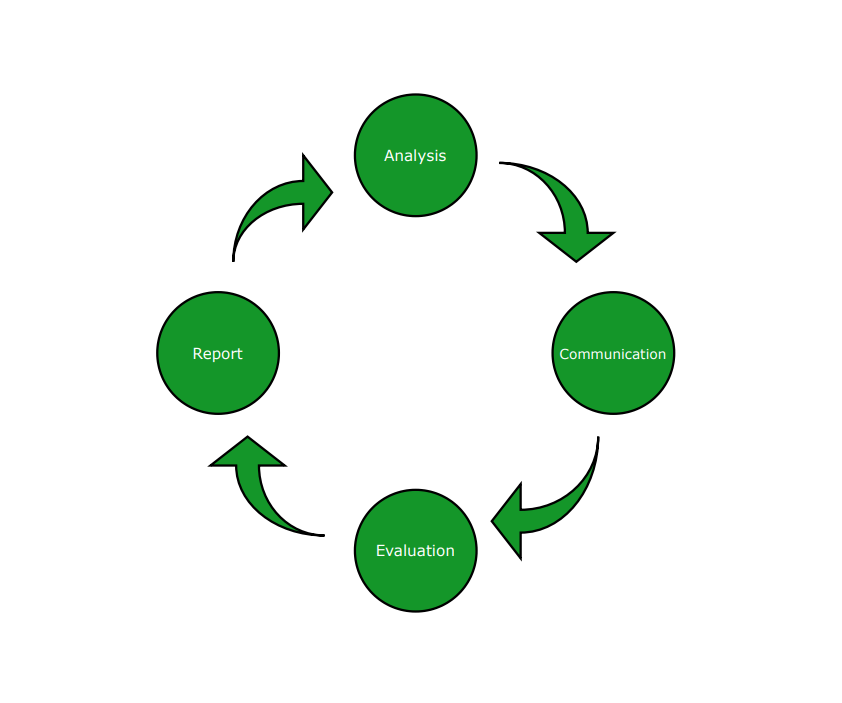

The below diagram illustrates the different stages in the test metrics life cycle.

Test Metrics Lifecycle

The various stages of the test metrics lifecycle are:

- Analysis:

- Identify the metrics to be used.

- Define the QA metrics that have been identified.

- Communicate:

- Stakeholders and the testing team should be informed about the requirement for metrics.

- Educate the testing team on the data points that must be collected in order to process the metrics.

- Evaluation:

- Capture and verify the data.

- Use the collected data to calculate the value of the metrics.

- Report:

- Prepare the report with a clear conclusion.

- Distribute the report to the appropriate stakeholders and representatives.

- Gather feedback from stakeholder representatives.

To get the percentage execution status of the test cases, the following formula can be used:

Percentage test cases executed = (No of test cases executed / Total no of test cases written) x 100
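The formula above can be sketched as a small helper. The function name `percentage` and the sample counts are assumptions chosen for illustration; the guard against a zero denominator covers the case where no test cases have been written yet.

```python
def percentage(part, whole):
    """Return part / whole as a percentage, rounded to two decimals."""
    if whole <= 0:
        raise ValueError("whole must be a positive number")
    return round(part / whole * 100, 2)


# Hypothetical counts: 45 of 60 written test cases have been executed.
executed_pct = percentage(45, 60)
not_executed_pct = percentage(60 - 45, 60)
```

Because every status-based percentage shares the same shape, one helper is enough; only the numerator and denominator change.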

Similarly, it is possible to calculate for other parameters also such as test cases that were not executed, test cases that were passed, test cases that were failed, test cases that were blocked, and so on. Below are some of the formulas:

1. Test Case Effectiveness:

Test Case Effectiveness = (Number of defects detected / Number of test cases run) x 100

2. Passed Test Cases Percentage: This metric indicates the percentage of executed test cases that passed.

Passed Test Cases Percentage = (Total number of tests passed / Total number of tests executed) x 100

3. Failed Test Cases Percentage: This metric measures the proportion of all failed test cases.

Failed Test Cases Percentage = (Total number of failed test cases / Total number of tests executed) x 100

4. Blocked Test Cases Percentage: During the software testing process, this parameter determines the percentage of test cases that are blocked.

Blocked Test Cases Percentage = (Total number of blocked tests / Total number of tests executed) x 100

5. Fixed Defects Percentage: Using this measure, the team may determine the percentage of defects that have been fixed.

Fixed Defects Percentage = (Total number of defects fixed / Number of defects reported) x 100

6. Rework Effort Ratio: This measures the share of the effort in a phase that was spent on rework.

Rework Effort Ratio = (Actual rework efforts spent in that phase / Total actual efforts spent in that phase) x 100

7. Accepted Defects Percentage: This measures the percentage of reported defects that the development team accepts as valid.

Accepted Defects Percentage = (Defects Accepted as Valid by Dev Team / Total Defects Reported) x 100

8. Defects Deferred Percentage: This measures the percentage of the defects that are deferred for future release.

Defects Deferred Percentage = (Defects deferred for future releases / Total Defects Reported) x 100
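As an illustration of the Rework Effort Ratio formula, the sketch below computes the ratio per phase. The phase names, the person-hour figures, and the `rework_effort_ratio` helper are hypothetical; the input checks reflect the assumption that rework effort is part of the total effort of a phase and therefore cannot exceed it.

```python
# Hypothetical effort log in person-hours per phase: (rework, total actual).
effort_by_phase = {
    "design": (8, 80),
    "coding": (30, 200),
    "testing": (12, 60),
}


def rework_effort_ratio(rework_hours, total_hours):
    """Rework effort as a percentage of total actual effort in one phase."""
    if total_hours <= 0:
        raise ValueError("total_hours must be positive")
    if not 0 <= rework_hours <= total_hours:
        raise ValueError("rework_hours must lie between 0 and total_hours")
    return round(rework_hours / total_hours * 100, 2)


ratios = {
    phase: rework_effort_ratio(rework, total)
    for phase, (rework, total) in effort_by_phase.items()
}
```

Computing the ratio per phase rather than for the whole project makes it easier to see where rework is concentrated.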

Example of Software Test Metrics Calculation:

Let’s take an example to calculate test metrics:

| No. | Parameter | Value |
|---|---|---|
| 1 | No. of requirements | 5 |
| 2 | The average number of test cases written per requirement | 40 |
| 3 | Total no. of test cases written for all requirements | 200 |
| 4 | Total no. of test cases executed | 164 |
| 5 | No. of test cases passed | 100 |
| 6 | No. of test cases failed | 60 |
| 7 | No. of test cases blocked | 4 |
| 8 | No. of test cases unexecuted | 36 |
| 9 | Total no. of defects identified | 20 |
| 10 | Defects accepted as valid by the dev team | 15 |
| 11 | Defects deferred for future releases | 5 |
| 12 | Defects fixed | 12 |

1. Percentage test cases executed = (No. of test cases executed / Total no. of test cases written) x 100

= (164 / 200) x 100

= 82%

2. Test Case Effectiveness = (Number of defects detected / Number of test cases run) x 100

= (20 / 164) x 100

= 12.2%

3. Failed Test Cases Percentage = (Total number of failed test cases / Total number of tests executed) x 100

= (60 / 164) x 100

= 36.59%

4. Blocked Test Cases Percentage = (Total number of blocked tests / Total number of tests executed) x 100

= (4 / 164) x 100

= 2.44%

5. Fixed Defects Percentage = (Total number of defects fixed / Number of defects reported) x 100

= (12 / 20) x 100

= 60%

6. Accepted Defects Percentage = (Defects Accepted as Valid by Dev Team / Total Defects Reported) x 100

= (15 / 20) x 100

= 75%

7. Defects Deferred Percentage = (Defects deferred for future releases / Total Defects Reported) x 100

= (5 / 20) x 100

= 25%
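The calculations above can be reproduced with a short script, which is a convenient way to check the arithmetic. This is a minimal sketch: the variable names and the `pct` helper are chosen for illustration, while the input values come from the example table. It also computes the passed percentage, taken here as passed test cases divided by executed test cases, which is not worked out in the list above.

```python
# Values taken from the example table above.
written = 200
executed = 164
passed = 100
failed = 60
blocked = 4
defects_identified = 20
defects_accepted = 15
defects_deferred = 5
defects_fixed = 12


def pct(part, whole):
    """Return part / whole as a percentage, rounded to two decimals."""
    return round(part / whole * 100, 2)


metrics = {
    "executed": pct(executed, written),
    "test_case_effectiveness": pct(defects_identified, executed),
    "passed": pct(passed, executed),
    "failed": pct(failed, executed),
    "blocked": pct(blocked, executed),
    "fixed_defects": pct(defects_fixed, defects_identified),
    "accepted_defects": pct(defects_accepted, defects_identified),
    "deferred_defects": pct(defects_deferred, defects_identified),
}

# Sanity check on the table: passed + failed + blocked equals executed.
assert passed + failed + blocked == executed

for name, value in metrics.items():
    print(f"{name}: {value}%")
```

The sanity check is worth keeping in real reports as well, since status counts that do not add up to the executed total usually point to a data collection problem.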

Conclusion:

Metrics are crucial to software testing because they help teams assess, manage, and enhance the process. They give teams the information needed to make better decisions, improve the quality of the program, and advance the software development life cycle as a whole.
