Scheduling a Cron Job on Google Cloud

Last Updated: 13 Feb, 2022

Python is often called a glue language because it’s a powerful tool for easily combining multiple different systems. Part of this is because Python has great client library support, which lets you interact with lots of APIs, products, and services from the comfort of Python. But it doesn’t just end with the cloud. Python is useful for combining just about anything with anything else.

A cron job is a task that the Unix cron scheduler runs automatically at fixed times or intervals. Cron jobs are most commonly used for automation. In this article, we’ll walk through the steps of scheduling a Python cron job on Google Cloud using Cloud Functions and Cloud Scheduler.

As an example, we’ll write a Python script that gets the top stories from the Hacker News API and iterates over each one. If any story titles mention “serverless”, it sends you an email with the links. We’ll use the requests library to make HTTP requests to the API, which happens to be hosted on Firebase, a Google product.

The first step is to write a Python script that defines the API endpoints:

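```python
from requests import get  # third-party package: pip install requests

# Base URL of the Hacker News API (hosted on Firebase)
api_url = 'https://hacker-news.firebaseio.com/v0/'
top_stories_url = api_url + 'topstories.json'  # IDs of the current top stories
item_url = api_url + 'item/{}.json'            # a single item, looked up by ID
```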
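The article elides the loop that checks each story’s title. A minimal sketch of that step follows, assuming the Hacker News API’s documented response shapes: `topstories.json` returns a list of item IDs, and `item/<id>.json` returns an object with a `title` and, for link posts, a `url`. The helper names `fetch_json` and `find_serverless_stories` are hypothetical, the 30-story limit is an assumption (roughly one front page), and this sketch swaps in the standard library’s `urllib.request` for `requests` so it runs without third-party packages and so the fetch function can be replaced with a stub.

```python
import json
from typing import Any, Callable, Dict, List
from urllib.request import urlopen

# Hacker News API endpoints (the API is hosted on Firebase)
api_url = 'https://hacker-news.firebaseio.com/v0/'
top_stories_url = api_url + 'topstories.json'
item_url = api_url + 'item/{}.json'


def fetch_json(url: str) -> Any:
    """Download `url` and decode its JSON body."""
    with urlopen(url, timeout=10) as response:
        return json.loads(response.read().decode('utf-8'))


def find_serverless_stories(
    fetch: Callable[[str], Any] = fetch_json,
    keyword: str = 'serverless',
    limit: int = 30,
) -> List[Dict[str, Any]]:
    """Return top stories whose title contains `keyword`, ignoring case.

    Only the first `limit` IDs are checked (an assumption: roughly one
    front page), since each item needs its own HTTP request.
    """
    story_ids = fetch(top_stories_url)[:limit]
    matches = []
    for story_id in story_ids:
        item = fetch(item_url.format(story_id))
        # Missing items come back as null (None); deleted ones may lack a title
        if not item:
            continue
        if keyword.lower() in item.get('title', '').lower():
            matches.append(item)
    return matches
```

Inside `check_serverless_stories`, a call such as `serverless_stories = find_serverless_stories()` could stand in for the elided loop. Passing `fetch` as a parameter keeps the filtering logic testable without network access.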

Then if any of the stories match, we’ll use some custom code to send an email formatted with all of the “serverless” stories. We can put this all in a single Python function as shown below:

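```python
from utils import send_email  # custom email helper (not shown in this article)

def check_serverless_stories(request):
    # `request` is the incoming HTTP request that Cloud Functions passes in;
    # the API call itself uses get() from the requests library
    top_stories = get(top_stories_url).json()

    serverless_stories = []

    ...  # iterate over top_stories and collect those whose titles match "serverless"

    # Only send an email when at least one story matched
    if serverless_stories:
        send_email(serverless_stories)

    # An HTTP-triggered function must return a response
    return 'OK'
```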
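The `utils.send_email` helper is custom code that the article doesn’t show. One possible implementation is sketched below with the standard library’s `smtplib` and `email.message`, assuming each story is a dict shaped like a Hacker News item (`id`, `title`, optional `url`). The environment variable names (`SMTP_HOST`, `SMTP_PORT`, `SMTP_USER`, `SMTP_PASSWORD`, `EMAIL_TO`), the placeholder defaults, and the `build_email` helper are all hypothetical; in a real deployment you’d likely keep credentials in a secret store rather than in code.

```python
import os
import smtplib
from email.message import EmailMessage
from typing import Any, Dict, List

# Hypothetical settings, read from environment variables with placeholder defaults
SMTP_HOST = os.environ.get('SMTP_HOST', 'smtp.example.com')
SMTP_PORT = int(os.environ.get('SMTP_PORT', '587'))
SMTP_USER = os.environ.get('SMTP_USER', 'bot@example.com')
SMTP_PASSWORD = os.environ.get('SMTP_PASSWORD', '')
RECIPIENT = os.environ.get('EMAIL_TO', 'you@example.com')


def build_email(stories: List[Dict[str, Any]]) -> EmailMessage:
    """Format the matching stories as a plain-text email."""
    msg = EmailMessage()
    msg['Subject'] = f'{len(stories)} serverless stories on Hacker News'
    msg['From'] = SMTP_USER
    msg['To'] = RECIPIENT
    lines = []
    for story in stories:
        # Text posts (e.g. Ask HN) have no 'url', so link to the discussion page
        link = story.get('url') or f"https://news.ycombinator.com/item?id={story.get('id')}"
        lines.append(f"{story.get('title', '(untitled)')}\n{link}")
    msg.set_content('\n\n'.join(lines))
    return msg


def send_email(stories: List[Dict[str, Any]]) -> None:
    """Send the formatted email over SMTP, upgrading the connection with STARTTLS."""
    msg = build_email(stories)
    with smtplib.SMTP(SMTP_HOST, SMTP_PORT) as server:
        server.starttls()
        server.login(SMTP_USER, SMTP_PASSWORD)
        server.send_message(msg)
```

Splitting formatting (`build_email`) from sending keeps the message layout checkable without an SMTP server.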

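We can then deploy the function to Cloud Functions. Run the following command from the directory that contains the code in `main.py`, together with the `utils` module and a `requirements.txt` file that lists `requests`:

```shell
$ gcloud functions deploy check_serverless_stories \
    --runtime python38 \
    --trigger-http \
    --allow-unauthenticated
```

The `--allow-unauthenticated` flag makes the function’s URL publicly callable, so Cloud Scheduler can invoke it without extra authentication setup.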

After deploying, gcloud prints an HTTPS URL for the function. Whenever this URL is requested, the function emails us any stories on the Hacker News front page whose titles mention “serverless”.

The last step is to run the function on a schedule. For this, we use Cloud Scheduler to create a job that runs once a day.

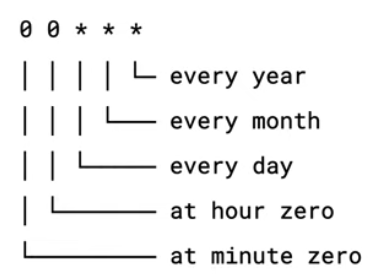

Cron jobs are scheduled at recurring intervals specified using the Unix cron format. You can define the schedule so that your job runs multiple times a day, or runs on specific days and months.
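To make the five cron fields (minute, hour, day of month, month, day of week) concrete, here is a simplified matcher. It is a sketch, not Cloud Scheduler’s actual parser, and the names `cron_matches` and `_field_matches` are hypothetical: it handles only `*`, single numbers, and comma-separated lists, not ranges (`1-5`) or steps (`*/15`). It does follow cron’s rule that when both day-of-month and day-of-week are restricted, a day matches if either field matches.

```python
from datetime import datetime


def _field_matches(field: str, value: int) -> bool:
    """True if one cron field ('*', '5' or '1,15') selects `value`."""
    if field == '*':
        return True
    return value in {int(part) for part in field.split(',')}


def cron_matches(expr: str, when: datetime) -> bool:
    """True if the minute containing `when` is selected by a 5-field cron expression."""
    minute, hour, day_of_month, month, day_of_week = expr.split()

    # cron numbers weekdays 0-6 from Sunday (7 is also Sunday);
    # datetime.weekday() numbers them 0-6 from Monday
    cron_weekday = (when.weekday() + 1) % 7
    dow_ok = _field_matches(day_of_week, cron_weekday) or (
        cron_weekday == 0 and _field_matches(day_of_week, 7)
    )
    dom_ok = _field_matches(day_of_month, when.day)

    # If both day fields are restricted, cron runs when EITHER one matches
    if day_of_month != '*' and day_of_week != '*':
        day_ok = dom_ok or dow_ok
    else:
        day_ok = dom_ok and dow_ok

    return (
        _field_matches(minute, when.minute)
        and _field_matches(hour, when.hour)
        and _field_matches(month, when.month)
        and day_ok
    )


print(cron_matches('0 0 * * *', datetime(2022, 2, 13, 0, 0)))   # True
print(cron_matches('0 0 * * *', datetime(2022, 2, 13, 12, 0)))  # False
```

Here `0 0 * * *` selects minute 0 of hour 0 on every day, which is the “once a day at midnight” schedule used below.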

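We’ll schedule our job to run once a day at midnight, which is `0 0 * * *` in cron format. The `gcloud scheduler jobs create http` command creates a job that sends an HTTP request to our function on that schedule:

```shell
$ gcloud scheduler jobs create http email_job \
    --schedule="0 0 * * *" \
    --uri=https://YOUR_REGION-YOUR_PROJECT_ID.cloudfunctions.net/check_serverless_stories
```

Replace the URI with the function URL printed at deploy time. The schedule is interpreted in the job’s time zone, which defaults to UTC unless you set `--time-zone`.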
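For a daily schedule such as `0 0 * * *`, the next run time is simple date arithmetic. The hypothetical helper below sketches it, assuming the schedule is evaluated in UTC as in the example call; it covers only the `minute hour * * *` shape, not general cron expressions.

```python
from datetime import datetime, timedelta, timezone


def next_daily_run(after: datetime, hour: int = 0, minute: int = 0) -> datetime:
    """Return the first time strictly after `after` selected by 'minute hour * * *'."""
    # Same day at the scheduled time...
    candidate = after.replace(hour=hour, minute=minute, second=0, microsecond=0)
    # ...unless that moment is not in the future, in which case run the next day
    if candidate <= after:
        candidate += timedelta(days=1)
    return candidate


now = datetime(2022, 2, 13, 15, 30, tzinfo=timezone.utc)
print(next_daily_run(now))  # 2022-02-14 00:00:00+00:00
```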

To confirm that the job was created, list the scheduler jobs:

```shell
$ gcloud scheduler jobs list
```

To run the job once right away, regardless of its schedule, use `gcloud scheduler jobs run`:

```shell
$ gcloud scheduler jobs run email_job
```

This gets you any serverless articles delivered right to your inbox every day without you having to do a thing.
