Saving a machine learning Model

Last Updated: 11 Jan, 2023

When working with the scikit-learn library, we often need to save a trained model to a file and restore it later, whether to reuse it, to compare it with other models, or to test it on new data. Saving the model is called serialization, while restoring it is called deserialization.

We also deal with datasets of different types and sizes. Some models train quickly, but training on a large dataset (more than 1 GB, for example) can take a very long time on a local machine, even with a GPU. If the same trained model is needed later or in a different project, storing it avoids repeating the training and lets the model be reused at any time.

There are two ways we can save a model in scikit-learn:

Way 1: Pickle string:

The pickle module implements a fundamental, but powerful algorithm for serializing and de-serializing a Python object structure.

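The pickle module provides the following functions:

- pickle.dump and pickle.dumps serialize an object hierarchy: dump() writes to an open binary file, while dumps() returns a bytes object.

- pickle.load and pickle.loads deserialize a data stream: load() reads from an open binary file, while loads() reads from a bytes object.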
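The difference between the file-based and in-memory functions can be sketched as follows. This is a minimal illustration, not part of the original example: the `params` dictionary is a hypothetical stand-in for a trained model, and the file name `params.pkl` is an assumption.

```python
import pickle
import tempfile
from pathlib import Path

# Hypothetical stand-in for a trained model: any picklable Python object works.
params = {"n_neighbors": 3, "weights": "uniform", "classes": [0, 1, 2]}

# dumps/loads: serialize to an in-memory bytes object and back.
payload = pickle.dumps(params)
restored_from_bytes = pickle.loads(payload)

# dump/load: serialize to a file opened in binary mode and back.
with tempfile.TemporaryDirectory() as tmp_dir:
    path = Path(tmp_dir) / "params.pkl"  # assumed file name
    with open(path, "wb") as f:
        pickle.dump(params, f)
    with open(path, "rb") as f:
        restored_from_file = pickle.load(f)

print(isinstance(payload, bytes))
print(restored_from_bytes == params)
print(restored_from_file == params)
```

Note that the file must be opened in binary mode ("wb" and "rb"), since pickle produces bytes rather than text.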

Example: Let’s apply K Nearest Neighbor on the iris dataset and then save the model.

Python3

from sklearn.neighbors import KNeighborsClassifier as KNN

import numpy as np

from sklearn.datasets import load_iris

iris = load_iris()

X = iris.data

y = iris.target

X_train, X_test, y_train, y_test = \

train_test_split(X, y, test_size=0.3,

random_state=2018)

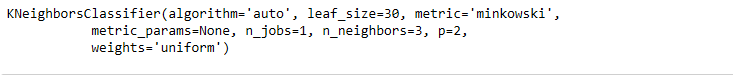

knn = KNN(n_neighbors=3)

knn.fit(X_train, y_train)


Save a model to a byte string using pickle:

Python3

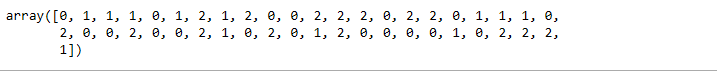

import pickle

saved_model = pickle.dumps(knn)

knn_from_pickle = pickle.loads(saved_model)

knn_from_pickle.predict(X_test)


Way 2: Pickled model as a file using joblib:

Joblib is an alternative to pickle that is more efficient for objects carrying large NumPy arrays. Its dump and load functions also accept file-like objects instead of filenames.

- joblib.dump to serialize an object hierarchy.

- joblib.load to deserialize a data stream.
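Passing a file-like object instead of a filename might look like the sketch below. The `model_like` dictionary is a hypothetical stand-in for a fitted estimator holding a large NumPy array, and the file name `model_like.joblib` is an assumption chosen for illustration.

```python
import tempfile
from pathlib import Path

import joblib
import numpy as np

# Hypothetical stand-in for a fitted model that carries a large NumPy array.
model_like = {"weights": np.arange(100_000, dtype=np.float64), "n_neighbors": 3}

with tempfile.TemporaryDirectory() as tmp_dir:
    path = Path(tmp_dir) / "model_like.joblib"  # assumed file name

    # joblib.dump accepts an open binary file object in place of a filename.
    with open(path, "wb") as f:
        joblib.dump(model_like, f)

    # joblib.load likewise accepts an open binary file object.
    with open(path, "rb") as f:
        restored = joblib.load(f)

print(np.array_equal(restored["weights"], model_like["weights"]))
print(restored["n_neighbors"])
```

Working with file objects can be convenient when the caller already manages the file handle, for example inside a larger save routine.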

Save to a pickled file using joblib:

Python3

from joblib import Parallel, delayed

import joblib

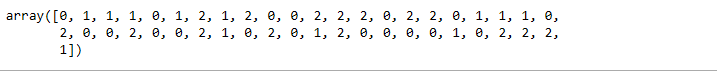

joblib.dump(knn, 'filename.pkl')

knn_from_joblib = joblib.load('filename.pkl')

knn_from_joblib.predict(X_test)
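One way to check that a restored model behaves like the original is to compare their predictions and hyperparameters. The following self-contained sketch repeats the iris setup from the example and assumes a temporary file named `knn_iris.pkl`; the variable names `same_predictions` and `same_params` are illustrative only.

```python
import tempfile
from pathlib import Path

import joblib
import numpy as np
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier

# Same data split as in the example above.
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=2018
)

knn = KNeighborsClassifier(n_neighbors=3).fit(X_train, y_train)

# Round-trip the fitted model through a file on disk.
with tempfile.TemporaryDirectory() as tmp_dir:
    model_path = Path(tmp_dir) / "knn_iris.pkl"  # assumed file name
    joblib.dump(knn, model_path)
    knn_restored = joblib.load(model_path)

# The restored model should predict exactly what the original predicts.
same_predictions = np.array_equal(knn.predict(X_test), knn_restored.predict(X_test))
same_params = knn.get_params() == knn_restored.get_params()
print(same_predictions, same_params)
```

A check like this is most meaningful when the model is saved and loaded with the same scikit-learn version.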
