Project Idea | Audio to Sign Language Translator

Last Updated: 13 Sep, 2019

Overview

Sign language is a visual language that deaf people use as their mother tongue. Unlike acoustically conveyed sound patterns, sign language uses body language and manual communication to fluidly convey a person's thoughts. It does this by simultaneously combining hand shapes, the orientation and movement of the hands, arms or body, and facial expressions. It can be used by people who have difficulty speaking, by people who can hear but cannot speak, and by hearing people who want to communicate with deaf or hard-of-hearing people. For a deaf person, access to a sign language is very important for social, emotional and linguistic growth. Sign language should be recognized as the first language of deaf people, and their education can proceed bilingually in the national sign language and the national written or spoken language.

Indian Sign Language is used by deaf and hard-of-hearing people to communicate by making signs with different parts of the body. There are different communities of deaf people all around the world, and the languages of these communities differ: American Sign Language (ASL) is used in the USA, British Sign Language (BSL) is used in Britain, and Indian Sign Language (ISL) is used in India. ISL uses manual communication and body language (non-manual communication) to convey thoughts, ideas or feelings. ISL signs can generally be classified into three classes: one-handed, two-handed, and non-manual signs. One-handed and two-handed signs are also called manual signs, where the signer uses his or her hands to make the signs that convey the information. Non-manual signs are produced by changing body posture and facial expressions. This system aims to help hearing-impaired people in India interact with others by translating English text into sign language.

Goals

1. To provide information access and services to deaf people in Indian Sign Language.

2. To develop a scalable project that can be extended to capture the whole vocabulary of ISL through manual and non-manual signs.

Technical Specifications

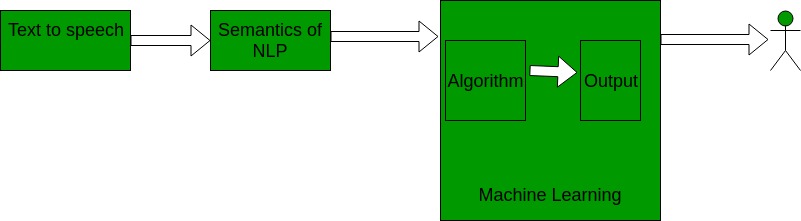

This project converts the received audio signal to text using a speech-to-text API (Python modules or the Google API), then uses natural language processing to break the text down into smaller, understandable pieces, a step that relies on machine learning. Data sets of predefined signs are used as input so that the software can display the converted audio as sign language.
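The lookup against a data set of predefined signs can be sketched roughly as follows. This is a minimal illustration, not the project's actual implementation: the `SIGN_CLIPS` dictionary, the file paths, and the `clips_for` function are all hypothetical, and falling back to fingerspelling for unknown words is an assumption about how missing vocabulary might be handled.

```python
# Hypothetical data set: maps an English word to a pre-recorded sign clip.
# The paths are placeholders, not files from any real ISL data set.
SIGN_CLIPS = {
    "hello": "signs/hello.mp4",
    "name": "signs/name.mp4",
}


def clips_for(text, sign_clips=SIGN_CLIPS):
    """Return the list of clips to play for the recognised text.

    Words found in the data set map to one clip each; any other word is
    fingerspelled letter by letter (an assumed fallback strategy).
    """
    clips = []
    for raw_word in text.lower().split():
        word = raw_word.strip(".,!?")
        if not word:
            continue
        if word in sign_clips:
            clips.append(sign_clips[word])
        else:
            # Fingerspelling fallback: one hypothetical clip per letter.
            clips.extend(
                "letters/{}.mp4".format(ch) for ch in word if ch.isalpha()
            )
    return clips
```

Calling `clips_for("Hello Sam.")` would yield the clip for "hello" followed by three letter clips for "sam". A real system would need a much larger vocabulary and a player for the clips or avatar animations.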

AI (Artificial Intelligence) – It is the theory and development of computer systems to be able to perform tasks normally requiring human intelligence, such as visual perception, speech recognition, decision-making, and translation between languages.

ML (Machine Learning) – Machine learning is the science of getting computers to act without being explicitly programmed. The inputs are given as data sets, from which the system learns and tries to give the best possible outcome to the user.

NLP (Natural Language Processing) – It is the application of computational techniques to the analysis and synthesis of natural language and speech.

Platform Used:

Software Implemented through Python

A desktop application is implemented in the Python programming language. Python libraries such as PyAudio capture the audio input, which is then converted to text by a speech-to-text API.

– Python 2.7.x is preferred.

– PyCharm Community Edition IDE.

– Operating System – Ubuntu (Linux).

– ISL/ASL data sets from Google.

Methodology

1. Audio input on a Personal Digital Assistant (PDA) using the Python PyAudio module.

2. Conversion of audio to text using the Google Speech API.

3. Dependency parser for analysing the grammatical structure of the sentence and establishing relationships between words.

4. ISL generator: produces the ISL form of the input sentence using ISL grammar rules.

5. Generation of sign language with a signing avatar.
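Step 4 above can be illustrated with a very small rule-based sketch. It is only an approximation under stated assumptions: it applies two commonly cited simplifications of ISL grammar (articles and linking verbs are dropped, and question words move to the end of the sentence) and does not use a dependency parser, so it cannot reorder subject, object and verb. The names `DROPPED`, `QUESTION_WORDS` and `to_isl_gloss` are hypothetical.

```python
# Words with no sign of their own in this simplified model (assumption):
# English articles and forms of the linking verb "to be".
DROPPED = {"a", "an", "the", "is", "am", "are", "was", "were", "be"}

# Question words, which this sketch moves to the end of the sentence.
QUESTION_WORDS = {"what", "where", "when", "who", "why", "how", "which"}


def to_isl_gloss(sentence):
    """Convert an English sentence to a rough ISL-style gloss (list of words)."""
    words = [w.strip(".,!?").lower() for w in sentence.split()]
    words = [w for w in words if w and w not in DROPPED]
    content = [w for w in words if w not in QUESTION_WORDS]
    questions = [w for w in words if w in QUESTION_WORDS]
    # Glosses are conventionally written in upper case.
    return [w.upper() for w in content + questions]
```

For example, `to_isl_gloss("What is your name?")` gives `['YOUR', 'NAME', 'WHAT']`. Each gloss word would then be mapped to a sign for the avatar to perform in step 5.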

Future Scope

1. Since deaf people often cannot communicate directly with hearing people, they have to rely on an interpreter or some form of visual communication. An interpreter cannot always be available, so this project can help reduce the dependency on one.

2. The system can be extended to incorporate facial expressions and body language, so that the context and tone of the input speech are fully conveyed.

3. A mobile and web-based version of the application would extend its reach to more people.

4. A hand gesture recognition system based on computer vision can be integrated to establish two-way communication.
