Omniglot Classification Task

Last Updated: 30 May, 2021

Before going into the classification task, let us first define what Omniglot is.

Omniglot Dataset: It is a dataset containing 1623 characters from 50 different alphabets, each one hand-drawn by a group of 20 different people. This dataset was created for the study of how humans and machines adapt to one-shot learning, i.e. learning a task from just one example.

Source : https://paperswithcode.com/dataset/omniglot-1

The Omniglot dataset has been prepared in such a way that each character in the above image has been written by 20 different people, so every character comes with 20 hand-drawn samples.

Omniglot Challenge: The Omniglot challenge released to the Machine Learning and AI community is focused on 5 major tasks:

- One Shot Classification

- Parsing

- Generating new exemplars

- Generating new concepts (from type)

- Generating new concepts (unconstrained)

In this article, we will be focusing on the classification task. We will use the one-shot classification technique to perform this task. Before getting into the classification technique, let us understand the intuition behind One-Shot Learning.

One-Shot Learning

When a human sees an object for the first time, they are able to remember a few features of that object. So, whenever they see some other object of the same kind, they are able to identify it immediately. Even if they have seen that object only once, humans are able to associate it with that class. This is known as One-Shot Learning. Let us take an example.

Suppose a person has seen a fridge for the first time on television and wishes to buy one. When that person visits the electronics store, they are able to immediately identify which appliance is a fridge and which is not. This ability of the human brain is what One-Shot Learning tries to imitate.

By definition, one-shot learning is a technique in which a machine classifies new objects into classes after having seen only one example from each of those classes.

Intuition:

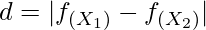

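One-shot learning uses the Siamese Neural Network model. Here, the objective of the model is to find the 'degree of similarity' (d) between 2 images. We find d by using the following formula, written here in its commonly used squared-Euclidean form:

$$d(x_1, x_2) = \lVert f(x_1) - f(x_2) \rVert_2^2$$

where,

d -> degree of similarity between images x1 and x2 (a distance: the smaller the value, the more similar the images)

f(xi) -> 128-D feature vector of image xi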

Now, we find the 128-D feature vector of each image by passing it through a CNN model. This way we obtain all the key features present in a particular image. (As shown in Fig. 2)

Fig 2: Extracting 128-D FV from Input Image
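To make the distance computation concrete, here is a minimal sketch in Python with NumPy. It assumes d is the squared Euclidean distance between two feature vectors, and it uses random 128-D vectors as stand-ins for CNN outputs. The function name `similarity_distance` and the toy vectors are illustrative assumptions, not part of any particular library.

```python
import numpy as np

def similarity_distance(emb_a: np.ndarray, emb_b: np.ndarray) -> float:
    """Squared Euclidean distance between two feature vectors (assumed form of d)."""
    diff = emb_a - emb_b
    return float(np.dot(diff, diff))

# Stand-in feature vectors; in practice these would be f(x) produced by the CNN.
rng = np.random.default_rng(0)
anchor = rng.normal(size=128)
same_char = anchor + 0.05 * rng.normal(size=128)  # slightly perturbed copy: "same character"
other_char = rng.normal(size=128)                 # unrelated vector: "different character"

d_same = similarity_distance(anchor, same_char)
d_other = similarity_distance(anchor, other_char)

# The similar pair should end up with the smaller distance.
print(d_same < d_other)
```

A smaller value of d means the two images are treated as more similar, which is the property the rest of the pipeline relies on.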

Training Phase:

During the training phase of the Siamese Network model, we use the triplet loss function. Each training example is a triplet of 128-D feature vectors: an Anchor image, a Positive image that shows the same character as the Anchor, and a Negative image that shows a different character from the dataset.

We train the model on these sets of 3 images so that the distance between the two similar images (Anchor and Positive) becomes small, while the distance between the Anchor and the Negative becomes large. The smaller the distance, the more similar the two images are (as shown in Fig 3, Step 7).

Fig 3: One Shot Learning Siamese Networks

Fig 3 shows the steps involved in properly implementing the Siamese Network Model.
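The triplet loss described in this section can be sketched as follows. This is a minimal NumPy version that assumes the squared Euclidean distance and the commonly used margin formulation, max(d(A, P) - d(A, N) + margin, 0). The margin value of 0.2 and the toy 2-D vectors are assumptions chosen only for illustration.

```python
import numpy as np

def squared_distance(u, v) -> float:
    """Squared Euclidean distance between two feature vectors."""
    diff = np.asarray(u, dtype=float) - np.asarray(v, dtype=float)
    return float(np.dot(diff, diff))

def triplet_loss(anchor, positive, negative, margin: float = 0.2) -> float:
    """Zero once the negative is farther from the anchor than the positive by at least `margin`."""
    d_pos = squared_distance(anchor, positive)
    d_neg = squared_distance(anchor, negative)
    return max(d_pos - d_neg + margin, 0.0)

# Toy 2-D feature vectors (real ones would be 128-D CNN outputs).
anchor = [0.0, 0.0]
positive = [0.1, 0.0]   # close to the anchor
negative = [1.0, 0.0]   # far from the anchor

well_separated = triplet_loss(anchor, positive, negative)  # constraint already satisfied
violated = triplet_loss(anchor, negative, positive)        # roles swapped: constraint violated

print(well_separated, violated)
```

When the loss is zero, the triplet no longer contributes to training; only triplets that violate the margin push the network to adjust its feature vectors.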

Testing Phase:

As discussed above, the triplet loss function uses multiple combinations of 3 images (the anchor, the positive and the negative) for training. During the testing phase, we will be looking at different ways of one-shot classification with varying sizes of the support set.

N Support Set: It is a set of N images, one for each candidate class, that are matched with the newly received image during the testing phase.

We find the similarity distance d between each image in the support set and the new input image. The support image that shows the most similarity (the smallest value of d) determines the class the new image will be classified into.
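The matching step above amounts to a nearest-neighbour search over the support set. Below is a minimal sketch, assuming the feature vectors have already been computed and that d is the squared Euclidean distance. The function name `classify_one_shot`, the labels, and the 2-D toy vectors are hypothetical.

```python
import numpy as np

def classify_one_shot(query_emb, support_embs, support_labels):
    """Return the label of the support image closest to the query, plus all distances."""
    query_emb = np.asarray(query_emb, dtype=float)
    support_embs = np.asarray(support_embs, dtype=float)
    # Squared Euclidean distance from the query to every support feature vector.
    distances = np.sum((support_embs - query_emb) ** 2, axis=1)
    best = int(np.argmin(distances))
    return support_labels[best], distances

# A 3-way support set with one toy feature vector per class.
support_embs = [[0.0, 0.0], [1.0, 1.0], [5.0, 5.0]]
support_labels = ["alpha", "beta", "gamma"]

label, distances = classify_one_shot([0.9, 1.2], support_embs, support_labels)
print(label)  # the query lies closest to the "beta" support vector
```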

Types of N-way classifications

Some commonly used versions of N-way classification are given below:

- 4-Way one-shot classification

- 10-Way one-shot classification

- 20-Way one-shot classification

Refer to Fig 4 for a better understanding of the working of N-way classification.

Fig 4 : Testing via N-way one Shot Classification

As shown in the above image, in an N-way one-shot classification, exactly one image in the support set belongs to the same class as the input test image, so it should be more similar to the test image than the other (N-1) support images. We can set the value of N as per our need, so it is important to understand how the choice of N affects the task.
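A single N-way one-shot test case can be assembled along the following lines. This sketch assumes the dataset is available as a dictionary that maps each character class to its 20 image identifiers. The dictionary layout, the identifier strings, and the helper name `sample_episode` are all hypothetical and not tied to any specific Omniglot loader.

```python
import random

def sample_episode(images_by_class, n_way, rng):
    """Pick N classes, one support image per class, and one query image from a target class."""
    classes = rng.sample(sorted(images_by_class), n_way)
    target_class = rng.choice(classes)
    # Draw two distinct images of the target class: one query, one support.
    query, target_support = rng.sample(images_by_class[target_class], 2)
    support = []
    for cls in classes:
        if cls == target_class:
            support.append((cls, target_support))
        else:
            support.append((cls, rng.choice(images_by_class[cls])))
    return query, target_class, support

# Toy stand-in for the dataset: 30 characters, 20 drawings each.
images_by_class = {
    f"char_{c:02d}": [f"char_{c:02d}_drawer_{d:02d}" for d in range(20)]
    for c in range(30)
}

rng = random.Random(0)
query, target_class, support = sample_episode(images_by_class, n_way=4, rng=rng)
print(target_class, len(support))
```

Keeping the query image out of the support set matters: otherwise the model could match the test image to an identical copy of itself.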

Importance of N:

As the value of N increases, the complexity of the support set also increases, because the test image must be told apart from more candidate classes. So, there is a higher probability of the test image falling into the wrong class. If the value of N is small, the features of the images in the support set are more easily distinguishable, which makes the classification task easier.
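The effect of N can be illustrated with a small simulation. The sketch below does not use the Omniglot images or a trained network. It assumes random 8-D vectors as support feature vectors and models the query as its class vector plus Gaussian noise, so the numbers only illustrate the trend. The function name and all parameter values are assumptions.

```python
import numpy as np

def simulate_accuracy(n_way, trials=1000, dim=8, noise=1.0, seed=0):
    """Fraction of simulated N-way one-shot trials in which the nearest support vector is correct."""
    rng = np.random.default_rng(seed)
    correct = 0
    for _ in range(trials):
        support = rng.normal(size=(n_way, dim))     # one feature vector per class
        target = int(rng.integers(n_way))           # true class of the query
        query = support[target] + noise * rng.normal(size=dim)
        distances = np.sum((support - query) ** 2, axis=1)
        correct += int(np.argmin(distances) == target)
    return correct / trials

n_values = (4, 10, 20)
accuracy = {n: simulate_accuracy(n) for n in n_values}
chance = {n: 1.0 / n for n in n_values}  # accuracy of random guessing

for n in n_values:
    print(f"{n}-way: simulated accuracy {accuracy[n]:.3f}, chance level {chance[n]:.3f}")
```

Under these assumptions, accuracy drops as N grows, and so does the chance level 1/N, which is why results for different values of N are not directly comparable.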

So, as per our requirement, we must choose an appropriate N-way one-shot classification setup during the testing phase to get the optimum results.