Faster R-CNN | ML

Last Updated: 23 Aug, 2023

Identifying and localizing objects within images or video streams is one of the key tasks in computer vision. With the arrival of deep learning, the field has grown significantly. Faster R-CNN, a major breakthrough, has reshaped how objects are detected and categorized in real-world images. It builds on the earlier R-CNN and Fast R-CNN architectures. The R-CNN family operates in the following phases:

- Region proposal to identify possible locations in an image where objects might be present.

- CNN to extract important features.

- Classification to predict the class.

- Regression to fine-tune object bounding box coordinates.

Both R-CNN and Fast R-CNN rely on a CPU-based region proposal algorithm (Selective Search), which makes proposal generation slow. Faster R-CNN instead generates region proposals with a convolutional network that acts as an attention mechanism for the detector, which significantly reduces the time and also improves the accuracy of object detection and localization in images. In this post, we will look into the technical details of Faster R-CNN, learning about its architecture, benefits, and applications.

Faster R-CNN

Faster R-CNN, short for “Faster Region-Convolutional Neural Network”, is a state-of-the-art object detection architecture of the R-CNN family, introduced by Shaoqing Ren, Kaiming He, Ross B. Girshick, and Jian Sun in 2015. The primary goal of the Faster R-CNN network is to provide a unified architecture that not only detects objects within an image but also locates them precisely. It combines convolutional neural networks (CNNs) and region proposal networks (RPNs) into a single cohesive network, which significantly improves the speed and accuracy of the model.

The Faster R-CNN architecture consists of two components:

- Region Proposal Network (RPN)

- Fast R-CNN detector

Faster R-CNN

Before discussing the RPN and the Fast R-CNN detector, let’s understand the shared convolutional layers that work as the backbone of the Faster R-CNN architecture. These are the common CNN layers used by both the RPN and the Fast R-CNN detector, as shown in the figure.

Convolutional Neural Network (CNN) Backbone

The Convolutional Neural Network (CNN) backbone forms the first layers of the Faster R-CNN architecture. The input image is passed through a CNN backbone (e.g., ResNet, VGG) to extract feature maps. These feature maps capture different levels of visual information from the image and are then used by both the Region Proposal Network (RPN) and the Fast R-CNN detector. Let’s look at the role of the CNN backbone in detail:

- The primary objective of the CNN is to extract the relevant features from the input image. It consists of multiple convolutional layers that apply different convolution kernels to extract features from the input image.

- These kernels learn hierarchical representations of the input image: the initial layers of the CNN capture low-level features such as edges and textures, while deeper layers capture high-level semantic features such as object parts and shapes.

- Both the RPN and the Fast R-CNN detector use the same extracted hierarchical features. This results in a significant reduction in computing time and memory use, because the computations carried out by these layers serve both tasks.

Region Proposal Network (RPN)

The earlier R-CNN and Fast R-CNN models use the traditional Selective Search algorithm to generate region proposals. It runs on the CPU, so it takes more time to compute. Faster R-CNN fixes this by introducing a convolutional network, the RPN, which reduces the proposal time for each image from 2 seconds to 10 ms and improves feature representation by sharing layers with the detection stage.

The Region Proposal Network (RPN) is an essential component of Faster R-CNN. It is responsible for generating possible regions of interest (region proposals) in images that may contain objects. It acts as an attention mechanism that tells the subsequent Fast R-CNN detector where to look for objects in the image. The key components of the Region Proposal Network are as follows:

- Anchor boxes: Anchors are used to generate region proposals in the Faster R-CNN model. The RPN uses a set of predefined anchor boxes with various scales and aspect ratios. These anchor boxes are placed at different positions on the feature maps. An anchor box has two key parameters: scale and aspect ratio.

- Sliding Window approach: The RPN operates as a sliding window mechanism over the feature map obtained from the CNN backbone. It uses a small convolutional network (typically a 3×3 convolutional layer) to process the features within the receptive field of the sliding window. This convolutional operation produces scores indicating the likelihood of an object’s presence and regression values for adjusting the anchor boxes.

- Objectness Score: The objectness score represents the probability that a given anchor box contains an object of interest rather than just background. In Faster R-CNN, the RPN predicts this score for each anchor. During training, anchors are labeled as either positive (object) or negative (background), and the score is trained against these labels.

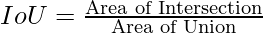

- IoU (Intersection over Union): Intersection over Union (IoU) is a metric used to measure the degree of overlap between two bounding boxes. It calculates the ratio of the area of overlap between the two boxes to the area of their union. Mathematically, it is represented as:

$$\text{IoU}(A, B) = \frac{|A \cap B|}{|A \cup B|} = \frac{\text{Area of Overlap}}{\text{Area of Union}}$$

- Non-Maximum Suppression (NMS): NMS is used to remove redundant proposals. Among a group of overlapping proposals, it compares their objectness scores, keeps only the proposal with the highest score, and suppresses the others.
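The IoU definition above can be sketched in a few lines of plain Python. This is a minimal illustration, assuming boxes are given as `(x1, y1, x2, y2)` corner coordinates; the function name `iou` and that box format are choices made for this example, not something the article prescribes.

```python
def iou(box_a, box_b):
    """Intersection over Union of two boxes given as (x1, y1, x2, y2)."""
    # Corners of the intersection rectangle.
    ix1 = max(box_a[0], box_b[0])
    iy1 = max(box_a[1], box_b[1])
    ix2 = min(box_a[2], box_b[2])
    iy2 = min(box_a[3], box_b[3])

    # Width and height are clamped at zero when the boxes do not overlap.
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)

    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


# Two 10x10 boxes sharing a 5x5 corner: overlap 25, union 175.
print(iou((0, 0, 10, 10), (5, 5, 15, 15)))  # 25 / 175, about 0.143
```

Identical boxes give an IoU of 1.0 and disjoint boxes give 0.0, which is what makes the metric convenient both for labeling anchors and for suppressing duplicates.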

The feature maps obtained from the CNN backbone are used by the RPN. On these feature maps, the RPN uses a sliding window approach with anchor boxes of varying scales and shapes to designate potential object positions. During training, the network learns to refine these anchor boxes to better match actual object positions and sizes. For each anchor, the RPN predicts two parameters:

- The probability of the anchor containing an object (the “objectness score”).

- Adjustments to the anchor’s coordinates to match the actual object’s shape.
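To make the second output concrete, here is a small sketch of how predicted adjustments can be applied to an anchor. It assumes the parameterization used in the Faster R-CNN paper, where the offsets `(tx, ty)` shift the anchor center in units of the anchor size and `(tw, th)` scale its width and height on a log scale. The function name `decode_box` and the corner-format boxes are assumptions made for this illustration.

```python
import math


def decode_box(anchor, deltas):
    """Apply predicted deltas (tx, ty, tw, th) to an anchor (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = anchor
    aw, ah = ax2 - ax1, ay2 - ay1                 # anchor width and height
    acx, acy = ax1 + 0.5 * aw, ay1 + 0.5 * ah     # anchor center

    tx, ty, tw, th = deltas
    cx = acx + tx * aw         # center shift is relative to the anchor size
    cy = acy + ty * ah
    w = aw * math.exp(tw)      # size change is predicted in log space
    h = ah * math.exp(th)

    return (cx - 0.5 * w, cy - 0.5 * h, cx + 0.5 * w, cy + 0.5 * h)


# Zero deltas leave the anchor unchanged.
print(decode_box((0, 0, 10, 10), (0, 0, 0, 0)))
```

Predicting relative offsets instead of absolute coordinates keeps the regression targets in a similar numeric range for small and large anchors.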

When a large number of region proposals are generated, many of them may overlap and correspond to the same object. Non-Maximum Suppression (NMS) is used here: it ranks the anchor boxes by their objectness probabilities, suppresses boxes that overlap heavily with a higher-scoring box, and the top-N remaining boxes with the highest scores are selected. NMS ensures that the final selected proposals are confident and largely non-overlapping. These selected anchor boxes are treated as the region proposals.
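The ranking and suppression step described above can be sketched as a greedy loop. This is a minimal, unoptimized illustration: the names `nms`, `iou_threshold`, and `top_n` are assumptions for this example, and the default threshold of 0.7 follows the value reported for RPN proposals in the Faster R-CNN paper.

```python
def iou(a, b):
    """IoU of two (x1, y1, x2, y2) boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.7, top_n=None):
    """Greedy NMS: return indices of kept boxes, highest score first."""
    # Visit boxes from the most to the least confident.
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with any kept box.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
            if top_n is not None and len(keep) == top_n:
                break
    return keep


boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (50, 50, 60, 60)]
scores = [0.9, 0.8, 0.7]
print(nms(boxes, scores, iou_threshold=0.5))  # [0, 2]
```

In the example, the second box overlaps the first with an IoU of about 0.68, so it is suppressed at a threshold of 0.5, while the distant third box survives.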


Region Proposal Network (RPN)

Fast R-CNN detector

The Fast R-CNN detector is a critical component of the Faster R-CNN architecture, responsible for detecting objects within the region proposals suggested by the Region Proposal Network.

Let’s understand how the Fast R-CNN detector operates in Faster R-CNN.

- Region of Interest (RoI) Pooling: The first step is to take the region proposals suggested by the RPN and apply RoI pooling. RoI pooling transforms the RPN’s variable-sized region proposals into fixed-size feature maps that can be fed into the network’s subsequent layers. It divides each region proposal into a grid of roughly equal-sized cells and then applies max pooling within each cell. This procedure generates a fixed-size feature map for each region proposal, which can be further processed by the network.

- Feature Extraction: The RoI-pooled features are taken from the shared feature maps produced by the CNN backbone (the same ones used by the RPN), so the hierarchical features computed once for the whole image are reused for every region proposal. These features retain spatial information while abstracting away low-level details, allowing the network to understand the proposed regions’ content.

- Fully Connected Layers: The RoI-pooled features then pass through a series of fully connected layers. These layers are responsible for the object classification and bounding box regression tasks.

- Object Classification: The network predicts class probabilities for each region proposal, indicating how likely it is that the proposal contains an object of a specific class. The prediction is computed from the features pooled for that region proposal, which in turn come from the shared CNN backbone.

- Bounding Box Regression: In addition to class probabilities, the network predicts bounding box adjustments for each region proposal. These adjustments refine the position and size of the bounding box of the region proposal, making it more accurate and better aligned with the actual object boundaries.

The detector ends in two sibling output layers. The first is a softmax layer with N+1 outputs (N object classes plus one background class) that predicts the class of the object in the region proposal. The second is a bounding box regression layer with 4×N outputs (four box coordinates for each of the N classes) that regresses the bounding box location of the object in the image.
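The RoI pooling step described above can be illustrated on a tiny single-channel feature map. This is a simplified sketch, not the detector’s actual implementation: it assumes the RoI is already expressed in feature-map cells as `(x1, y1, x2, y2)` with exclusive upper bounds and lies inside the map, and the name `roi_max_pool` is made up for this example.

```python
def roi_max_pool(feature_map, roi, output_size=(2, 2)):
    """Max-pool one RoI of a 2-D feature map (list of rows) to a fixed grid."""
    x1, y1, x2, y2 = roi
    out_h, out_w = output_size
    roi_h, roi_w = y2 - y1, x2 - x1

    pooled = []
    for i in range(out_h):
        # Row range covered by grid row i (at least one row per cell).
        r0 = y1 + (i * roi_h) // out_h
        r1 = max(r0 + 1, y1 + ((i + 1) * roi_h) // out_h)
        row = []
        for j in range(out_w):
            # Column range covered by grid column j.
            c0 = x1 + (j * roi_w) // out_w
            c1 = max(c0 + 1, x1 + ((j + 1) * roi_w) // out_w)
            # Max pooling inside the cell.
            row.append(max(feature_map[r][c]
                           for r in range(r0, r1)
                           for c in range(c0, c1)))
        pooled.append(row)
    return pooled


fm = [[0, 1, 2, 3],
      [4, 5, 6, 7],
      [8, 9, 10, 11],
      [12, 13, 14, 15]]
print(roi_max_pool(fm, (0, 0, 4, 4)))  # [[5, 7], [13, 15]]
print(roi_max_pool(fm, (1, 1, 4, 3)))  # [[5, 7], [9, 11]]
```

Both RoIs, although different in size, come out as 2×2 grids, which is what allows the following fully connected layers to accept any proposal.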

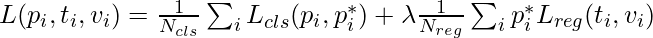

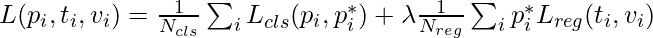

- Multi-task Loss Function: A multi-task loss function that combines classification and regression losses is used by the Fast R-CNN detector. The classification loss computes the difference between expected and true class probabilities. The regression loss computes the difference between expected and actual bounding box adjustments.

The combined loss can be written as:

$$L(\{p_i\}, \{t_i\}) = \frac{1}{N_{cls}} \sum_i L_{cls}(p_i, p_i^*) + \lambda \frac{1}{N_{reg}} \sum_i p_i^* \, L_{reg}(t_i, t_i^*)$$

Where:

- $N_{cls}$ is the number of RoIs used for classification.

- $N_{reg}$ is the number of RoIs used for bounding box regression.

- $p_i$ is the predicted probability for the i-th RoI.

- $p_i^*$ is the ground-truth indicator (1 or 0) for the i-th RoI being a foreground or background object.

- $t_i^*$ represents the ground-truth bounding box parameters for the i-th RoI.

- $t_i$ represents the predicted bounding box adjustments for the i-th RoI.

- $L_{cls}$ is the classification loss function, often computed using cross-entropy loss.

- $L_{reg}$ is the regression loss function, often computed using smooth L1 loss.

- $\lambda$ is a balancing parameter that controls the trade-off between the two components of the loss.

- Post-Processing: After the network predicts class probabilities and bounding box changes, the final detection results are refined using a post-processing procedure. In this step, non-maximum suppression (NMS) is used to reduce redundant detections while retaining the most confident and non-overlapping detections.
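The multi-task loss described above can be sketched in plain Python. This is an illustrative version under stated assumptions: the classification term is written as binary cross-entropy over a foreground probability, the regression term uses smooth L1 and is counted only for foreground samples, and the default `lam=10.0` follows the value reported in the Faster R-CNN paper. The function and argument names are hypothetical.

```python
import math


def smooth_l1(x):
    """Smooth L1: quadratic near zero, linear for large errors."""
    ax = abs(x)
    return 0.5 * x * x if ax < 1.0 else ax - 0.5


def multitask_loss(probs, labels, pred_boxes, gt_boxes,
                   lam=10.0, n_cls=None, n_reg=None):
    """Classification loss plus lam-weighted box regression loss."""
    n_cls = n_cls or len(probs)   # normalizer for the classification term
    n_reg = n_reg or len(probs)   # normalizer for the regression term
    eps = 1e-12                   # avoids log(0)

    cls_sum, reg_sum = 0.0, 0.0
    for p, p_star, t, t_star in zip(probs, labels, pred_boxes, gt_boxes):
        # Binary cross-entropy between predicted and true label.
        cls_sum += -(p_star * math.log(p + eps)
                     + (1 - p_star) * math.log(1 - p + eps))
        # Box regression only contributes for foreground samples (p_star = 1).
        reg_sum += p_star * sum(smooth_l1(a - b) for a, b in zip(t, t_star))

    return cls_sum / n_cls + lam * reg_sum / n_reg


loss = multitask_loss(
    probs=[0.9, 0.2],
    labels=[1, 0],
    pred_boxes=[(0.1, 0.0, 0.2, 0.0), (0.5, 0.5, 0.5, 0.5)],
    gt_boxes=[(0.0, 0.0, 0.0, 0.0), (0.0, 0.0, 0.0, 0.0)],
)
print(loss)
```

Note that the second sample is background, so its box prediction does not affect the loss no matter how far off it is.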

Step by Step Faster R-CNN Process:

- Input and Shared Convolutional Layers:

- The RPN takes an image of any size as input and aims to produce rectangular object proposals based on the objectness score.

- The input image is processed by a fully convolutional network. Because these computations are shared between the RPN and the Fast R-CNN object detection network, a common set of convolutional layers is used.

- Two fully convolutional models are commonly used:

- Zeiler and Fergus model (ZF): It has 5 shareable convolutional layers.

- Simonyan and Zisserman model (VGG-16): It has 13 shareable convolutional layers.

- Sliding Window Approach:

- The RPN generates proposals by sliding a small network over the convolutional feature map output obtained from the last shared convolutional layer.

- This small network operates on an n × n spatial window of the input convolutional feature map.

- Each sliding window is mapped to a lower-dimensional feature vector of size:

- 256-d for Zeiler and Fergus model (ZF)

- 512-d for Simonyan and Zisserman model (VGG-16)

- This is followed by a Rectified Linear Unit (ReLU) activation.

- The small network works in a sliding-window manner across the feature map.

- The fully-connected layers are shared across all spatial locations due to the sliding-window operation.

- The sliding window architecture is effectively realized using an n × n convolutional layer, followed by two 1 × 1 convolutional layers for box regression and box classification.

- The sliding window, typically of size n × n with n = 3, is moved across the feature map. Even with n = 3, the effective receptive field on the input image is large (171 pixels for ZF and 228 pixels for VGG).

- Anchor Boxes:

- For each sliding-window position, K region proposals are generated. Each proposal is defined relative to an anchor box, which is parameterized by scale and aspect ratio.

- Multiple anchor boxes are created by varying these parameters. This creates a set of anchor boxes, usually K=9 (3 scales × 3 aspect ratios), allowing the model to consider various object sizes and shapes. These anchor variations let the model handle objects at different scales while still sharing features between the RPN and Fast R-CNN.

- Anchors enable the use of a single image at a single scale while still achieving scale-invariant object detection. This eliminates the need for multiple images or filters for handling different scales.

- Sibling Fully-Connected Layers:

- The lower-dimensional feature extracted from each sliding window, a vector of length 256 (for ZF net) or 512 (for VGG-16 net), is fed into two sibling fully-connected (FC) layers:

- Box-Classification Layer (cls): The “cls” FC layer is a binary classifier that assigns an objectness score to each region proposal, indicating whether the proposal contains an object or is part of the background.

- Box-Regression Layer (reg): The “reg” FC layer predicts adjustments for the bounding box of the proposed region. It returns a 4-D vector that defines the bounding box of the region proposal.

- The “cls” FC layer produces two outputs: one for classifying the region as background and another for classifying the region as an object. The objectness score assigned to each anchor helps generate the classification label.
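The anchor step above can be made concrete with a short sketch that enumerates K=9 anchors per feature-map position. It assumes the settings reported in the Faster R-CNN paper: three scales with box areas of 128², 256², and 512² pixels, three aspect ratios (1:2, 1:1, 2:1), and a feature-map stride of 16 pixels. The function names and the choice to center anchors on the middle of each cell are assumptions made for this illustration.

```python
def base_anchors(scales=(128, 256, 512), ratios=(0.5, 1.0, 2.0)):
    """Return len(scales) * len(ratios) anchors centered at (0, 0)."""
    anchors = []
    for s in scales:
        area = float(s * s)
        for r in ratios:              # r = height / width
            w = (area / r) ** 0.5     # keeps w * h equal to the target area
            h = w * r
            anchors.append((-w / 2, -h / 2, w / 2, h / 2))
    return anchors


def all_anchors(feat_h, feat_w, stride=16, **kwargs):
    """Place the base anchors at every position of a feat_h x feat_w map."""
    base = base_anchors(**kwargs)
    out = []
    for y in range(feat_h):
        for x in range(feat_w):
            # Center of this feature-map cell in input-image pixels.
            cx, cy = (x + 0.5) * stride, (y + 0.5) * stride
            out.extend((cx + x1, cy + y1, cx + x2, cy + y2)
                       for x1, y1, x2, y2 in base)
    return out


k = len(base_anchors())
print(k)                          # 9 anchors per position
print(len(all_anchors(40, 60)))   # 40 * 60 * 9 = 21600 anchors
print(2 * k, 4 * k)               # cls and reg outputs per position: 18 36
```

With K anchors per position, the “cls” layer produces 2K scores and the “reg” layer produces 4K coordinates at each sliding-window location.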

End-to-End Faster R-CNN Training

The Region Proposal Network (RPN) is trained end-to-end using backpropagation and stochastic gradient descent (SGD). This means that the entire network, including the newly added RPN layers and the shared convolutional layers, is optimized together to minimize the loss function.

- Image-Centric Sampling: The training approach employed is “image-centric” sampling, in which each mini-batch is derived from a single image. This image contains both positive (object-containing) and negative (background) example anchors. Instead of optimizing for the loss function of all anchors, the network randomly picks 256 anchors from the image to calculate the loss for the mini-batch. The sampled positive and negative anchors are balanced at a ratio of up to 1:1.

- Positive and Negative Anchors Ratio: To overcome the potential bias towards negative samples, which dominate in most images, the training aims for a balanced mix of positive and negative examples in each mini-batch. If an image has fewer than 128 positive samples, the mini-batch is padded with negative samples so that it still contains 256 anchors.

- Layer Initialization: New layers added to the architecture are initialized by drawing weights from a Gaussian distribution with a mean of zero and a standard deviation of 0.01. This random initialization is applied to the layers specific to the RPN. On the other hand, existing layers (shared convolutional layers) are initialized using weights pretrained on the ImageNet classification task, following standard practice.

- Learning Rate and Momentum: On the PASCAL VOC dataset, the learning rate is set to 0.001 for the first 60,000 mini-batches and is dropped to 0.0001 for the following 20,000 mini-batches. A momentum value of 0.9 is used, which controls how much of the previous parameter update carries over into the current one. A weight decay of 0.0005 is also used to control overfitting.
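The sampling rule and learning-rate schedule described above can be sketched as follows. This is a minimal illustration using the numbers quoted in the text (256 anchors per mini-batch, at most 128 positives, learning rate 0.001 then 0.0001). The label convention (1 = positive, 0 = negative, -1 = ignored) and the function names are assumptions for this example.

```python
import random


def sample_anchors(labels, batch_size=256, max_pos_fraction=0.5, rng=None):
    """Pick anchor indices for one image-centric mini-batch.

    labels: 1 for positive anchors, 0 for negative, -1 for ignored ones.
    Returns (positive_indices, negative_indices).
    """
    rng = rng or random.Random()
    pos = [i for i, lab in enumerate(labels) if lab == 1]
    neg = [i for i, lab in enumerate(labels) if lab == 0]

    # At most half of the mini-batch is positive (ratio of up to 1:1).
    n_pos = min(len(pos), int(batch_size * max_pos_fraction))
    # Negatives fill whatever is left, padding when positives are scarce.
    n_neg = min(len(neg), batch_size - n_pos)
    return rng.sample(pos, n_pos), rng.sample(neg, n_neg)


def learning_rate(iteration):
    """Step schedule quoted for PASCAL VOC: 0.001 for 60k steps, then 0.0001."""
    return 0.001 if iteration < 60000 else 0.0001


labels = [1] * 10 + [0] * 1000 + [-1] * 5
pos_idx, neg_idx = sample_anchors(labels, rng=random.Random(0))
print(len(pos_idx), len(neg_idx))  # 10 246
```

In the example only 10 positive anchors exist, so 246 negatives are drawn to complete the 256-anchor mini-batch.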

Results and Conclusion

- Because region proposal generation with Selective Search is the bottleneck of the Fast R-CNN architecture, Faster R-CNN replaces it with its own Region Proposal Network. The RPN is faster than Selective Search, and because it is trained together with the detector, the quality of its proposals also improves during training. This reduces the overall detection time compared to Fast R-CNN (0.2 seconds with Faster R-CNN using the VGG-16 network, compared to 2.3 seconds with Fast R-CNN).

- Faster R-CNN (with RPN and VGG sharing convolutional layers), when trained on the COCO, VOC 2007 and VOC 2012 datasets, achieves an mAP of 78.8% on the VOC 2007 test set, against 70% for Fast R-CNN.

- Compared to Selective Search, the Region Proposal Network (RPN) also contributed marginally to the improvement in mAP.
