Cross Site Scripting (XSS) Prevention Techniques

Last Updated: 02 Oct, 2020

XSS, or Cross-Site Scripting, is a web application vulnerability that allows an attacker to inject malicious JavaScript into a website. An attacker exploits it on websites that do not sanitize user-controlled content, or that sanitize it poorly. With injected script, an attacker can, among other things:

- Steal cookies.

- Deface a website.

- Bypass CSRF protection.

There are multiple ways in which a web application can protect itself from Cross-Site Scripting issues. These include:

- Blacklist filtering.

- Whitelist filtering.

- Contextual Encoding.

- Input Validation.

- Content Security Policy.

1. Blacklist Filtering

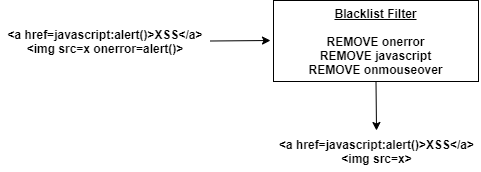

Blacklist filtering is easy to implement, but it protects a website from XSS only partially. It works from a finite list of known XSS vectors. For example, most XSS vectors use event-listener attributes such as onerror, onmouseover and onkeypress. Using this fact, the attributes in user-supplied HTML can be parsed and these event-listener attributes removed. This mitigates a finite set of XSS vectors such as <img src=x onerror=alert()>.

For vectors like <a href="javascript:alert()">XSS</a>, one may remove the javascript:, data: and vbscript: schemes from user-supplied HTML.
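The two rules above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the names `EVENT_ATTR`, `BAD_SCHEME` and `blacklist_filter` are hypothetical, and the regular expressions cover just the vectors mentioned in this section, not a complete list.

```python
import re

# Event-handler attributes such as onerror=... or onmouseover="..."
# (quoted or unquoted values). Hypothetical pattern for this sketch.
EVENT_ATTR = re.compile(
    r"""\son\w+\s*=\s*("[^"]*"|'[^']*'|[^\s>]*)""", re.IGNORECASE
)
# The URL schemes named above.
BAD_SCHEME = re.compile(r"(javascript|data|vbscript):", re.IGNORECASE)


def blacklist_filter(html: str) -> str:
    """Strip known-bad attributes and schemes; everything else passes through."""
    html = EVENT_ATTR.sub("", html)
    return BAD_SCHEME.sub("", html)


print(blacklist_filter("<img src=x onerror=alert()>"))
print(blacklist_filter('<a href="javascript:alert()">XSS</a>'))
```

Anything that is not on the list is left untouched, which is exactly why this approach is only a partial defence.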

Advantages:

- These filters are easy to implement in a web application.

- There is almost zero risk of false positives, that is, of safe user content being removed by the filter.

Disadvantages:

This filtering can be easily bypassed, because the set of XSS vectors is not finite and a complete list cannot be maintained. It therefore does not protect the website completely. Here are some valid bypasses of this filter:

- <a href="jAvAscRipt:alert()">XSS</a>

- <a href="jAvAs cRipt:alert()">XSS</a>

- <a href="jAvAscRipt:prompt()">XSS</a>
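To make the mixed-case bypass concrete, here is a minimal sketch. It assumes a deliberately naive, hypothetical filter (`naive_scheme_filter`) that removes only the exact lowercase string `javascript:`. Real blacklist filters are usually more elaborate, but they fail in the same way whenever a variant is missing from the list.

```python
def naive_scheme_filter(html: str) -> str:
    """Hypothetical blacklist rule: remove the exact string 'javascript:'."""
    return html.replace("javascript:", "")


payloads = [
    '<a href="javascript:alert()">XSS</a>',   # the vector the filter knows
    '<a href="jAvAscRipt:alert()">XSS</a>',   # mixed-case variant
    '<a href="jAvAscRipt:prompt()">XSS</a>',  # mixed case, different function
]

for payload in payloads:
    filtered = naive_scheme_filter(payload)
    status = "bypassed" if "javascript:" in filtered.lower() else "blocked"
    print(status, "->", filtered)
```

Only the first payload is neutralised. The other two pass through unchanged because their spelling is not on the list.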

2. Whitelist Filtering

Whitelist filtering is the opposite of blacklist filtering. Instead of listing unsafe attributes and sanitizing user HTML against that list, whitelist filtering maintains a set of safe HTML tags and attributes. Only entities that are known to be safe are kept, and everything else is filtered out.

This reduces XSS possibilities as far as possible: XSS becomes possible only when the filter itself has a loophole that treats some unsafe entity as safe. The filtering can be done on both the client side and the server side. Whitelist filtering is the most commonly used kind of filter in modern web applications.
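A minimal sketch of the idea, built on Python's standard `html.parser` module, is shown below. The policy (`ALLOWED_TAGS`, `ALLOWED_ATTRS`, `ALLOWED_SCHEMES`) and the names `WhitelistSanitizer` and `sanitize` are assumptions made for illustration only. A production system would normally rely on a maintained sanitizer library rather than a hand-written one.

```python
from html import escape
from html.parser import HTMLParser
from urllib.parse import urlparse

# Hypothetical policy: only these tags, attributes and URL schemes are kept.
ALLOWED_TAGS = {"b", "i", "p", "a"}
ALLOWED_ATTRS = {"a": {"href"}}
ALLOWED_SCHEMES = {"http", "https"}


def is_safe_url(value: str) -> bool:
    """Accept only absolute URLs whose scheme is on the whitelist."""
    try:
        return urlparse(value).scheme in ALLOWED_SCHEMES
    except ValueError:
        return False


class WhitelistSanitizer(HTMLParser):
    """Parses untrusted HTML and rebuilds it from whitelisted pieces only."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.out = []

    def handle_starttag(self, tag, attrs):
        if tag not in ALLOWED_TAGS:
            return  # unknown tag: drop it
        kept = []
        for name, value in attrs:
            if name not in ALLOWED_ATTRS.get(tag, set()):
                continue  # unknown attribute (e.g. onclick): drop it
            if name == "href" and not is_safe_url(value or ""):
                continue  # javascript:, data:, relative URLs, etc.
            kept.append(f' {name}="{escape(value or "", quote=True)}"')
        self.out.append(f"<{tag}{''.join(kept)}>")

    def handle_endtag(self, tag):
        if tag in ALLOWED_TAGS:
            self.out.append(f"</{tag}>")

    def handle_data(self, data):
        self.out.append(escape(data))  # text is always re-encoded


def sanitize(html: str) -> str:
    parser = WhitelistSanitizer()
    parser.feed(html)
    parser.close()
    return "".join(parser.out)


print(sanitize('<p onclick="x()">hi <b>there</b></p><img src=x onerror=alert()>'))
print(sanitize('<a href="jAvAscRipt:alert()">XSS</a>'))
```

The output is rebuilt from scratch rather than patched, so a vector the author never thought of is dropped by default instead of slipping through. In this sketch, relative links are also dropped because they carry no scheme; that is a simplification, not a recommendation.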

Advantages:

- Reduces XSS possibilities to a very good extent.

- Some whitelist filters, such as AntiSamy, rewrite user content according to safe rules. As a result, the HTML content is rewritten to follow strict HTML standards.

Disadvantages:

These filters usually work by accepting unsafe or unsanitized HTML, parsing it, constructing safe HTML from it, and returning that to the user. This is performance intensive, and heavy use of such filters may have a hidden performance impact on a web application.

3. Contextual Encoding

The other common mitigation technique is to treat all user-supplied data as text and not as HTML, even if it contains HTML. This can be done by performing HTML entity encoding on user data. Encoding <h1>test</h1> converts it to &lt;h1&gt;test&lt;/h1&gt;. The browser then parses this correctly and renders <h1>test</h1> as text instead of rendering it as an h1 HTML tag.
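As a small illustration, Python's standard library provides `html.escape` for HTML entity encoding. The helper names `render_comment` and `render_input` below are hypothetical, and the sketch covers only two contexts, HTML body text and a quoted attribute value. Other contexts, such as inline JavaScript or URLs, need their own encoders.

```python
from html import escape


def render_comment(user_text: str) -> str:
    """HTML body context: encode <, > and & so the text cannot open a tag."""
    return f"<p>{escape(user_text)}</p>"


def render_input(user_text: str) -> str:
    """Quoted attribute context: quotes must be encoded as well."""
    return f'<input value="{escape(user_text, quote=True)}">'


print(escape("<h1>test</h1>"))
print(render_comment("<script>alert()</script>"))
print(render_input('" onmouseover="alert()'))
```

In the attribute case, the encoded quote characters keep the payload inside the `value` attribute, so no new `onmouseover` attribute is created.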

Advantages:

If done correctly, contextual encoding eliminates XSS risk completely.

Disadvantages:

It treats all user data as unsafe. Thus, irrespective of the user data being safe or unsafe, all HTML content will be encoded and will be rendered as plain text.

4. Input Validation

In the input validation technique, a regular expression is applied to the data in every request parameter, that is, to all user-generated content. The content is allowed only if it matches a safe regular expression. Otherwise, the server rejects the request with a 400 response code.
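A minimal sketch of this check for a phone number field follows. The field name `phone`, the pattern `PHONE_RE` and the function `validate_phone` are assumptions for illustration, and the digit range is a hypothetical rule rather than a standard.

```python
import re

# Hypothetical rule: optional leading '+', then 7 to 15 digits.
PHONE_RE = re.compile(r"\+?[0-9]{7,15}")


def validate_phone(params: dict):
    """Return an (HTTP status, reason) pair for the submitted parameters."""
    value = params.get("phone", "")
    # fullmatch requires the whole value to match, not just a prefix.
    if PHONE_RE.fullmatch(value):
        return 200, "OK"
    return 400, "Bad Request"


print(validate_phone({"phone": "+14155550123"}))
print(validate_phone({"phone": "<script>alert()</script>"}))
```

Using `fullmatch` rather than `search` or `match` matters here: a value such as a valid number followed by a script tag must fail as a whole.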

Advantages:

Input validation not only reduces XSS but also protects against many other vulnerabilities that arise from trusting user content.

Disadvantages:

- It might be possible to mitigate XSS in a phone number field with a numeric regular expression, but for a name field it might not be possible, because names can be written in many languages and can contain non-ASCII characters, for example Greek letters or accented Latin letters.

- Regular expression testing is performance intensive. All parameters in all requests to a server must be matched against a regular expression.

5. Content Security Policy

Modern browsers support CSP, or Content Security Policy, headers. With these headers, a website can specify the list of domains from which JavaScript content may be loaded. If an attacker tries to add malicious JavaScript from any other source, the browser enforces the CSP headers and blocks the request.
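As a sketch, the header can be built and attached to a response like this. The host `https://cdn.example.com`, the helper `csp_header` and the minimal WSGI callable `app` are assumptions for illustration. The directive names `default-src` and `script-src` and the `'self'` keyword are standard CSP syntax.

```python
# Hypothetical list of hosts this site trusts to serve its scripts.
TRUSTED_SCRIPT_HOSTS = ["https://cdn.example.com"]


def csp_header(script_sources):
    """Build a CSP header that allows scripts only from the given origins."""
    sources = " ".join(["'self'", *script_sources])
    return ("Content-Security-Policy",
            f"default-src 'self'; script-src {sources}")


def app(environ, start_response):
    """Minimal WSGI application that sends the policy with every page."""
    headers = [
        ("Content-Type", "text/html; charset=utf-8"),
        csp_header(TRUSTED_SCRIPT_HOSTS),
    ]
    start_response("200 OK", headers)
    return [b"<p>hello</p>"]


print(csp_header(TRUSTED_SCRIPT_HOSTS))
```

With this policy, a browser that supports CSP refuses to run inline scripts and scripts loaded from any origin other than the site itself and the listed host.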

Advantages:

CSP is the most advanced XSS protection mechanism. It stops content from untrusted sources from being loaded into the website in any form.

Disadvantages:

For CSP headers to be effective, a website should not use inline JavaScript code. JavaScript should be moved to external files and referenced from script tags. The set of domains that serve this static content must be whitelisted in the CSP headers.

Encoding Vs Filtering –

One common question when mitigating XSS is whether to encode or filter (sanitize) user data. When user-supplied content must be rendered as HTML but JavaScript must not execute, the content must pass through a filter. If user data need not be rendered as HTML and rendering it as text is sufficient, it is recommended to HTML-encode the characters in the user data.

Recommended Mitigation Technique For XSS –

Blacklist filters have been exploited many times, and because HTML keeps growing, relying on a blacklist filter is always unsafe. Although proper input validation and CSP headers can mitigate XSS to a good extent, it is always recommended to either entity-encode user data or filter it with a whitelist policy, depending on the use case. Input validation and CSP headers can then be added as an extra layer of protection.

Reference – https://developer.mozilla.org/en-US/docs/Web/HTTP/CSP
