Productivity, if measured only in lines of code per unit of time, can vary widely with the complexity of the system being developed. A programmer will produce less code for a highly complex system program than for a simple application program. Similarly, complexity has a great impact on the cost of maintaining a program. To quantify complexity beyond the fuzzy notion of the ease with which a program can be constructed or comprehended, metrics that measure the complexity of a program are needed.

A number of metrics have been proposed for quantifying the complexity of a program, and studies have been done to correlate complexity with maintenance effort. One well-known measure is cyclomatic complexity, in which the complexity of a module is the number of independent cycles in the flow graph of the module. In this article, we will discuss a few other complexity measures. Most of these have been proposed in the context of programs, but they can be applied or adapted for detailed design as well.

Size Measures: A complexity measure tries to capture the level of difficulty in understanding a module. In other words, it tries to quantify a cognitive aspect of a program. It is well known that, in general, the larger a module, the more difficult it is to comprehend. Hence, the size of a module can be taken as a simple measure of its complexity. On average, as the size of a module increases, the number of decisions in it is likely to increase, and so its cyclomatic complexity also increases. Though it is clearly possible for two programs of the same size to have substantially different complexities, in general, size is quite strongly related to some complexity measures.

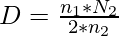

Halstead’s Measure: Halstead also proposed a number of other measures based on his software science. Some of these can be considered complexity measures. They are defined in terms of four variables: n1 (number of unique operators), n2 (number of unique operands), N1 (total frequency of operators), and N2 (total frequency of operands). Any program must have at least two operators, one for function call and one for end of statement, so the ratio n1/2 can be considered the relative level of difficulty due to the larger number of operators in the program. The ratio N2/n2 represents the average number of times an operand is used. In a program in which variables are changed more frequently, this ratio will be larger. As such programs are harder to understand, ease of reading or writing is defined as the product of these two ratios: D = (n1 / 2) × (N2 / n2) = (n1 × N2) / (2 × n2).

Halstead’s complexity measure focuses on the internal complexity of a module, as does McCabe’s complexity measure. Thus, the complexity of the module’s connection with its environment is not given much importance. In Halstead’s measure, a module’s connection with its environment is reflected only in terms of operands and operators: a call to another module is considered an operator, and all the parameters are considered operands of this operator.
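The four Halstead counts can be tallied mechanically once a program has been split into operator and operand occurrences. The sketch below assumes that the tokenization has already been done (the two input lists and the function names are hypothetical), and it takes the difficulty measure to be the product of the two ratios discussed above, (n1 / 2) × (N2 / n2).

```python
from collections import Counter


def halstead_counts(operators, operands):
    """Return (n1, n2, N1, N2) from lists of operator and operand occurrences."""
    operator_freq = Counter(operators)
    operand_freq = Counter(operands)
    n1 = len(operator_freq)            # unique operators
    n2 = len(operand_freq)             # unique operands
    N1 = sum(operator_freq.values())   # total operator occurrences
    N2 = sum(operand_freq.values())    # total operand occurrences
    return n1, n2, N1, N2


def halstead_difficulty(operators, operands):
    """Difficulty taken as (n1 / 2) * (N2 / n2); an assumption of this sketch."""
    n1, n2, _, N2 = halstead_counts(operators, operands)
    if n2 == 0:
        raise ValueError("at least one operand is required")
    return (n1 / 2) * (N2 / n2)


# Hand-tokenized occurrences for the two statements:  x = a + b;  y = x * a;
example_operators = ["=", "+", ";", "=", "*", ";"]
example_operands = ["x", "a", "b", "y", "x", "a"]

print(halstead_counts(example_operators, example_operands))      # (4, 4, 6, 6)
print(halstead_difficulty(example_operators, example_operands))  # 3.0
```

How tokens are classified as operators or operands depends on the language and on the counting rules chosen, so the numbers are only comparable between programs counted the same way.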

Live Variables: In a computer program, a typical assignment statement uses and modifies only a few variables. However, statements generally have a much larger context: to construct or understand a statement, a programmer must keep track of a number of variables other than those directly used in the statement. For a statement, such data items are called live variables. Intuitively, the more live variables there are for its statements, the harder a program will be to understand. Hence, the concept of live variables can be used as a metric for program complexity.

First, let us define live variables more precisely. A variable is considered live from its first to its last reference within a module, including all statements between the first and the last statement where the variable is referenced. Using this definition, the set of live variables for each statement can be computed easily by analysis of the module’s code. The procedure of determining the live variables can easily be automated.

For a statement, the number of live variables represents the degree of difficulty of the statement. This can be extended to the entire module by defining the average number of live variables. The average number of live variables is the sum of the count of live variables (for all executable statements) divided by the number of executable statements. This is a complexity measure for the module.
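As a minimal sketch of this calculation, assume each executable statement has already been reduced to the set of variable names it references (the input format and function names here are hypothetical). A variable is then counted as live at every statement from its first to its last reference, inclusive.

```python
def live_variable_counts(statements):
    """Return the number of live variables at each statement.

    `statements` is a list of sets; each set holds the variables
    referenced by one executable statement, in program order.
    """
    first, last = {}, {}
    for index, referenced in enumerate(statements):
        for name in referenced:
            first.setdefault(name, index)  # keep the first reference only
            last[name] = index             # overwrite until the last reference
    return [
        sum(1 for name in first if first[name] <= index <= last[name])
        for index in range(len(statements))
    ]


def average_live_variables(statements):
    """Sum of per-statement live counts divided by the number of statements."""
    counts = live_variable_counts(statements)
    return sum(counts) / len(counts) if counts else 0.0


# a is live over statements 0-2, b over 1-2, c over 2-3.
module = [{"a"}, {"b"}, {"a", "b", "c"}, {"c"}]
print(live_variable_counts(module))    # [1, 2, 3, 1]
print(average_live_variables(module))  # 1.75
```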

Live variables are defined from the point of view of data usage. The logic of a module is not explicitly included. The logic is used only to determine the first and last statement of reference for a variable. Hence, this concept of complexity is quite different from cyclomatic complexity, which is based entirely on the logic and considers data as secondary.

Another data usage-oriented concept is span, the number of statements between two successive uses of a variable. If a variable is referenced at N different places in a module, then for that variable there are (N – 1) spans. The average span size is the average number of executable statements between two successive references of a variable. A large span implies that the reader of the program has to remember a definition of a variable for a larger period of time (or for more statements). In other words, the span can be considered a complexity measure; the larger the span, the more complex the module.
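Span can be computed from the same kind of input. The sketch below makes two assumptions that the text leaves open: a span is counted as the number of statements strictly between two successive references, and several references to a variable within one statement count as a single reference. The function names are hypothetical.

```python
def spans(statements, variable):
    """Return the list of span sizes for `variable`.

    `statements` is a list of sets of referenced variable names, in
    program order. A variable referenced in N statements yields N - 1 spans.
    """
    positions = [i for i, referenced in enumerate(statements) if variable in referenced]
    # Statements strictly between each pair of successive references.
    return [later - earlier - 1 for earlier, later in zip(positions, positions[1:])]


def average_span(statements, variable):
    """Average span size for one variable, or 0.0 if it has no spans."""
    sizes = spans(statements, variable)
    return sum(sizes) / len(sizes) if sizes else 0.0


module = [{"x"}, {"y"}, {"y"}, {"x", "y"}, {"z"}, {"x"}]
print(spans(module, "x"))         # [2, 1]
print(average_span(module, "x"))  # 1.5
print(spans(module, "y"))         # [0, 0]
```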

Knot Count: A method for quantifying complexity based on the locations of the control transfers of the program has been proposed. It was designed largely for FORTRAN programs, where an explicit transfer of control is shown by the use of goto statements. A programmer, to understand a given program, typically draws arrows from the point of control transfer to its destination, helping to create a mental picture of the program and the control transfers in it. According to this metric, the more intertwined these arrows become, the more complex the program. This notion is captured in the concept of a knot.

A knot is essentially the intersection of two such control transfer arrows. If each statement in the program is written on a separate line, this notion can be formalized as follows. A jump from line a to line b is represented by the pair (a, b). Two jumps (a, b) and (p, q) give rise to a knot if either min(a, b) < min(p, q) < max(a, b) and max(p, q) > max(a, b), or min(a, b) < max(p, q) < max(a, b) and min(p, q) < min(a, b). In other words, exactly one endpoint of the second jump lies strictly inside the range covered by the first, and the other endpoint lies outside it.
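The knot condition translates directly into a pairwise test over all jumps. The following sketch assumes the jumps have already been extracted as (source line, destination line) pairs; the function names are hypothetical.

```python
from itertools import combinations


def forms_knot(first_jump, second_jump):
    """Return True if the two jumps cross, per the min/max condition."""
    a, b = first_jump
    p, q = second_jump
    low1, high1 = min(a, b), max(a, b)
    low2, high2 = min(p, q), max(p, q)
    starts_inside = low1 < low2 < high1 and high2 > high1
    ends_inside = low1 < high2 < high1 and low2 < low1
    return starts_inside or ends_inside


def knot_count(jumps):
    """Count the knots among all unordered pairs of jumps."""
    return sum(1 for j1, j2 in combinations(jumps, 2) if forms_knot(j1, j2))


# (1, 5) and (3, 8) overlap partially, so they cross.
# (6, 7) lies entirely inside (3, 8), so those two do not cross.
print(knot_count([(1, 5), (3, 8), (6, 7)]))  # 1
```

The condition is symmetric in the two jumps, so testing each unordered pair once is sufficient.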

Problems can arise while determining the knot count of programs using structured constructs. One method is to convert such a program into one that explicitly shows control transfers and then compute the knot count. The basic scheme can be generalized to flow graphs, though with flow graphs only bounds can be obtained.

Topological Complexity: A complexity measure that is sensitive to the nesting of structures has been proposed. Like cyclomatic complexity, it is based on the flow graph of a module or program. The complexity of a program is taken to be its maximal intersect number (min).

To compute the maximal intersect number, a flow graph is converted into a strongly connected graph (by drawing an arrow from the terminal node to the initial node). A strongly connected graph drawn in the plane divides it into a finite number of regions. The number of regions is (edges – nodes + 2). If we draw a line that enters each region exactly once, then the number of times this line intersects the arcs in the graph is the maximal intersect number (min), which is taken to be the complexity of the program.
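The region count used in this construction is easy to check on a small graph. The sketch below covers only that first step, not the maximal intersect number itself. It assumes the flow graph is connected and can be drawn in the plane without crossing arcs, and that the edge count includes the added arc from the terminal node to the initial node; the function name and input format are hypothetical.

```python
def region_count(nodes, arcs, initial, terminal):
    """Regions of the flow graph after adding the terminal-to-initial arc.

    `nodes` is an iterable of node ids and `arcs` a list of
    (source, destination) pairs. Uses regions = edges - nodes + 2,
    which counts the outer region as well.
    """
    closed_arcs = list(arcs) + [(terminal, initial)]
    return len(closed_arcs) - len(set(nodes)) + 2


# Flow graph of a single if-then-else: 1 branches to 2 or 3, both join at 4.
nodes = [1, 2, 3, 4]
arcs = [(1, 2), (1, 3), (2, 4), (3, 4)]
print(region_count(nodes, arcs, initial=1, terminal=4))  # 3
```

For the if-then-else graph, the three regions are the inside of the diamond plus the two parts into which the added return arc splits the outside.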
